- How do I get the CUDA version?

- 11 answers

- How to check which CUDA version is installed on Linux

- Check if CUDA is installed and its location with NVCC

- Get CUDA version from CUDA code

- Identifying which CUDA driver version is installed and active in the kernel

- Identifying which GPU card is installed and what version

- Troubleshooting

- How to Check CUDA Version on Ubuntu 18.04

- Prerequisite

- Method 1 — Use nvidia-smi from Nvidia Linux driver

- What is nvidia-smi?

- Method 2 — Use nvcc to check CUDA version on Ubuntu 18.04

- What is nvcc?

- Method 3 — cat /usr/local/cuda/version.txt

- 3 ways to check CUDA version on Ubuntu 18.04

- Linux check cuda version

- 1. Introduction

- 1.1. System Requirements

- 1.2. About This Document

- 2. Pre-installation Actions

- 2.1. Verify You Have a CUDA-Capable GPU

- 2.2. Verify You Have a Supported Version of Linux

- 2.3. Verify the System Has gcc Installed

- 2.4. Verify the System has the Correct Kernel Headers and Development Packages Installed

- RHEL7/CentOS7

- Fedora/RHEL8/CentOS8

- OpenSUSE/SLES

- Ubuntu

- 2.5. Install MLNX_OFED

- 2.6. Choose an Installation Method

- 2.7. Download the NVIDIA CUDA Toolkit

- Download Verification

- 2.8. Handle Conflicting Installation Methods

- 3. Package Manager Installation

- 3.1. Overview

- 3.2. RHEL7/CentOS7

- 3.3. RHEL8/CentOS8

- 3.4. Fedora

- 3.5. SLES

- 3.6. OpenSUSE

- 3.7. WSL

- 3.8. Ubuntu

- 3.9. Debian

- 3.10. Additional Package Manager Capabilities

- 3.10.1. Available Packages

- 3.10.2. Package Upgrades

- 3.10.3. Meta Packages

- 4. Driver Installation

- 5. Precompiled Streams

- 5.1. Precompiled Streams Support Matrix

- 5.2. Modularity Profiles

- 6. Kickstart Installation

- 6.1. RHEL8/CentOS8

- 7. Runfile Installation

- 7.1. Overview

- 7.2. Installation

- 7.3. Disabling Nouveau

- 7.3.1. Fedora

- 7.3.2. RHEL/CentOS

- 7.3.3. OpenSUSE

- 7.3.4. SLES

- 7.3.5. WSL

- 7.3.6. Ubuntu

- 7.3.7. Debian

- 7.4. Device Node Verification

- 7.5. Advanced Options

- 7.6. Uninstallation

- 8. Conda Installation

- 8.1. Conda Overview

- 8.2. Installation

- 8.3. Uninstallation

- 9. Pip Wheels

- 10. Tarball and Zip Archive Deliverables

- 10.1. Parsing Redistrib JSON

- 10.2. Importing into CMake

- 10.3. Importing into Bazel

- 11. CUDA Cross-Platform Environment

- 11.1. CUDA Cross-Platform Installation

- 11.2. CUDA Cross-Platform Samples

- TARGET_ARCH

- TARGET_OS

- TARGET_FS

- Cross Compiling to Embedded ARM architectures

- Copying Libraries

- 12. Post-installation Actions

- 12.1. Mandatory Actions

- 12.1.1. Environment Setup

- 12.1.2. POWER9 Setup

- 12.2. Recommended Actions

- 12.2.1. Install Persistence Daemon

- 12.2.2. Install Writable Samples

- 12.2.3. Verify the Installation

- 12.2.3.1. Verify the Driver Version

- 12.2.3.2. Compiling the Examples

- 12.2.3.3. Running the Binaries

- 12.2.4. Install Nsight Eclipse Plugins

- 12.3. Optional Actions

- 12.3.1. Install Third-party Libraries

- RHEL7/CentOS7

- RHEL8/CentOS8

- Fedora

- OpenSUSE

- Ubuntu

- Debian

- 12.3.2. Install the Source Code for cuda-gdb

- 12.3.3. Select the Active Version of CUDA

- 13. Advanced Setup

- 14. Frequently Asked Questions

- How do I install the Toolkit in a different location?

- Why do I see "nvcc: No such file or directory" when I try to build a CUDA application?

- Why do I see "error while loading shared libraries: : cannot open shared object file: No such file or directory" when I try to run a CUDA application that uses a CUDA library?

- Why do I see multiple "404 Not Found" errors when updating my repository meta-data on Ubuntu?

- How can I tell X to ignore a GPU for compute-only use?

- Why doesn’t the cuda-repo package install the CUDA Toolkit and Drivers?

- How do I get CUDA to work on a laptop with an iGPU and a dGPU running Ubuntu14.04?

- What do I do if the display does not load, or CUDA does not work, after performing a system update?

- How do I install a CUDA driver with a version less than 367 using a network repo?

- How do I install an older CUDA version using a network repo?

- Why does the installation on SUSE install the Mesa-dri-nouveau dependency?

- 15. Additional Considerations

- 16. Removing CUDA Toolkit and Driver

- RHEL8/CentOS8

- RHEL7/CentOS7

- Fedora

- OpenSUSE/SLES

- Ubuntu and Debian

- Notices

- Notice

How do I get the CUDA version?

Is there a quick command or script to check the version of CUDA that is installed?

I found the 4.0 manual in the installation directory, but I'm not sure whether that is the version actually installed.

11 answers

As Jared mentions in a comment, running nvcc --version from the command line gives the CUDA compiler version (which matches the toolkit version).

From application code, you can query the runtime API version with cudaRuntimeGetVersion(), or the driver API version with cudaDriverGetVersion().

As Daniel points out, deviceQuery is an SDK sample application that queries the above, along with the device capabilities.

As others note, you can also check the contents of version.txt (for example, on Mac or Linux) with cat /usr/local/cuda/version.txt.

However, if a CUDA toolkit version other than the one symlinked from /usr/local/cuda is installed, this may report an inaccurate version if that other version comes earlier in your PATH, so use it with caution.
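The check described in this answer can be sketched in shell. This is a minimal sketch, not a definitive script: the helper name nvcc_release is made up for illustration, and it assumes the usual "release X.Y" wording in the last line of nvcc --version output.

```shell
# Extract the "release X.Y" number from `nvcc --version` text read on stdin.
# nvcc_release is a hypothetical helper name, not part of the CUDA toolkit.
nvcc_release() {
    sed -n 's/.*release \([0-9][0-9]*\.[0-9][0-9]*\).*/\1/p' | head -n 1
}

if command -v nvcc >/dev/null 2>&1; then
    # Show which nvcc the shell resolves first, then its toolkit release.
    echo "nvcc on PATH: $(command -v nvcc)"
    echo "toolkit release: $(nvcc --version | nvcc_release)"
else
    echo "nvcc not found on PATH"
fi
```

Printing the resolved path next to the release makes it easier to notice when the nvcc on PATH is not the toolkit that /usr/local/cuda points to.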

На Ubuntu Cuda V8:

$ cat /usr/local/cuda/version.txt или $ cat /usr/local/cuda-8.0/version.txt

иногда папка называется «Cuda-version».

если ничего из вышеперечисленного не работает, попробуйте $ /usr/local/ И найдите правильное имя вашей папки Cuda.

результат должен быть похож на: CUDA Version 8.0.61

Если вы установили CUDA SDK, вы можете запустить «deviceQuery», чтобы увидеть версию CUDA

вы можете найти CUDA-Z полезным, вот цитата с их сайта:

» эта программа родилась как пародия на другие Z-утилиты, такие как CPU-Z и GPU-Z. CUDA-Z показывает некоторую базовую информацию о графических процессорах с поддержкой CUDA и GPGPUs. Он работает с картами nVIDIA Geforce, Quadro и Tesla, ионными чипсетами.»

на вкладке поддержка есть URL для исходного кода: http://sourceforge.net/p/cuda-z/code/ и загрузка на самом деле не является установщиком, а исполняемым файлом (без установки, поэтому это «быстро»).

эта утилита предоставляет множество информации, и если вам нужно знать, как она была получена, есть источник посмотреть. Есть другие утилиты, похожие на это, которые вы можете искать.

после установки CUDA можно проверить версии по: nvcc-V

Я установил как 5.0, так и 5.5, поэтому он дает

инструменты компиляции Cuda, выпуск 5.5, V5.5,0

эта команда работает как для Windows, так и для Ubuntu.

помимо упомянутых выше, ваш путь установки CUDA (если он не был изменен во время установки) обычно содержит номер версии

делать which nvcc должны дать путь, и это даст вам версию

PS: Это быстрый и грязный способ, вышеуказанные ответы более элегантны и приведут к правильной версии со значительными усилиями

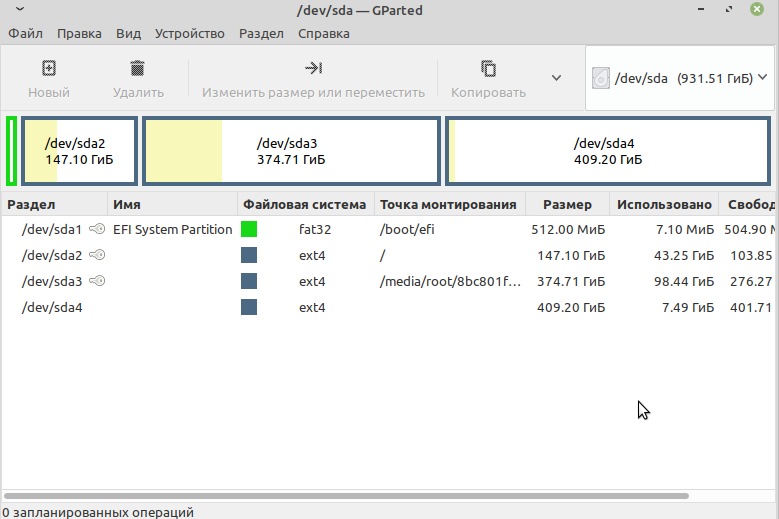

сначала вы должны найти, где установлена Cuda.

Если это установка по умолчанию, такие как здесь расположение должно быть:

в этой папке должен быть файл

откройте этот файл с помощью любого текстового редактора или запустите:

можно узнать cuda версия, набрав в терминале следующее:

кроме того, можно вручную проверьте версию, сначала выяснив каталог установки с помощью:

а то cd в этот каталог и проверьте версию CUDA.

Я получаю /usr / local — нет такого файла или каталога. Хотя nvcc -V дает

для версии CUDA:

для версии cuDNN:

используйте следующее, чтобы найти путь для cuDNN:

затем используйте это, чтобы получить версию из файла заголовка,

используйте следующее, чтобы найти путь для cuDNN:

затем используйте это, чтобы сбросить версию из файла заголовка,
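Reading the cuDNN version from its header could look like the sketch below. The macro names CUDNN_MAJOR, CUDNN_MINOR and CUDNN_PATCHLEVEL are the ones cuDNN headers define; the helper name and the candidate header paths are assumptions for illustration and will differ between installs.

```shell
# Print MAJOR.MINOR.PATCHLEVEL from the cuDNN header file given as $1.
# cudnn_version_from_header is a hypothetical helper name.
cudnn_version_from_header() {
    awk '
        $1 == "#define" && $2 == "CUDNN_MAJOR"      { major = $3 }
        $1 == "#define" && $2 == "CUDNN_MINOR"      { minor = $3 }
        $1 == "#define" && $2 == "CUDNN_PATCHLEVEL" { patch = $3 }
        END { if (major != "") print major "." minor "." patch }
    ' "$1"
}

# Candidate locations are assumptions; newer cuDNN releases keep the macros
# in cudnn_version.h, older ones in cudnn.h, so the newer file is tried first.
for header in /usr/include/cudnn_version.h /usr/include/cudnn.h \
              /usr/local/cuda/include/cudnn_version.h /usr/local/cuda/include/cudnn.h; do
    if [ -f "$header" ]; then
        version=$(cudnn_version_from_header "$header")
        if [ -n "$version" ]; then
            echo "cuDNN $version (from $header)"
            break
        fi
    fi
done
```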

How to check which CUDA version is installed on Linux

By Arnon Shimoni

There are several ways to check which CUDA version is installed on your Linux box.

Check if CUDA is installed and its location with NVCC

Run which nvcc to find if nvcc is installed properly.

You should see something like /usr/bin/nvcc. If that appears, your NVCC is installed in the standard directory.

If you have installed the CUDA toolkit but which nvcc returns no results, you might need to add the directory to your path.

You can check nvcc --version to get the CUDA compiler version, which matches the toolkit version:

This means that we have CUDA version 8.0.61 installed.

Note that if the nvcc version doesn’t match the driver version, you may have multiple copies of nvcc in your PATH. Figure out which one is relevant for you, and modify the environment variables to match, or get rid of the older versions.
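One way to spot several nvcc binaries is to walk PATH yourself. The sketch below is illustrative only; find_all_on_path is a made-up helper name, and it simply reports every executable with the given name in lookup order.

```shell
# List every executable called $1 found in the colon-separated list $2
# (defaults to $PATH), in lookup order. Hypothetical helper name.
find_all_on_path() {
    name=$1
    search_path=${2:-$PATH}
    old_ifs=$IFS
    IFS=:
    for dir in $search_path; do
        [ -n "$dir" ] || continue
        # Report regular executables only, not directories.
        if [ -x "$dir/$name" ] && [ ! -d "$dir/$name" ]; then
            echo "$dir/$name"
        fi
    done
    IFS=$old_ifs
}

# The first line printed is the nvcc the shell will actually run.
find_all_on_path nvcc
```

If more than one line is printed, reorder PATH or remove the older toolkit so that the first entry is the one you intend to use.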

Get CUDA version from CUDA code

When you’re writing your own code, the CUDA version is usually checked with the cudaDriverGetVersion() API call; device capabilities are queried separately, for example with cudaGetDeviceProperties().

The API call gets the CUDA version from the active driver, currently loaded in Linux or Windows.

Identifying which CUDA driver version is installed and active in the kernel

You can also use the kernel to run a CUDA version check:
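On Linux the loaded NVIDIA kernel module reports its version in /proc/driver/nvidia/version. The sketch below reads that file when it exists; the helper name nvrm_driver_version is an assumption, and the parsing assumes the usual "NVRM version: ... Kernel Module  <version> ..." line layout.

```shell
# Pull the driver version out of the "NVRM version:" line read on stdin.
# nvrm_driver_version is a hypothetical helper name.
nvrm_driver_version() {
    grep 'NVRM version' | grep -oE '[0-9]+\.[0-9]+(\.[0-9]+)?' | head -n 1
}

if [ -r /proc/driver/nvidia/version ]; then
    cat /proc/driver/nvidia/version
    echo "driver: $(nvrm_driver_version < /proc/driver/nvidia/version)"
else
    echo "NVIDIA kernel module does not appear to be loaded"
fi
```

Note that this reports the driver version, not the toolkit version.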

Identifying which GPU card is installed and what version

In many cases, I just use nvidia-smi to check the CUDA version on CentOS and Ubuntu.

For me, nvidia-smi is the most straight-forward and simplest way to get a holistic view of everything – both GPU card model and driver version, as well as some additional information like the topology of the cards on the PCIe bus, temperatures, memory utilization, and more.

The driver version is 367.48 as seen below, and the cards are two Tesla K40m.

Troubleshooting

After installing a new version of CUDA, there are some situations that require rebooting the machine to have the driver versions load properly. It is my recommendation to reboot after performing the kernel-headers upgrade/install process, and after installing CUDA – to verify that everything is loaded correctly.

How to Check CUDA Version on Ubuntu 18.04

Here you will learn how to check the CUDA version on Ubuntu 18.04. The three methods are the NVIDIA driver’s nvidia-smi, the CUDA toolkit’s nvcc, and simply checking a file.

Prerequisite

Before we start, you should have installed the NVIDIA driver on your system as well as the NVIDIA CUDA toolkit.

Method 1 — Use nvidia-smi from Nvidia Linux driver

The first way to check the CUDA version is to run nvidia-smi, which comes with your Ubuntu 18.04 NVIDIA driver, specifically the nvidia-utils package. You can install the NVIDIA driver either from the official Ubuntu repository or from the NVIDIA website.

To use nvidia-smi to check your CUDA version on Ubuntu 18.04, run it directly from the command line.

You will see output similar to the screenshot below. The CUDA version appears at the top right of the output. My version is 10.2 here; yours may differ, for example 10.0, 10.1 or the older 9.0.

Besides the CUDA version, nvidia-smi also reports details such as the driver version (440.64), GPU name, GPU fan speed, power consumption and capacity, and memory usage. It also lists the processes that are actually using the GPU.

Here is the full text output:

What is nvidia-smi?

nvidia-smi, also known as NVSMI, is the NVIDIA System Management Interface program. It provides monitoring and management features for NVIDIA Tesla, Quadro, GRID and GeForce GPUs from the Fermi architecture family and newer. GeForce Titan series products are supported for most functions, while only limited detail is provided for the rest of the GeForce range.

NVSMI is a cross-platform program that supports all common Linux distributions supported by the NVIDIA driver, as well as 64-bit versions of Windows starting with Windows Server 2008 R2. Metrics can be read directly from stdout, or saved in CSV and XML formats for scripting purposes.

For more information, check out the nvidia-smi manpage.
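For scripting, the values shown in the nvidia-smi banner can be extracted rather than read off a screenshot. The sketch below is a hedged illustration: smi_cuda_version is a made-up helper, and it assumes the banner contains a "CUDA Version: X.Y" field, which older drivers do not print. Keep in mind that this field is the highest CUDA version the installed driver supports, which is not necessarily the version of the toolkit you have installed.

```shell
# Extract the "CUDA Version: X.Y" field from the nvidia-smi banner on stdin.
# smi_cuda_version is a hypothetical helper name.
smi_cuda_version() {
    sed -n 's/.*CUDA Version: *\([0-9][0-9]*\.[0-9][0-9]*\).*/\1/p' | head -n 1
}

if command -v nvidia-smi >/dev/null 2>&1; then
    # Machine-readable GPU name and driver version, one line per GPU.
    nvidia-smi --query-gpu=name,driver_version --format=csv,noheader \
        || echo "nvidia-smi could not talk to the driver"
    echo "CUDA version supported by the driver: $(nvidia-smi | smi_cuda_version)"
else
    echo "nvidia-smi not found; is the NVIDIA driver installed?"
fi
```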

Method 2 — Use nvcc to check CUDA version on Ubuntu 18.04

If you have installed the CUDA toolkit, either from Ubuntu 18.04’s or NVIDIA’s official Ubuntu 18.04 repository through sudo apt install nvidia-cuda-toolkit, or by downloading it from NVIDIA’s official website and installing it manually, nvcc will be in your path ($PATH). For a repository install its location is /usr/bin/nvcc, which you can confirm by running which nvcc.

To check the CUDA version with nvcc on Ubuntu 18.04, execute nvcc --version.

The output can be seen in the screenshot below. The last line shows your CUDA version. The version here is 10.1; yours may differ, for example 10.0 or 10.2. The full text output follows the screenshot.

What is nvcc?

nvcc is the NVIDIA CUDA Compiler, hence the name. It is the main wrapper for the CUDA compiler suite, and beyond reporting its version you use it to compile and link both host and GPU code.

Check out the manpage of nvcc for more information.

Method 3 — cat /usr/local/cuda/version.txt

Note that this method might not work on Ubuntu 18.04 if you install Nvidia driver and CUDA from Ubuntu 18.04’s own official repository.
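A version-file check that degrades gracefully could look like the sketch below. It assumes the default /usr/local/cuda location; the fallback to version.json is also an assumption, based on some newer toolkit releases shipping that file instead of version.txt, so verify it on your own install. The helper name cuda_version_file is made up for illustration.

```shell
# Print the contents of whichever version file exists under CUDA root $1.
# cuda_version_file is a hypothetical helper name.
cuda_version_file() {
    root=${1:-/usr/local/cuda}
    if [ -f "$root/version.txt" ]; then
        cat "$root/version.txt"
    elif [ -f "$root/version.json" ]; then
        # Assumed fallback for toolkits that no longer ship version.txt.
        cat "$root/version.json"
    else
        echo "no version file under $root" >&2
        return 1
    fi
}

cuda_version_file /usr/local/cuda || true
```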

3 ways to check CUDA version on Ubuntu 18.04

Time Needed : 5 minutes

There are three ways to identify the CUDA version on Ubuntu 18.04.

- The best way is the nvidia-smi command from the NVIDIA driver you may have installed. Simply run nvidia-smi

- A simpler way is possibly to check a file, but this may not work on Ubuntu 18.04. Run cat /usr/local/cuda/version.txt

- Another approach is through the CUDA toolkit command nvcc. Run nvcc --version

Linux check cuda version

The installation instructions for the CUDA Toolkit on Linux.

1. Introduction

CUDA® is a parallel computing platform and programming model invented by NVIDIA®. It enables dramatic increases in computing performance by harnessing the power of the graphics processing unit (GPU).

This guide will show you how to install and check the correct operation of the CUDA development tools.

1.1. System Requirements

The CUDA development environment relies on tight integration with the host development environment, including the host compiler and C runtime libraries, and is therefore only supported on distribution versions that have been qualified for this CUDA Toolkit release.

The following table lists the supported Linux distributions. Please review the footnotes associated with the table.

| Distribution | Kernel (1) | Default GCC | GLIBC | GCC (2,3) | ICC (3) | NVHPC (3) | XLC (3) | CLANG | Arm C/C++ |
|---|---|---|---|---|---|---|---|---|---|
| x86_64 | | | | | | | | | |
| RHEL 8.y (y 4 | | | | | | | | | |
| RHEL 8.4 | 4.18.0-305 | 8.4.1 | 2.28 | 11 | NO | 19.x, 20.x | NO | 11.0 | 21.0 |
| SUSE SLES 15.y (y 4 | | | | | | | | | |
| Ubuntu 18.04.z (z 4 | | | | | | | | | |

(1) … 11.4. Visit https://wiki.ubuntu.com/Kernel/Support for more information.

(2) Note that starting with CUDA 11.0, the minimum recommended GCC compiler is at least GCC 6 due to C++11 requirements in CUDA libraries e.g. cuFFT and CUB. On distributions such as RHEL 7 or CentOS 7 that may use an older GCC toolchain by default, it is recommended to use a newer GCC toolchain with CUDA 11.0. Newer GCC toolchains are available with the Red Hat Developer Toolset. For platforms that ship a compiler version older than GCC 6 by default, linking to static cuBLAS and cuDNN using the default compiler is not supported.

(3) Minor versions of the listed compilers (GCC, ICC, NVHPC and XLC) are supported as host compilers for nvcc.

(4) Only Tesla V100 and T4 GPUs are supported for CUDA 11.4 on Arm64 (aarch64) and POWER9 (ppc64le).

1.2. About This Document

This document is intended for readers familiar with the Linux environment and the compilation of C programs from the command line. You do not need previous experience with CUDA or experience with parallel computation. Note: This guide covers installation only on systems with X Windows installed.

2. Pre-installation Actions

2.1. Verify You Have a CUDA-Capable GPU

To verify that your GPU is CUDA-capable, go to your distribution’s equivalent of System Properties, or, from the command line, enter:

If you do not see any settings, update the PCI hardware database that Linux maintains by entering update-pciids (generally found in /sbin) at the command line and rerun the previous lspci command.
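The lspci check mentioned above can be sketched as follows. The filter helper name is made up for illustration; the underlying idea is simply to search the lspci listing for NVIDIA entries.

```shell
# Keep only NVIDIA lines from lspci-style text on stdin (case-insensitive).
# filter_nvidia is a hypothetical helper name.
filter_nvidia() {
    grep -i nvidia
}

if command -v lspci >/dev/null 2>&1; then
    lspci | filter_nvidia \
        || echo "no NVIDIA device listed; try update-pciids and rerun"
else
    echo "lspci not found (it usually ships in the pciutils package)"
fi
```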

If your graphics card is from NVIDIA and it is listed in https://developer.nvidia.com/cuda-gpus, your GPU is CUDA-capable.

The Release Notes for the CUDA Toolkit also contain a list of supported products.

2.2. Verify You Have a Supported Version of Linux

The CUDA Development Tools are only supported on some specific distributions of Linux. These are listed in the CUDA Toolkit release notes.

To determine which distribution and release number you’re running, type the following at the command line:

You should see output similar to the following, modified for your particular system:

The x86_64 line indicates you are running on a 64-bit system. The remainder gives information about your distribution.
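A quick way to print the architecture and distribution is sketched below. It assumes /etc/os-release is present, which is common on current distributions but not guaranteed, and falls back to the older /etc/*release files otherwise. The function name show_platform is made up for illustration.

```shell
# Print the machine architecture, then the distribution name and version.
# show_platform is a hypothetical helper name.
show_platform() {
    uname -m
    if [ -r /etc/os-release ]; then
        # os-release is a shell-compatible KEY=value file; source it in a
        # subshell so its variables do not leak into the caller.
        ( . /etc/os-release && echo "${PRETTY_NAME:-unknown distribution}" )
    else
        cat /etc/*release 2>/dev/null || echo "unknown distribution"
    fi
}

show_platform
```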

2.3. Verify the System Has gcc Installed

The gcc compiler is required for development using the CUDA Toolkit. It is not required for running CUDA applications. It is generally installed as part of the Linux installation, and in most cases the version of gcc installed with a supported version of Linux will work correctly.

To verify the version of gcc installed on your system, type the following on the command line:

If an error message displays, you need to install the development tools from your Linux distribution or obtain a version of gcc and its accompanying toolchain from the Web.

2.4. Verify the System has the Correct Kernel Headers and Development Packages Installed

The CUDA Driver requires that the kernel headers and development packages for the running version of the kernel be installed at the time of the driver installation, as well as whenever the driver is rebuilt. For example, if your system is running kernel version 3.17.4-301, the 3.17.4-301 kernel headers and development packages must also be installed.

While the Runfile installation performs no package validation, the RPM and Deb installations of the driver will make an attempt to install the kernel header and development packages if no version of these packages is currently installed. However, it will install the latest version of these packages, which may or may not match the version of the kernel your system is using. Therefore, it is best to manually ensure the correct version of the kernel headers and development packages are installed prior to installing the CUDA Drivers, as well as whenever you change the kernel version.
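Whether headers matching the running kernel are present can be checked before installing the driver. The sketch below relies on the common convention that /lib/modules/<release>/build points at the kernel build tree; that layout is an assumption and may not hold on every distribution. The helper name has_kernel_headers is made up for illustration.

```shell
# Succeed if build headers for kernel release $1 exist under modules root $2.
# Defaults: the running kernel and /lib/modules. Hypothetical helper name.
has_kernel_headers() {
    release=${1:-$(uname -r)}
    modules_root=${2:-/lib/modules}
    [ -e "$modules_root/$release/build/include" ]
}

if has_kernel_headers; then
    echo "headers present for $(uname -r)"
else
    echo "no headers found for $(uname -r); install the matching headers and development packages"
fi
```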

RHEL7/CentOS7

Fedora/RHEL8/CentOS8

OpenSUSE/SLES

The kernel development packages for the currently running kernel can be installed with:

This section does not need to be performed for WSL.

Ubuntu

2.5. Install MLNX_OFED

GDS is supported in two different modes: GDS (default/full perf mode) and Compatibility mode. Installation instructions for them differ slightly. Compatibility mode is the only mode that is supported on certain distributions due to software dependency limitations.

Full GDS support is restricted to the following Linux distros:

- Ubuntu 18.04, 20.04

- RHEL 8.3, RHEL 8.4

2.6. Choose an Installation Method

The CUDA Toolkit can be installed using either of two different installation mechanisms: distribution-specific packages (RPM and Deb packages), or a distribution-independent package (runfile packages).

The distribution-independent package has the advantage of working across a wider set of Linux distributions, but does not update the distribution’s native package management system. The distribution-specific packages interface with the distribution’s native package management system. It is recommended to use the distribution-specific packages, where possible.

2.7. Download the NVIDIA CUDA Toolkit

Choose the platform you are using and download the NVIDIA CUDA Toolkit.

The CUDA Toolkit contains the CUDA driver and tools needed to create, build and run a CUDA application as well as libraries, header files, CUDA samples source code, and other resources.

Download Verification

The download can be verified by comparing the MD5 checksum posted at https://developer.download.nvidia.com/compute/cuda/11.4.2/docs/sidebar/md5sum.txt with that of the downloaded file. If either of the checksums differ, the downloaded file is corrupt and needs to be downloaded again.
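The checksum comparison can be scripted. This is a minimal sketch under stated assumptions: verify_md5 is a made-up helper, the checksum list is assumed to use the md5sum "HASH  FILENAME" layout, and the file names in the usage comment are placeholders rather than real download names.

```shell
# Succeed if the MD5 of file $1 matches the entry for its basename in list $2.
# Assumes the list uses md5sum's "HASH  FILENAME" layout. Hypothetical helper.
verify_md5() {
    file=$1
    list=$2
    expected=$(awk -v name="$(basename "$file")" \
        '$2 == name || $2 == "*" name { print $1 }' "$list" | head -n 1)
    actual=$(md5sum "$file" | awk '{ print $1 }')
    [ -n "$expected" ] && [ "$expected" = "$actual" ]
}

# Usage (placeholder file names):
#   verify_md5 ./cuda_installer.run ./md5sum.txt && echo "checksum OK"
```

A missing entry in the list is treated as a failure, so a mistyped file name does not pass silently.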

2.8. Handle Conflicting Installation Methods

Before installing CUDA, any previous installations that could conflict should be uninstalled. This will not affect systems which have not had CUDA installed previously, or systems where the installation method has been preserved (RPM/Deb vs. Runfile). See the following charts for specifics.

| Installing Toolkit Version X.Y | Installed Toolkit Version == X.Y, RPM/Deb | Installed Toolkit Version == X.Y, run | Installed Toolkit Version != X.Y, RPM/Deb | Installed Toolkit Version != X.Y, run |
|---|---|---|---|---|
| RPM/Deb | No Action | Uninstall Run | No Action | No Action |
| run | Uninstall RPM/Deb | Uninstall Run | No Action | No Action |

| Installing Driver Version X.Y | Installed Driver Version == X.Y, RPM/Deb | Installed Driver Version == X.Y, run | Installed Driver Version != X.Y, RPM/Deb | Installed Driver Version != X.Y, run |
|---|---|---|---|---|
| RPM/Deb | No Action | Uninstall Run | No Action | Uninstall Run |
| run | Uninstall RPM/Deb | No Action | Uninstall RPM/Deb | No Action |

3. Package Manager Installation

Basic instructions can be found in the Quick Start Guide. Read on for more detailed instructions.

3.1. Overview

The Package Manager installation interfaces with your system’s package management system. When using RPM or Deb, the downloaded package is a repository package. Such a package only informs the package manager where to find the actual installation packages, but will not install them.

If those packages are available in an online repository, they will be automatically downloaded in a later step. Otherwise, the repository package also installs a local repository containing the installation packages on the system. Whether the repository is available online or installed locally, the installation procedure is identical and made of several steps.

Finally, some helpful package manager capabilities are detailed.

These instructions are for native development only. For cross-platform development, see the CUDA Cross-Platform Environment section.

3.2. RHEL7/CentOS7

- Perform the pre-installation actions.

- Satisfy third-party package dependency

- Satisfy DKMS dependency: The NVIDIA driver RPM packages depend on other external packages, such as DKMS and libvdpau . Those packages are only available on third-party repositories, such as EPEL. Any such third-party repositories must be added to the package manager repository database before installing the NVIDIA driver RPM packages, or missing dependencies will prevent the installation from proceeding.

On RHEL 7 Linux only, execute the following steps to enable optional repositories.

The driver relies on an automatically generated xorg.conf file at /etc/X11/xorg.conf . If a custom-built xorg.conf file is present, this functionality will be disabled and the driver may not work. You can try removing the existing xorg.conf file, or adding the contents of /etc/X11/xorg.conf.d/00-nvidia.conf to the xorg.conf file. The xorg.conf file will most likely need manual tweaking for systems with a non-trivial GPU configuration.

Install repository meta-data

When installing using the local repo:

When installing using the network repo:

The libcuda.so library is installed in the /usr/lib{,64}/nvidia directory. For pre-existing projects which use libcuda.so, it may be useful to add a symbolic link from libcuda.so in the /usr/lib{,64} directory.

3.3. RHEL8/CentOS8

- Perform the pre-installation actions.

- Satisfy third-party package dependency

- Satisfy DKMS dependency: The NVIDIA driver RPM packages depend on other external packages, such as DKMS and libvdpau . Those packages are only available on third-party repositories, such as EPEL. Any such third-party repositories must be added to the package manager repository database before installing the NVIDIA driver RPM packages, or missing dependencies will prevent the installation from proceeding.

On RHEL 8 Linux only, execute the following steps to enable optional repositories.

The driver relies on an automatically generated xorg.conf file at /etc/X11/xorg.conf . If a custom-built xorg.conf file is present, this functionality will be disabled and the driver may not work. You can try removing the existing xorg.conf file, or adding the contents of /etc/X11/xorg.conf.d/00-nvidia.conf to the xorg.conf file. The xorg.conf file will most likely need manual tweaking for systems with a non-trivial GPU configuration.

Install repository meta-data

When installing using the local repo:

The libcuda.so library is installed in the /usr/lib{,64}/nvidia directory. For pre-existing projects which use libcuda.so, it may be useful to add a symbolic link from libcuda.so in the /usr/lib{,64} directory.

3.4. Fedora

- Perform the pre-installation actions.

- Address custom xorg.conf, if applicable

The driver relies on an automatically generated xorg.conf file at /etc/X11/xorg.conf. If a custom-built xorg.conf file is present, this functionality will be disabled and the driver may not work. You can try removing the existing xorg.conf file, or adding the contents of /etc/X11/xorg.conf.d/00-nvidia.conf to the xorg.conf file. The xorg.conf file will most likely need manual tweaking for systems with a non-trivial GPU configuration.

Install repository meta-data

When installing using the local repo:

The libcuda.so library is installed in the /usr/lib{,64}/nvidia directory. For pre-existing projects which use libcuda.so, it may be useful to add a symbolic link from libcuda.so in the /usr/lib{,64} directory.

3.5. SLES

- Perform the pre-installation actions.

- On SLES12 SP4, install the Mesa-libgl-devel Linux packages before proceeding. See Mesa-libGL-devel.

- Install repository meta-data

When installing using the local repo:

When installing using the network repo:

Refresh Zypper repository cache

The CUDA Samples package on SLES does not include dependencies on GL and X11 libraries as these are provided in the SLES SDK. These packages must be installed separately, depending on which samples you want to use.

3.6. OpenSUSE

- Perform the pre-installation actions.

- Install repository meta-data

When installing using the local repo:

When installing using the network repo:

3.7. WSL

These instructions must be used if you are installing in a WSL environment. Do not use the Ubuntu instructions in this case.

When installing using the local repo:

When installing using the network repo:

Pin file to prioritize CUDA repository:

Update the Apt repository cache

3.8. Ubuntu

- Perform the pre-installation actions.

- Install repository meta-data

When installing using the local repo:

When installing using network repo on Ubuntu 20.04/18.04:

When installing using network repo on Ubuntu 16.04:

Pin file to prioritize CUDA repository:

Update the Apt repository cache

3.9. Debian

Install repository meta-data

When installing using the local repo:

When installing using the network repo:

Update the Apt repository cache

3.10. Additional Package Manager Capabilities

Below are some additional capabilities of the package manager that users can take advantage of.

3.10.1. Available Packages

The recommended installation package is the cuda package. This package will install the full set of other CUDA packages required for native development and should cover most scenarios.

The cuda package installs all the available packages for native development. That includes the compiler, the debugger, the profiler, the math libraries, and so on. For x86_64 platforms, this also includes Nsight Eclipse Edition and the visual profilers. It also includes the NVIDIA driver package.

On supported platforms, the cuda-cross-aarch64 and cuda-cross-ppc64el packages install all the packages required for cross-platform development to ARMv8 and POWER8, respectively. The libraries and header files of the target architecture’s display driver package are also installed to enable the cross compilation of driver applications. The cuda-cross-<arch> packages do not install the native display driver.

The packages installed by the packages above can also be installed individually by specifying their names explicitly. The list of available packages can be obtained with:

3.10.2. Package Upgrades

The cuda package points to the latest stable release of the CUDA Toolkit. When a new version is available, use the following commands to upgrade the toolkit and driver:

The cuda-drivers package points to the latest driver release available in the CUDA repository. When a new version is available, use the following commands to upgrade the driver:

Some desktop environments, such as GNOME or KDE, will display a notification alert when new packages are available.

To avoid any automatic upgrade, and lock down the toolkit installation to the X.Y release, install the cuda-X-Y or cuda-cross-<arch>-X-Y package.

Side-by-side installations are supported. For instance, to install both the X.Y CUDA Toolkit and the X.Y+1 CUDA Toolkit, install the cuda-X.Y and cuda-X.Y+1 packages.

3.10.3. Meta Packages

Meta packages are RPM/Deb/Conda packages which contain no (or few) files but have multiple dependencies. They are used to install many CUDA packages when you may not know the details of the packages you want. Below is the list of meta packages.

| Meta Package | Purpose |
|---|---|
| cuda | Installs all CUDA Toolkit and Driver packages. Handles upgrading to the next version of the cuda package when it’s released. |
| cuda-11-4 | Installs all CUDA Toolkit and Driver packages. Remains at version 11.4 until an additional version of CUDA is installed. |
| cuda-toolkit-11-4 | Installs all CUDA Toolkit packages required to develop CUDA applications. Does not include the driver. |
| cuda-tools-11-4 | Installs all CUDA command line and visual tools. |
| cuda-runtime-11-4 | Installs all CUDA Toolkit packages required to run CUDA applications, as well as the Driver packages. |
| cuda-compiler-11-4 | Installs all CUDA compiler packages. |
| cuda-libraries-11-4 | Installs all runtime CUDA Library packages. |
| cuda-libraries-dev-11-4 | Installs all development CUDA Library packages. |
| cuda-drivers | Installs all Driver packages. Handles upgrading to the next version of the Driver packages when they’re released. |

4. Driver Installation

This section is for users who want to install a specific driver version.

For Debian and Ubuntu:

This allows you to get the highest version in the specified branch.

For Fedora and RHEL8:

- Example dkms streams: 450-dkms or latest-dkms

- Example precompiled streams: 450 or latest

To uninstall or change streams on Fedora and RHEL8:

5. Precompiled Streams

Packaging templates and instructions are provided on GitHub to allow you to maintain your own precompiled kernel module packages for custom kernels and derivative Linux distros: NVIDIA/yum-packaging-precompiled-kmod

To use the new driver packages on RHEL 8:

- First, ensure that the Red Hat repositories are enabled:

- Choose one of the four options below depending on the desired driver:

latest always updates to the highest versioned driver (precompiled):

<id> locks the driver updates to the specified driver branch (precompiled):

latest-dkms always updates to the highest versioned driver (non-precompiled):

<id>-dkms locks the driver updates to the specified driver branch (non-precompiled):

5.1. Precompiled Streams Support Matrix

| NVIDIA Driver | Precompiled Stream | Legacy DKMS Stream |

|---|---|---|

| Highest version | latest | latest-dkms |

| Locked at 455.x | 455 | 455-dkms |

| Locked at 450.x | 450 | 450-dkms |

| Locked at 440.x | 440 | 440-dkms |

| Locked at 418.x | 418 | 418-dkms |

5.2. Modularity Profiles

| Stream | Profile | Use Case |

|---|---|---|

| Default | /default | Installs all the driver packages in a stream. |

| Kickstart | /ks | Performs unattended Linux OS installation using a config file. |

| NVSwitch Fabric | /fm | Installs all the driver packages plus components required for bootstrapping an NVSwitch system (including the Fabric Manager and NSCQ telemetry). |

| Source | /src | Source headers for compilation (precompiled streams only). |

6. Kickstart Installation

6.1. RHEL8/CentOS8

- Enable the EPEL repository:

- Enable the CUDA repository:

- In the packages section of the ks.cfg file, make sure you are using the /ks profile and :latest-dkms stream:

- Perform the post-installation actions.

7. Runfile Installation

Basic instructions can be found in the Quick Start Guide. Read on for more detailed instructions.

This section describes the installation and configuration of CUDA when using the standalone installer. The standalone installer is a ".run" file and is completely self-contained.

7.1. Overview

The Runfile installation installs the NVIDIA Driver, CUDA Toolkit, and CUDA Samples via an interactive ncurses-based interface.

The installation steps are listed below. Distribution-specific instructions on disabling the Nouveau drivers as well as steps for verifying device node creation are also provided.

Finally, advanced options for the installer and uninstallation steps are detailed below.

The Runfile installation does not include support for cross-platform development. For cross-platform development, see the CUDA Cross-Platform Environment section.

7.2. Installation

Reboot into text mode (runlevel 3).

This can usually be accomplished by adding the number "3" to the end of the system's kernel boot parameters.

Since the NVIDIA drivers are not yet installed, the text terminals may not display correctly. Temporarily adding "nomodeset" to the system's kernel boot parameters may fix this issue.

Consult your system’s bootloader documentation for information on how to make the above boot parameter changes.

The reboot is required to completely unload the Nouveau drivers and prevent the graphical interface from loading. The CUDA driver cannot be installed while the Nouveau drivers are loaded or while the graphical interface is active.

Verify that the Nouveau drivers are not loaded. If the Nouveau drivers are still loaded, consult your distribution’s documentation to see if further steps are needed to disable Nouveau.
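One way to perform this check is to look for the module in the kernel's module list. The sketch below assumes a Linux system that exposes `/proc/modules` (the same list that `lsmod` prints); the helper name `nouveau_loaded` is hypothetical.

```shell
# Succeed if the nouveau module appears in a module list.
# $1: module list file; defaults to /proc/modules.
nouveau_loaded() {
    grep -q '^nouveau ' "${1:-/proc/modules}"
}
```

For example, `if nouveau_loaded; then echo "Nouveau is still loaded"; fi` reports when further steps to disable Nouveau are needed.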

| Component | Default Installation Directory |

|---|---|

| CUDA Toolkit | /usr/local/cuda-11.4 |

| CUDA Samples | $(HOME)/NVIDIA_CUDA-11.4_Samples |

The /usr/local/cuda symbolic link points to the location where the CUDA Toolkit was installed. This link allows projects to use the latest CUDA Toolkit without any configuration file update.

If installing the driver, the installer will also ask if the OpenGL libraries should be installed. If the GPU used for display is not an NVIDIA GPU, the NVIDIA OpenGL libraries should not be installed. Otherwise, the OpenGL libraries used by the graphics driver of the non-NVIDIA GPU will be overwritten and the GUI will not work. If performing a silent installation, the --no-opengl-libs option should be used to prevent the OpenGL libraries from being installed. See the Advanced Options section for more details.

If the GPU used for display is an NVIDIA GPU, the X server configuration file, /etc/X11/xorg.conf, may need to be modified. In some cases, nvidia-xconfig can be used to automatically generate an xorg.conf file that works for the system. For non-standard systems, such as those with more than one GPU, it is recommended to manually edit the xorg.conf file. Consult the xorg.conf documentation for more information.

Reboot the system to reload the graphical interface.

Verify the device nodes are created properly.

7.3. Disabling Nouveau

To install the Display Driver, the Nouveau drivers must first be disabled. Each distribution of Linux has a different method for disabling Nouveau.

7.3.1. Fedora

- Create a file at /usr/lib/modprobe.d/blacklist-nouveau.conf with the following contents:

- Regenerate the kernel initramfs:

- Run the below command:

- Reboot the system.

7.3.2. RHEL/CentOS

- Create a file at /etc/modprobe.d/blacklist-nouveau.conf with the following contents:

- Regenerate the kernel initramfs:

7.3.3. OpenSUSE

- Create a file at /etc/modprobe.d/blacklist-nouveau.conf with the following contents:

- Regenerate the kernel initrd:

7.3.4. SLES

No actions to disable Nouveau are required as Nouveau is not installed on SLES.

7.3.5. WSL

No actions to disable Nouveau are required as Nouveau is not installed on WSL.

7.3.6. Ubuntu

- Create a file at /etc/modprobe.d/blacklist-nouveau.conf with the following contents:

- Regenerate the kernel initramfs:

7.3.7. Debian

- Create a file at /etc/modprobe.d/blacklist-nouveau.conf with the following contents:

- Regenerate the kernel initramfs:
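The per-distribution steps in this section share the same file contents and differ mainly in the regeneration command. The sketch below assumes the usual two-line blacklist file and the standard regeneration tool of each distribution (dracut, mkinitrd, update-initramfs); the helper names are hypothetical, and the functions only print their output so that nothing is changed until you choose to apply it.

```shell
# Print the body of blacklist-nouveau.conf.
nouveau_blacklist_conf() {
    printf '%s\n' 'blacklist nouveau' 'options nouveau modeset=0'
}

# Print the initramfs/initrd regeneration command for a distribution.
regen_initramfs_cmd() (
    case "$1" in
        fedora|rhel|centos) echo "sudo dracut --force" ;;
        opensuse)           echo "sudo /sbin/mkinitrd" ;;
        ubuntu|debian)      echo "sudo update-initramfs -u" ;;
        *) echo "no regeneration command known for: $1" >&2; exit 1 ;;
    esac
)
```

On Ubuntu, for example, the file could be written with `nouveau_blacklist_conf | sudo tee /etc/modprobe.d/blacklist-nouveau.conf`, followed by the command printed by `regen_initramfs_cmd ubuntu`.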

7.4. Device Node Verification

Check that the device files /dev/nvidia* exist and have the correct (0666) file permissions. These files are used by the CUDA Driver to communicate with the kernel-mode portion of the NVIDIA Driver. Applications that use the NVIDIA driver, such as a CUDA application or the X server (if any), will normally automatically create these files if they are missing using the nvidia-modprobe tool that is bundled with the NVIDIA Driver. However, some systems disallow setuid binaries, so if these files do not exist, you can create them manually by using a startup script such as the one below:
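A minimal sketch of both halves of this step follows: listing device files with the wrong permissions, and generating the `mknod` commands a startup script would run. It assumes GNU `stat`, the NVIDIA character-device major number 195, and minor number 255 for the control node; the helper names are hypothetical, and the GPU count must be supplied by the caller.

```shell
# Print the mknod commands for $1 GPUs (character devices, major number 195),
# plus the control node /dev/nvidiactl (minor number 255).
nvidia_mknod_cmds() (
    count=$1
    i=0
    while [ "$i" -lt "$count" ]; do
        echo "mknod -m 666 /dev/nvidia$i c 195 $i"
        i=$((i + 1))
    done
    echo "mknod -m 666 /dev/nvidiactl c 195 255"
)

# List entries matching $1/nvidia* (default /dev) whose mode is not 666.
bad_nvidia_nodes() (
    dir=${1:-/dev}
    for f in "$dir"/nvidia*; do
        [ -e "$f" ] || continue      # the glob matched nothing
        [ -d "$f" ] && continue      # skip directories
        mode=$(stat -c '%a' "$f")    # GNU coreutils stat
        [ "$mode" = "666" ] || echo "$f $mode"
    done
)
```

Running `bad_nvidia_nodes` with no arguments prints nothing when every `/dev/nvidia*` file has mode 666. The commands printed by `nvidia_mknod_cmds` need root privileges and a loaded `nvidia` kernel module.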

7.5. Advanced Options

| Action | Options Used | Explanation |

|---|---|---|

| Silent Installation | --silent | Required for any silent installation. Performs an installation with no further user-input and minimal command-line output based on the options provided below. Silent installations are useful for scripting the installation of CUDA. Using this option implies acceptance of the EULA. The following flags can be used to customize the actions taken during installation. At least one of --driver, --uninstall, --toolkit, and --samples must be passed if running with non-root permissions. |

| --driver | Install the CUDA Driver. | |

| --toolkit | Install the CUDA Toolkit. | |

| --toolkitpath=<path> | Install the CUDA Toolkit to the <path> directory. If not provided, the default path of /usr/local/cuda-11.4 is used. | |

Install the CUDA Samples to the <path> directory. If not provided, the default path of $(HOME)/NVIDIA_CUDA-11.4_Samples is used.

Install libraries to the <path> directory. If the <path> is not provided, then the default path of your distribution is used. This only applies to the libraries installed outside of the CUDA Toolkit path.

| --extract=<path> | Extracts the following to <path>: the driver runfile and the raw files of the toolkit and samples. This is especially useful when one wants to install the driver using one or more of the command-line options provided by the driver installer which are not exposed in this installer. | |

Use <path> as the kernel source directory when building the NVIDIA kernel module. Required for systems where the kernel source is installed to a non-standard location.

| --tmpdir=<path> | Use <path> for temporary files instead of /tmp. Useful in cases where /tmp cannot be used (doesn't exist, is full, is mounted with 'noexec', etc.). | |
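As an illustration of how the silent-installation flags combine, the sketch below builds a command line from a runfile path and a list of options. The helper name `silent_runfile_cmd` and the runfile path are hypothetical, and the function insists on a component flag in every case, which is stricter than the rule stated in the table (which applies to non-root runs only).

```shell
# Print a silent-install command line for a runfile.
# $1: path to the .run file; remaining args: options such as --toolkit.
silent_runfile_cmd() (
    runfile=$1
    shift
    components=0
    for opt in "$@"; do
        case "$opt" in
            --driver|--toolkit|--samples|--uninstall) components=1 ;;
        esac
    done
    if [ "$components" -eq 0 ]; then
        echo "pass at least one of --driver, --uninstall, --toolkit, --samples" >&2
        exit 1
    fi
    echo "sh $runfile --silent $*"
)
```

For example, `silent_runfile_cmd ./cuda.run --toolkit --toolkitpath=/opt/cuda` prints a toolkit-only installation command that targets a custom directory; `./cuda.run` stands in for the actual runfile name.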

7.6. Uninstallation

8. Conda Installation

8.1. Conda Overview

8.2. Installation

To perform a basic install of all CUDA Toolkit components using Conda, run the following command:

8.3. Uninstallation

To uninstall the CUDA Toolkit using Conda, run the following command:

9. Pip Wheels

NVIDIA provides Python Wheels for installing CUDA through pip, primarily for using CUDA with Python. These packages are intended for runtime use and do not currently include developer tools (these can be installed separately).

Please note that with this installation method, CUDA installation environment is managed via pip and additional care must be taken to set up your host environment to use CUDA outside the pip environment.

The following metapackages will install the latest version of the named component on Linux for the indicated CUDA version. "cu11" should be read as "cuda11".

- nvidia-cuda-runtime-cu11

- nvidia-cuda-cupti-cu11

- nvidia-cuda-nvcc-cu11

- nvidia-nvml-dev-cu11

- nvidia-cuda-nvrtc-cu11

- nvidia-nvtx-cu11

- nvidia-cuda-sanitizer-api-cu11

- nvidia-cublas-cu11

- nvidia-cufft-cu11

- nvidia-curand-cu11

- nvidia-cusolver-cu11

- nvidia-cusparse-cu11

- nvidia-npp-cu11

- nvidia-nvjpeg-cu11

These metapackages install the following packages:

- nvidia-nvml-dev-cu114

- nvidia-cuda-nvcc-cu114

- nvidia-cuda-runtime-cu114

- nvidia-cuda-cupti-cu114

- nvidia-cublas-cu114

- nvidia-cuda-sanitizer-api-cu114

- nvidia-nvtx-cu114

- nvidia-cuda-nvrtc-cu114

- nvidia-npp-cu114

- nvidia-cusparse-cu114

- nvidia-cusolver-cu114

- nvidia-curand-cu114

- nvidia-cufft-cu114

- nvidia-nvjpeg-cu114

10. Tarball and Zip Archive Deliverables

These .tar.xz and .zip archives do not replace existing packages such as .deb, .rpm, runfile, conda, etc. and are not meant for general consumption, as they are not installers. However, this standardized approach will replace existing .txz archives.

For each release, a JSON manifest is provided, such as redistrib_11.4.2.json, which corresponds to the CUDA 11.4.2 release label (CUDA 11.4 update 2). The manifest includes the release date, the name of each component, the license name, and the relative URL and checksums for each platform.

Package maintainers are advised to check the provided LICENSE for each component prior to redistribution. Instructions for developers using CMake and Bazel build systems are provided in the next sections.

10.1. Parsing Redistrib JSON

10.2. Importing into CMake

The recommended module for importing these tarballs into the CMake build system is FindCUDAToolkit (CMake 3.17 and newer).

The path to the extraction location can be specified with the CUDAToolkit_ROOT environment variable.

For older versions of CMake, the ExternalProject_Add module is an alternative method.

Example CMakeLists.txt file:

10.3. Importing into Bazel

The recommended method of importing these tarballs into the Bazel build system is using http_archive and pkg_tar.

11. CUDA Cross-Platform Environment

Cross-platform development is only supported on Ubuntu systems, and is only provided via the Package Manager installation process.

We recommend selecting Ubuntu 18.04 as your cross-platform development environment. This selection helps prevent host/target incompatibilities, such as GCC or GLIBC version mismatches.

11.1. CUDA Cross-Platform Installation

Some of the following steps may have already been performed as part of the native Ubuntu installation. Such steps can safely be skipped.

These steps should be performed on the x86_64 host system, rather than the target system. To install the native CUDA Toolkit on the target system, refer to the native Ubuntu installation section.

- Perform the pre-installation actions.

- Install repository meta-data package with:

Here, the identifier in the package name indicates the operating system, architecture, and/or the version of the package.

Update the Apt repository cache:

11.2. CUDA Cross-Platform Samples

This section describes the options used to build cross-platform samples. TARGET_ARCH=<arch> and TARGET_OS=<os> should be chosen based on the supported targets shown below. TARGET_FS=<path> can be used to point nvcc to libraries and headers used by the sample.

| TARGET ARCH | TARGET OS: linux | TARGET OS: android | TARGET OS: qnx |

|---|---|---|---|

| x86_64 | YES | NO | NO |

| aarch64 | YES | YES | YES |

| sbsa | YES | NO | NO |

TARGET_ARCH

TARGET_OS

TARGET_FS

Cross Compiling to Embedded ARM architectures

Copying Libraries

12. Post-installation Actions

The post-installation actions must be manually performed. These actions are split into mandatory, recommended, and optional sections.

12.1. Mandatory Actions

Some actions must be taken after the installation before the CUDA Toolkit and Driver can be used.

12.1.1. Environment Setup

The PATH variable needs to include /usr/local/cuda-11.4/bin.

To add this path to the PATH variable:

In addition, when using the runfile installation method, the LD_LIBRARY_PATH variable needs to contain /usr/local/cuda-11.4/lib64 on a 64-bit system, or /usr/local/cuda-11.4/lib on a 32-bit system.

To change the environment variables for 64-bit operating systems:

To change the environment variables for 32-bit operating systems:

Note that the above paths change when using a custom install path with the runfile installation method.
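The environment changes described above can be sketched as a small shell function. It assumes a POSIX shell; the helper name `cuda_env` is hypothetical. The `${VAR:+:${VAR}}` expansion appends the previous value only when it is non-empty, which avoids a trailing colon in the variable.

```shell
# Prepend a CUDA install prefix to PATH and LD_LIBRARY_PATH.
# $1: install prefix, e.g. /usr/local/cuda-11.4
# $2: library directory name, lib64 (default) or lib for 32-bit systems
cuda_env() {
    PATH="$1/bin${PATH:+:${PATH}}"
    LD_LIBRARY_PATH="$1/${2:-lib64}${LD_LIBRARY_PATH:+:${LD_LIBRARY_PATH}}"
    export PATH LD_LIBRARY_PATH
}
```

For a default 64-bit installation this would be called as `cuda_env /usr/local/cuda-11.4`, and as `cuda_env /usr/local/cuda-11.4 lib` on a 32-bit system. With a custom install path, pass that path as the first argument instead.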

12.1.2. POWER9 Setup

Because of the addition of new features specific to the NVIDIA POWER9 CUDA driver, there are some additional setup requirements in order for the driver to function properly. These additional steps are not handled by the installation of CUDA packages, and failure to ensure these extra requirements are met will result in a non-functional CUDA driver installation.

Disable a udev rule installed by default in some Linux distributions that causes hot-pluggable memory to be automatically onlined when it is physically probed. This behavior prevents NVIDIA software from bringing NVIDIA device memory online with non-default settings. This udev rule must be disabled in order for the NVIDIA CUDA driver to function properly on POWER9 systems.

You will need to reboot the system to initialize the above changes.

12.2. Recommended Actions

Other actions are recommended to verify the integrity of the installation.

12.2.1. Install Persistence Daemon

NVIDIA is providing a user-space daemon on Linux to support persistence of driver state across CUDA job runs. The daemon approach provides a more elegant and robust solution to this problem than persistence mode. For more details on the NVIDIA Persistence Daemon, see the documentation here.

12.2.2. Install Writable Samples

12.2.3. Verify the Installation

Before continuing, it is important to verify that the CUDA toolkit can find and communicate correctly with the CUDA-capable hardware. To do this, you need to compile and run some of the included sample programs.

12.2.3.1. Verify the Driver Version

If you installed the driver, verify that the correct version of it is loaded. If you did not install the driver, or are using an operating system where the driver is not loaded via a kernel module, such as L4T, skip this step.
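When the driver is loaded as a kernel module, its version string is typically exposed under `/proc/driver/nvidia/version`. A minimal sketch that prints it, assuming that path; the helper name `driver_version_info` is hypothetical:

```shell
# Print the loaded NVIDIA driver version string, or fail if it is unavailable.
# $1: version file; defaults to /proc/driver/nvidia/version.
driver_version_info() (
    file=${1:-/proc/driver/nvidia/version}
    if [ -r "$file" ]; then
        cat "$file"
    else
        echo "NVIDIA kernel module not loaded (cannot read $file)" >&2
        exit 1
    fi
)
```

A non-zero exit status here usually means the kernel module is not loaded, which is expected on systems where the driver is not loaded via a kernel module.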

12.2.3.2. Compiling the Examples

The version of the CUDA Toolkit can be checked by running nvcc -V in a terminal window. The nvcc command runs the compiler driver that compiles CUDA programs. It calls the gcc compiler for C code and the NVIDIA PTX compiler for the CUDA code.
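To use the toolkit version in a script, the release number can be extracted from the `nvcc -V` output. The sketch below assumes the output contains a line of the form "Cuda compilation tools, release X.Y, VX.Y.Z"; the helper name `nvcc_release` is hypothetical.

```shell
# Extract the release number (for example 11.4) from `nvcc -V` output on stdin.
nvcc_release() {
    sed -n 's/.*release \([0-9][0-9.]*\),.*/\1/p'
}
```

It is used as `nvcc -V | nvcc_release`. If nvcc is not on the PATH, the shell reports that the command was not found and nothing is printed.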

The NVIDIA CUDA Toolkit includes sample programs in source form. You should compile them by changing to ~/NVIDIA_CUDA-11.4_Samples and typing make. The resulting binaries will be placed under ~/NVIDIA_CUDA-11.4_Samples/bin.

12.2.3.3. Running the Binaries

After compilation, find and run deviceQuery under ~/NVIDIA_CUDA-11.4_Samples. If the CUDA software is installed and configured correctly, the output for deviceQuery should look similar to that shown in Figure 1.

The exact appearance and the output lines might be different on your system. The important outcomes are that a device was found (the first highlighted line), that the device matches the one on your system (the second highlighted line), and that the test passed (the final highlighted line).

If a CUDA-capable device and the CUDA Driver are installed but deviceQuery reports that no CUDA-capable devices are present, this likely means that the /dev/nvidia* files are missing or have the wrong permissions.

Running the bandwidthTest program ensures that the system and the CUDA-capable device are able to communicate correctly. Its output is shown in Figure 2.

Note that the measurements for your CUDA-capable device description will vary from system to system. The important point is that you obtain measurements, and that the second-to-last line (in Figure 2) confirms that all necessary tests passed.

Should the tests not pass, make sure you have a CUDA-capable NVIDIA GPU on your system and make sure it is properly installed.
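The pass/fail outcome of both samples can also be checked from a script. This sketch assumes that deviceQuery and bandwidthTest print a line containing "Result = PASS" on success; the helper name `sample_passed` is hypothetical.

```shell
# Succeed if the sample output on stdin contains the PASS marker.
sample_passed() {
    grep -q 'Result = PASS'
}
```

For example, `./deviceQuery | sample_passed && echo "deviceQuery passed"`, run from the directory that contains the compiled binary.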

If you run into difficulties with the link step (such as libraries not being found), consult the Linux Release Notes found in the doc folder in the CUDA Samples directory.

12.2.4. Install Nsight Eclipse Plugins

12.3. Optional Actions

Other options are not necessary to use the CUDA Toolkit, but are available to provide additional features.

12.3.1. Install Third-party Libraries

Some CUDA samples use third-party libraries which may not be installed by default on your system. These samples attempt to detect any required libraries when building.

If a library is not detected, it waives itself and warns you which library is missing. To build and run these samples, you must install the missing libraries. These dependencies may be installed if the RPM or Deb cuda-samples-11-4 package is used. In cases where these dependencies are not installed, follow the instructions below.

RHEL7/CentOS7

RHEL8/CentOS8

Fedora

OpenSUSE

Ubuntu

Debian

12.3.2. Install the Source Code for cuda-gdb

The cuda-gdb source must be explicitly selected for installation with the runfile installation method. During the installation, in the component selection page, expand the component "CUDA Tools 11.0" and select cuda-gdb-src for installation. It is unchecked by default.

To obtain a copy of the source code for cuda-gdb using the RPM and Debian installation methods, the cuda-gdb-src package must be installed.

The source code is installed as a tarball in the /usr/local/cuda-11.4/extras directory.

12.3.3. Select the Active Version of CUDA

For applications that rely on the symlinks /usr/local/cuda and /usr/local/cuda-MAJOR, you may wish to change to a different installed version of CUDA using the provided alternatives.

To show the active version of CUDA and all available versions:

To show the active minor version of a given major CUDA release:

To update the active version of CUDA:
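Independently of the alternatives system, the currently active version can be read from the symlink itself. A minimal sketch, assuming GNU `readlink` and the default symlink location; the helper name `active_cuda_dir` is hypothetical:

```shell
# Print the directory a CUDA symlink resolves to.
# $1: symlink path; defaults to /usr/local/cuda.
active_cuda_dir() {
    readlink -f "${1:-/usr/local/cuda}"
}
```

On systems where the packages register the symlinks with the alternatives system under the names `cuda` and `cuda-MAJOR` (an assumption here), `update-alternatives --display cuda` and `update-alternatives --display cuda-11` show the registered versions, and `sudo update-alternatives --config cuda` switches between them.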

13. Advanced Setup

Below is information on some advanced setup scenarios which are not covered in the basic instructions above.

| Scenario | Instructions |

|---|---|

| Install CUDA using the Package Manager installation method without installing the NVIDIA GL libraries. | Fedora |

Install CUDA using the following command:

Follow the instructions here to ensure that Nouveau is disabled.

If performing an upgrade over a previous installation, the NVIDIA kernel module may need to be rebuilt by following the instructions here.

On some system configurations the NVIDIA GL libraries may need to be locked before installation using:

Install CUDA using the following command:

Follow the instructions here to ensure that Nouveau is disabled.

This functionality isn’t supported on Ubuntu. Instead, the driver packages integrate with the Bumblebee framework to provide a solution for users who wish to control what applications the NVIDIA drivers are used for. See Ubuntu’s Bumblebee wiki for more information.

Remove diagnostic packages using the following command:

Follow the instructions here to continue installation as normal.

Remove diagnostic packages using the following command:

Follow the instructions here to continue installation as normal.

Remove diagnostic packages using the following command:

Follow the instructions here to continue installation as normal.

Remove diagnostic packages using the following command:

Follow the instructions here to continue installation as normal.

The Bus ID will resemble "PCI:00:02.0" and can be found by running lspci.

The RPM packages don't support custom install locations through the package managers (Yum and Zypper), but it is possible to install the RPM packages to a custom location using rpm's --relocate parameter:

You will need to install the packages in the correct dependency order; this task is normally taken care of by the package managers. For example, if package "foo" has a dependency on package "bar", you should install package "bar" first, and package "foo" second. You can check the dependencies of an RPM package as follows:

Note that the driver packages cannot be relocated.

The Deb packages do not support custom install locations. It is however possible to extract the contents of the Deb packages and move the files to the desired install location. See the next scenario for more details on extracting Deb packages.

The Runfile can be extracted into the standalone Toolkit, Samples and Driver Runfiles by using the --extract parameter. The Toolkit and Samples standalone Runfiles can be further extracted by running:

The Driver Runfile can be extracted by running:

The RPM packages can be extracted by running:

The Deb packages can be extracted by running:

Modify Ubuntu’s apt package manager to query specific architectures for specific repositories.

This is useful when a foreign architecture has been added, causing "404 Not Found" errors to appear when the repository meta-data is updated.

Each repository you wish to restrict to specific architectures must have its sources.list entry modified. This is done by modifying the /etc/apt/sources.list file and any files containing repositories you wish to restrict under the /etc/apt/sources.list.d/ directory. Normally, it is sufficient to modify only the entries in /etc/apt/sources.list.

For more details, see the sources.list manpage.
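The kind of modification described above can be sketched as a filter that adds an `[arch=...]` option to repository entries. It assumes one-line `deb` and `deb-src` entries and a `sed` that supports `-E`; the helper name `restrict_arch` is hypothetical. Entries that already carry an option block in square brackets are left untouched.

```shell
# Add an [arch=...] restriction to plain deb/deb-src lines read from stdin.
# $1: comma-separated architecture list, e.g. amd64,i386
restrict_arch() {
    sed -E "s/^(deb(-src)?) +([^[])/\1 [arch=$1] \3/"
}
```

For example, `restrict_arch amd64,i386 < /etc/apt/sources.list` prints the modified entries to standard output, so the result can be reviewed before the file is replaced.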

The nvidia.ko kernel module fails to load, saying some symbols are unknown.

Check to see if there are any optionally installable modules that might provide these symbols which are not currently installed.

For the example of the drm_open symbol, check to see if there are any packages which provide drm_open and are not already installed. For instance, on Ubuntu 14.04, the linux-image-extra package provides the DRM kernel module (which provides drm_open). This package is optional even though the kernel headers reflect the availability of DRM regardless of whether this package is installed or not.

The runfile installer fails to extract due to limited space in the TMP directory.

This can occur on systems with limited storage in the TMP directory (usually /tmp), or on systems which use a tmpfs in memory to handle temporary storage. In this case, the --tmpdir command-line option should be used to instruct the runfile to use a directory with sufficient space to extract into. More information on this option can be found here.

Re-enable Wayland after installing the RPM driver on Fedora.

This can occur when installing CUDA after uninstalling a different version. Use the following command before installation:

14. Frequently Asked Questions

How do I install the Toolkit in a different location?

The RPM and Deb packages cannot be installed to a custom install location directly using the package managers. See the "Install CUDA to a specific directory using the Package Manager installation method" scenario in the Advanced Setup section for more information.

Why do I see "nvcc: No such file or directory" when I try to build a CUDA application?

Why do I see "error while loading shared libraries: <lib name>: cannot open shared object file: No such file or directory" when I try to run a CUDA application that uses a CUDA library?

Why do I see multiple "404 Not Found" errors when updating my repository meta-data on Ubuntu?

These errors occur after adding a foreign architecture because apt is attempting to query for each architecture within each repository listed in the system’s sources.list file. Repositories that do not host packages for the newly added architecture will present this error. While noisy, the error itself does no harm. Please see the Advanced Setup section for details on how to modify your sources.list file to prevent these errors.

How can I tell X to ignore a GPU for compute-only use?

To make sure X doesn't use a certain GPU for display, you need to specify which other GPU to use for display. For more information, please refer to the "Use a specific GPU for rendering the display" scenario in the Advanced Setup section.

Why doesn’t the cuda-repo package install the CUDA Toolkit and Drivers?

When using RPM or Deb, the downloaded package is a repository package. Such a package only informs the package manager where to find the actual installation packages, but will not install them.

See the Package Manager Installation section for more details.

How do I get CUDA to work on a laptop with an iGPU and a dGPU running Ubuntu 14.04?

What do I do if the display does not load, or CUDA does not work, after performing a system update?

System updates may include an updated Linux kernel. In many cases, a new Linux kernel will be installed without properly updating the required Linux kernel headers and development packages. To ensure the CUDA driver continues to work when performing a system update, rerun the commands in the Kernel Headers and Development Packages section.

How do I install a CUDA driver with a version less than 367 using a network repo?

To install a CUDA driver at a version earlier than 367 using a network repo, the required packages will need to be explicitly installed at the desired version. For example, to install 352.99, instead of installing the cuda-drivers metapackage at version 352.99, you will need to install all required packages of cuda-drivers at version 352.99.

How do I install an older CUDA version using a network repo?

Depending on your system configuration, you may not be able to install old versions of CUDA using the cuda metapackage. In order to install a specific version of CUDA, you may need to specify all of the packages that would normally be installed by the cuda metapackage at the version you want to install.

If you are using yum to install certain packages at an older version, the dependencies may not resolve as expected. In this case you may need to pass "--setopt=obsoletes=0" to yum to allow an install of packages which are obsoleted at a later version than you are trying to install.

Why does the installation on SUSE install the Mesa-dri-nouveau dependency?

15. Additional Considerations

Now that you have CUDA-capable hardware and the NVIDIA CUDA Toolkit installed, you can examine and enjoy the numerous included programs. To begin using CUDA to accelerate the performance of your own applications, consult the CUDA C++ Programming Guide, located in /usr/local/cuda-11.4/doc.

A number of helpful development tools are included in the CUDA Toolkit to assist you as you develop your CUDA programs, such as NVIDIA® Nsight™ Eclipse Edition, NVIDIA Visual Profiler, CUDA-GDB, and CUDA-MEMCHECK.

For technical support on programming questions, consult and participate in the developer forums at https://developer.nvidia.com/cuda/.

16. Removing CUDA Toolkit and Driver

Follow the below steps to properly uninstall the CUDA Toolkit and NVIDIA Drivers from your system. These steps will ensure that the uninstallation will be clean.

RHEL8/CentOS8

RHEL7/CentOS7

Fedora

OpenSUSE/SLES

Ubuntu and Debian

Notices

Notice

This document is provided for information purposes only and shall not be regarded as a warranty of a certain functionality, condition, or quality of a product. NVIDIA Corporation (“NVIDIA”) makes no representations or warranties, expressed or implied, as to the accuracy or completeness of the information contained in this document and assumes no responsibility for any errors contained herein. NVIDIA shall have no liability for the consequences or use of such information or for any infringement of patents or other rights of third parties that may result from its use. This document is not a commitment to develop, release, or deliver any Material (defined below), code, or functionality.

NVIDIA reserves the right to make corrections, modifications, enhancements, improvements, and any other changes to this document, at any time without notice.

Customer should obtain the latest relevant information before placing orders and should verify that such information is current and complete.

NVIDIA products are sold subject to the NVIDIA standard terms and conditions of sale supplied at the time of order acknowledgement, unless otherwise agreed in an individual sales agreement signed by authorized representatives of NVIDIA and customer (“Terms of Sale”). NVIDIA hereby expressly objects to applying any customer general terms and conditions with regards to the purchase of the NVIDIA product referenced in this document. No contractual obligations are formed either directly or indirectly by this document.

NVIDIA products are not designed, authorized, or warranted to be suitable for use in medical, military, aircraft, space, or life support equipment, nor in applications where failure or malfunction of the NVIDIA product can reasonably be expected to result in personal injury, death, or property or environmental damage. NVIDIA accepts no liability for inclusion and/or use of NVIDIA products in such equipment or applications and therefore such inclusion and/or use is at customer’s own risk.

NVIDIA makes no representation or warranty that products based on this document will be suitable for any specified use. Testing of all parameters of each product is not necessarily performed by NVIDIA. It is customer’s sole responsibility to evaluate and determine the applicability of any information contained in this document, ensure the product is suitable and fit for the application planned by customer, and perform the necessary testing for the application in order to avoid a default of the application or the product. Weaknesses in customer’s product designs may affect the quality and reliability of the NVIDIA product and may result in additional or different conditions and/or requirements beyond those contained in this document. NVIDIA accepts no liability related to any default, damage, costs, or problem which may be based on or attributable to: (i) the use of the NVIDIA product in any manner that is contrary to this document or (ii) customer product designs.

No license, either expressed or implied, is granted under any NVIDIA patent right, copyright, or other NVIDIA intellectual property right under this document. Information published by NVIDIA regarding third-party products or services does not constitute a license from NVIDIA to use such products or services or a warranty or endorsement thereof. Use of such information may require a license from a third party under the patents or other intellectual property rights of the third party, or a license from NVIDIA under the patents or other intellectual property rights of NVIDIA.

Reproduction of information in this document is permissible only if approved in advance by NVIDIA in writing, reproduced without alteration and in full compliance with all applicable export laws and regulations, and accompanied by all associated conditions, limitations, and notices.

THIS DOCUMENT AND ALL NVIDIA DESIGN SPECIFICATIONS, REFERENCE BOARDS, FILES, DRAWINGS, DIAGNOSTICS, LISTS, AND OTHER DOCUMENTS (TOGETHER AND SEPARATELY, “MATERIALS”) ARE BEING PROVIDED “AS IS.” NVIDIA MAKES NO WARRANTIES, EXPRESSED, IMPLIED, STATUTORY, OR OTHERWISE WITH RESPECT TO THE MATERIALS, AND EXPRESSLY DISCLAIMS ALL IMPLIED WARRANTIES OF NONINFRINGEMENT, MERCHANTABILITY, AND FITNESS FOR A PARTICULAR PURPOSE. TO THE EXTENT NOT PROHIBITED BY LAW, IN NO EVENT WILL NVIDIA BE LIABLE FOR ANY DAMAGES, INCLUDING WITHOUT LIMITATION ANY DIRECT, INDIRECT, SPECIAL, INCIDENTAL, PUNITIVE, OR CONSEQUENTIAL DAMAGES, HOWEVER CAUSED AND REGARDLESS OF THE THEORY OF LIABILITY, ARISING OUT OF ANY USE OF THIS DOCUMENT, EVEN IF NVIDIA HAS BEEN ADVISED OF THE POSSIBILITY OF SUCH DAMAGES. Notwithstanding any damages that customer might incur for any reason whatsoever, NVIDIA’s aggregate and cumulative liability towards customer for the products described herein shall be limited in accordance with the Terms of Sale for the product.
