- NBD (Network Block Devices) as a SAN

- Re: NBD (Network Block Devices) as a SAN

- linux NBD: Introduction to Linux Network Block Devices

- Getting started with NBD

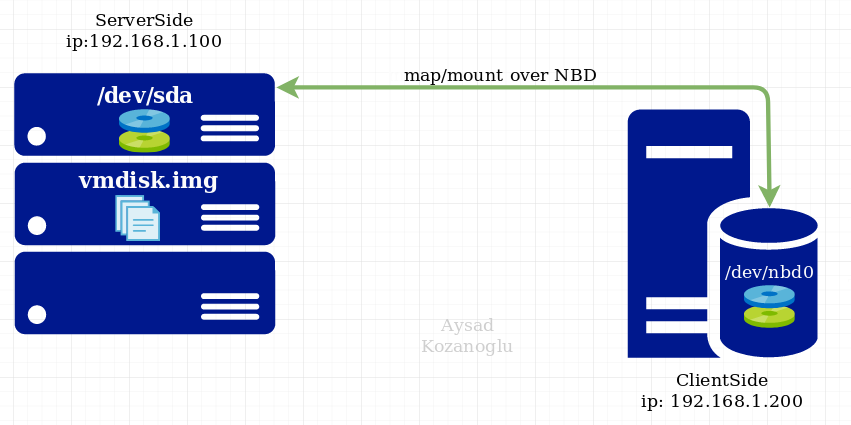

- ServerSide

- ClientSide

- Important

- Network Block Device

- Introduction

- Getting the source

- Contributing

- Support

- Security issues

- Links

- NBD on other platforms

- Linux NBD Tutorial: Network Block Device Jumpstart Guide

- I. NBD Server Side Configuration Steps

- 1. Install nbd-server

- 2. Create a file content

- 3. Start the NBD Server Daemon

- II. NBD Client Side Configuration Steps

- 1. Install nbd-client

- 2. Using nbd-client create a filesystem on client machine

- III. Mount the File System on Client-side

- IV. Get Client Changes on Server-side

- V. Access Remote Storage as Local Swap Memory Area

- Configuration On Server side:

- 1. Create a file

- 2. Instead of creating an ext2 filesystem in the file, format it as swap using mkswap

- 3. Run the server daemon

- Configuration On Client side:

- 1. Get the filesystem as swap area

- 2. Cross check using “cat /proc/swaps”. This will list the swap areas

NBD (Network Block Devices) as a SAN

There is this nice thing called nbd.

If a shared nbd disk is used from a single client, there are no problems.

But here is my question: when an nbd disk is attached to several clients, can all of them use it for reading and writing, for example by putting the GFS file system on it?

And a related question: if that is possible, will it still work if GFS is placed on an LVM volume built from several nbd devices?

Re: NBD (Network Block Devices) as a SAN

No.

Yes, but then why nbd?

Yes, but damn, the stability is doubtful.

Re: NBD (Network Block Devices) as a SAN

> Yes, but then why nbd?

I don't quite follow. nbd will hand out the disks to the clients, and GFS will make sure such a setup works correctly. The simplest approach, of course, is to attach all the nbd devices on one server and serve the files from it over NFS, but then the network traffic doubles.

> Yes, but damn, the stability is doubtful.

The stability of what? I already run nbd+lvm, but only in exclusive mode, without sharing it among several clients.

Re: NBD (Network Block Devices) as a SAN

> I don't quite follow. nbd will hand out the disks to the clients, and GFS will make sure such a setup works correctly. The simplest approach, of course, is to attach all the nbd devices on one server and serve the files from it over NFS, but then the network traffic doubles.

But GFS can export the file system to clients by its own means. Or is it a matter of principle that your storage must not run GFS itself?

> The stability of what? I already run nbd+lvm, but only in exclusive mode, without sharing it among several clients.

Of anything layered on top of nbd. It is shaky on its own, and God forbid you add another abstraction layer on top: if it fails, who is going to pick up the pieces?

Re: NBD (Network Block Devices) as a SAN

> But GFS can export the file system to clients by its own means. Or is it a matter of principle that your storage must not run GFS itself?

Hm. When I studied it, I didn't notice any such capability.

Here is the situation. I have a bunch of servers with disks on my LAN, and I need a single storage pool made up of those disks. Can that be done with GFS?

Re: NBD (Network Block Devices) as a SAN

It doesn't matter what exports the block device over the network, be it NBD, ENBD, or iSCSI. What matters is that the protocol is designed for concurrent use (no extra caching, no delayed writes).

But using NBD is strongly discouraged: it handles network problems very poorly. Try ENBD at the very least, or better still iSCSI.

On top of such a block device, as far as I understand, you can run essentially any cluster file system: GFS, OCFS (preferably OCFS2), and there were a couple of others.

I know for certain of iSCSI+OCFS2 deployments; they are described in detail on Oracle-related sites.

And strictly speaking this is no longer a SAN, it's a NAS. 🙂

Naturally, such a setup cannot be used for really serious workloads: the latency of network block devices is one or even two orders of magnitude higher than that of ordinary SCSI or Fibre Channel devices (but the price is one or even two orders of magnitude lower).

Re: NBD (Network Block Devices) as a SAN

I got fed up fighting with iSCSI. 🙁 For now ENBD looks like the most realistic option to me.

Re: NBD (Network Block Devices) as a SAN

> When an nbd disk is attached to several clients, can all of them

> use it for reading and writing, for example by putting

> the GFS file system on it?

I may be late, but the answer is YES. GFS even has a fenced implementation for nbd.

However, it is recommended to use another implementation rather than nbd; read

http://www.redhat.com/docs/manuals/csgfs/browse/rh-cs-en/index.html

Re: NBD (Network Block Devices) as a SAN

Re: NBD (Network Block Devices) as a SAN

Are you using a hardware or a software iSCSI target? In this setup I'm mostly interested in the CPU load. Something tells me that without special hardware it will be very high.

Re: NBD (Network Block Devices) as a SAN

I tried compiling enbd for my kernel. Kernel panic when the module loads. To hell with that kind of reliability!

Re: NBD (Network Block Devices) as a SAN

I'm now testing how nbd devices behave when the link drops. For outages of a few minutes, operations on that partition on the client freeze, and top shows I/O wait close to 100%. When the link comes back, after a short while everything unfreezes and carries on correctly. If the outage lasts longer, then yes, there is a problem: LVM fails and the file system gets mounted read-only. I don't think this is fatal enough to give up on nbd altogether.

Re: NBD (Network Block Devices) as a SAN

I use two QLogic 4010c iSCSI adapters; before that I experimented with a software one.

In terms of bugginess the difference is like night and day 🙂

Re: NBD (Network Block Devices) as a SAN

About the load: I didn't notice any slowdowns with the software implementation;

the CPU is a stock P4 3.00GHz with 2 MB of cache.

The main bug: pulling out the network cable caused a kernel panic.

Re: NBD (Network Block Devices) as a SAN

You need gnbd or iSCSI. Forget the rest, since bugginess and crookedness are assured and guaranteed by the manufacturer.

Source

linux NBD: Introduction to Linux Network Block Devices

Sep 16, 2019 · 3 min read

Network block devices (NBD) give access to a remote storage device that does not physically reside in the local machine. With a network block device, the remote storage can be used on the local machine in three ways: as swap space, as a file system, or as a raw device.

NBD presents a remote resource as a local resource to the client. The NBD driver makes a remote resource look like a local device in Linux, allowing a cheap and safe real-time mirror to be constructed.

You don't need to compare NFS with NBD, because they are entirely different approaches to network storage.

Why NBD? Use-case scenario

For example, maybe you want to format the device. Or you want to modify or copy entire partitions. These tasks would be impossible to accomplish with a network file system, because they would require you to have the file system unmounted in order to perform them — and if you unmount your network file system, it’s no longer connected.

But if your remote storage device is mounted as a block device (NBD), you can do anything to it that you’d be able to do to a local block device.

In other words, with NBD, you can take a device like /dev/sda on one machine and make it available to another machine as if it were a local device there connected via a SCSI or SATA cable, even though in actuality it is connected over the network.

Sometimes you may want to perform operations on a storage device at a lower level than a network file system (NFS) would support.

You can also boot a complete OS from NBD over the network (e.g. scaleway.com users boot from NBD every time).

Getting started with NBD

I used Debian for the example below (it should also work on derivatives such as Ubuntu).

NBD works according to a client/server architecture. You use the server to make a volume available as a network block device from a host, then run the client to connect to it from another host.

ServerSide

apt-get install nbd-server

modprobe nbd

After installation you can export a device or a file.

Export device

Example: export a server-side disk device such as /dev/sda on port 9999

Export img file

Example: export a server-side .img file such as vmdisk.img on port 9998

Exporting an image over NBD can be useful if you’re working with virtual disk images, for example.
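The two exports described above can be sketched roughly as follows. This is a minimal sketch, assuming the classic `nbd-server <port> <file>` command-line form; newer nbd-server releases favour named exports in a configuration file, so check the man page of your version. The image path `/srv/vmdisk.img` and the `run` helper are hypothetical, and the script only prints the commands unless `DRY_RUN=0` is set.

```shell
#!/bin/sh
# Dry run by default: commands are printed, not executed.
# Set DRY_RUN=0 and run as root to execute them for real.
run() {
    if [ "${DRY_RUN:-1}" = "1" ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

# Export a whole disk on TCP port 9999 (port and device from the example above).
export_device() {
    run nbd-server 9999 /dev/sda
}

# Export a disk image on TCP port 9998.
# The default path is an assumption; an absolute path is used on purpose.
export_image() {
    run nbd-server 9998 "${1:-/srv/vmdisk.img}"
}

export_device
export_image
```

Exporting a disk that the server itself has mounted read/write is risky, so in practice the exported device should be one the server is not using.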

ClientSide

On the client machine that we want to use to connect to the NBD export we just created, we first need to install the NBD client package with:

apt-get install nbd-client

modprobe nbd

Map the remote NBD exports to the local devices /dev/nbd0 and /dev/nbd1:

nbd-client 192.168.1.100 9999 /dev/nbd0

nbd-client 192.168.1.100 9998 /dev/nbd1

What can you do on the client side with the attached NBD device?

You can now use /dev/nbd0 on the client like a local disk and run the usual tools against it.

Example: you can format it

mkfs.ext4 /dev/nbd0

Other client-side usage scenarios:

+ You could resize partitions

+ You could create a filesystem (as on a local disk)

+ You could create btrfs/zfs/glusterfs storage pools

As long as the export that the client is using at /dev/nbd0 is mapped to a device like /dev/sda on the server, operations from the client on /dev/nbd0 will take effect on the server just as they would if you were running them locally on the server with /dev/sda as the target.
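Put together, a client session might look like the sketch below. The server address, port and device come from the commands above; the mount point `/mnt/nbd` and the `run` helper are assumptions for illustration. It prints the commands by default, because `mkfs.ext4` destroys whatever is on the exported device.

```shell
#!/bin/sh
# Dry run by default: commands are printed, not executed.
# Set DRY_RUN=0 and run as root to execute them for real.
run() {
    if [ "${DRY_RUN:-1}" = "1" ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

SERVER=192.168.1.100   # NBD server from the example above
PORT=9999              # port of the /dev/sda export
DEVICE=/dev/nbd0       # local NBD device node
MOUNTPOINT=/mnt/nbd    # hypothetical mount point

# Attach the export, create a file system on it and mount it.
attach_and_mount() {
    run modprobe nbd
    run nbd-client "$SERVER" "$PORT" "$DEVICE"
    run mkfs.ext4 "$DEVICE"        # wipes the remote device
    run mkdir -p "$MOUNTPOINT"
    run mount "$DEVICE" "$MOUNTPOINT"
}

# Unmount and disconnect again.
detach() {
    run umount "$MOUNTPOINT"
    run nbd-client -d "$DEVICE"
}

attach_and_mount
detach
```

Unmounting before `nbd-client -d` matters: disconnecting a device that still has a mounted file system leaves that file system without its backing store.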

Important

Use this scenario only in protected local networks!

Never use NBD on public networks (e.g. over the internet) without configuring security first. (The same rule applies to NFS!)

All of the above said, NBD is a cool tool. It lets you do things that would otherwise not be possible. It doesn’t get much press these days (which is not surprising because NBD dates all the way back to the late 1990s, and hasn’t ever been important commercially), but it may be just the tool you need to solve some of the strange challenges that can arise in the life of a sysadmin.

Source

Network Block Device

Introduction

What is it: With this compiled into your kernel, Linux can use a remote server as one of its block devices. Every time the client computer wants to read /dev/nbd0, it will send a request to the server via TCP, which will reply with the data requested. This can be used for stations with low disk space (or even diskless — if you use an initrd) to borrow disk space from other computers. Unlike NFS, it is possible to put any file system on it. But (also unlike NFS), if someone has mounted NBD read/write, you must assure that no one else will have it mounted.

Current state: It currently works. Network block device is pretty stable. It was originally thought that it is impossible to swap over TCP; this turned out not to be true. However, to avoid deadlocks, you will need at least Linux 3.6.

It is possible to use NBD as the block device counterpart of FUSE, to implement the block device’s reads and writes in user space. To make this easier, recent versions of NBD (3.10 and above) implement NBD over a Unix Domain Socket, too.

If you’re interested in the technical side of how NBD works, please see the doc/ directory in the source code, especially the protocol documentation.

Comments and help about the tools are always appreciated; if you’re willing to help out, please subscribe to the mailinglist, and share your thoughts.

Getting the source

Contributing

If you wish to contribute, you may join us on the mailinglist. We do accept pull requests for minor bug fixes, but if you want to make large changes, prior discussion on the mailinglist is recommended.

Support

If you want to discuss bugs in the nbd userland utilities or wish to ask a question on how to use them, use the mailinglist. For a more structured method of filing problem reports, the GitHub issue tracker can be used.

Security issues

If you think you found a security problem with NBD, please contact the mailinglist. Do not just file an issue for this (although you may do so also if you prefer).

Links

- Sourceforge project pages

- In September 2006, Linux Magazine featured an article about network block devices (not just NBD, but other implementations as well). This is available for download from their website.

- Another writeup was done by that other Linux Magazine in October 2008. It’s available straight from their website.

NBD on other platforms

Besides Linux, the authors have knowledge of NBD client support on the following platforms:

- The Hurd: an nbd translator was written by Roland McGrath on September 28th, 2001

- Plan 9: Christoph Lohmann informed us on June 1st, 2006, that it can mount NBD exports with some extra code

- FreeBSD: a GEOM Gate device driver and client is available on github.

- Windows: since 2020, the Ceph for Windows installer includes a full NBD client implementation for Windows. This implementation is written for, and intended mostly to be used to allow Windows to access Rados Block Devices (RBDs) on Ceph, but should be compatible with other NBD implementations and can be used separately, if desired.

The server should theoretically work on every POSIX-compliant platform out there (provided glib is supported on that platform). If it doesn’t, that’s a bug and I want to know about it; if you encounter such problems, please send a mail to the mailinglist.

Source

Linux NBD Tutorial: Network Block Device Jumpstart Guide

Network block devices give access to a remote storage device that does not physically reside in the local machine. With a network block device, the remote storage can be used on the local machine in three ways: as swap space, as a file system, or as a raw device.

NBD presents a remote resource as a local resource to the client. The NBD driver makes a remote resource look like a local device in Linux, allowing a cheap and safe real-time mirror to be constructed.

You can also use the storage of a remote machine as the swap area of the local machine using NBD.

To set up an NBD-based file system, we need nbd-server (on the remote machine, where the content is created) and nbd-client (on the local machine, from which the remote storage device is accessed).

I. NBD Server Side Configuration Steps

1. Install nbd-server

If you are working on a Debian flavor, get nbd-server through apt-get.

2. Create a file content

Create a file using dd as shown below.

Use mke2fs to create a file system in /mnt/dhini.

When you create the ext2 file system in /mnt/dhini, you may get a warning message as shown below. Press y to continue.

3. Start the NBD Server Daemon

You can also run nbd-server on multiple ports as shown below.

You can also specify a timeout of N idle seconds for the server.
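The server-side steps 1 to 3 can be sketched as below. The file `/mnt/dhini` and port 1043 are the ones used in this tutorial; the file size (`SIZE_KB`) and the `run` helper are assumptions, and the multi-port and timeout variants are left out because their exact options are not given here. The script prints the commands by default.

```shell
#!/bin/sh
# Dry run by default: commands are printed, not executed.
# Set DRY_RUN=0 and run as root to execute them for real.
run() {
    if [ "${DRY_RUN:-1}" = "1" ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

IMAGE=/mnt/dhini   # backing file used in this tutorial
PORT=1043          # port used in this tutorial
SIZE_KB=36000      # assumed size in KiB; choose what you need

server_setup() {
    run apt-get install nbd-server
    # Create an empty backing file of SIZE_KB kibibytes.
    run dd if=/dev/zero of="$IMAGE" bs=1024 count="$SIZE_KB"
    # Put an ext2 file system into the file; mke2fs may ask for
    # confirmation because the target is not a block device.
    run mke2fs "$IMAGE"
    # Export the file on the chosen port.
    run nbd-server "$PORT" "$IMAGE"
}

server_setup
```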

II. NBD Client Side Configuration Steps

Perform the following steps on the client machine, from which you want to access the remote storage device.

1. Install nbd-client

If you are working on a Debian flavor, get nbd-client through apt-get.

2. Using nbd-client create a filesystem on client machine

Once it gets to 100%, you will get the block device on your local machine on the same path.

If you face any issues during the NBD configuration process, you may also configure nbd-server and nbd-client through dpkg-reconfigure.

III. Mount the File System on Client-side

Once mounted, you should see a “lost+found” directory. From this point on you can access the files and directories normally.
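A sketch of the client-side steps up to this point follows. The server address 192.168.1.11 and port 1043 are taken from the commands quoted in the reader comments at the end of this article. The device node `/dev/nbd0`, the mount point `/mnt/remote_fs` and the `run` helper are assumptions. No file system is created here, since the server already formatted the exported file. The script prints the commands by default.

```shell
#!/bin/sh
# Dry run by default: commands are printed, not executed.
# Set DRY_RUN=0 and run as root to execute them for real.
run() {
    if [ "${DRY_RUN:-1}" = "1" ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

SERVER=192.168.1.11         # server address quoted in the comments
PORT=1043                   # must match the port nbd-server listens on
DEVICE=/dev/nbd0            # assumed local NBD device node
MOUNTPOINT=/mnt/remote_fs   # hypothetical mount point

client_mount() {
    run apt-get install nbd-client
    run modprobe nbd
    # Attach the remote export to the local device node.
    run nbd-client "$SERVER" "$PORT" "$DEVICE"
    run mkdir -p "$MOUNTPOINT"
    # The export already contains an ext2 file system, so just mount it.
    run mount "$DEVICE" "$MOUNTPOINT"
}

client_mount
```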

IV. Get Client Changes on Server-side

Mount the nbd filesystem locally

If you do not use the “-o loop” option, you may get the following error:

When you list /client_changes, you will see all the files and directories created by the client.
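On the server this could look like the sketch below. It assumes the client has already unmounted and disconnected, so that the file is not being modified from two sides at once. `/mnt/dhini` and `/client_changes` are the names used in this tutorial; the `run` helper is an assumption, and the script prints the commands by default.

```shell
#!/bin/sh
# Dry run by default: commands are printed, not executed.
# Set DRY_RUN=0 and run as root to execute them for real.
run() {
    if [ "${DRY_RUN:-1}" = "1" ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

server_inspect() {
    run mkdir -p /client_changes
    # /mnt/dhini is a regular file, so it is mounted through a loop device.
    run mount -o loop /mnt/dhini /client_changes
    run ls -l /client_changes
}

server_inspect
```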

V. Access Remote Storage as Local Swap Memory Area

Configuration On Server side:

1. Create a file

2. Instead of creating an ext2 filesystem in the file, format it as swap using mkswap

3. Run the server daemon

Configuration On Client side:

1. Get the filesystem as swap area

2. Cross check using “cat /proc/swaps”. This will list the swap areas
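The swap setup on both sides can be sketched as below. The address, port and the `-swap` option follow the commands quoted in the reader comments. The device node `/dev/nbd0`, the file size and the `run` helper are assumptions. The script prints the commands by default.

```shell
#!/bin/sh
# Dry run by default: commands are printed, not executed.
# Set DRY_RUN=0 and run as root to execute them for real.
run() {
    if [ "${DRY_RUN:-1}" = "1" ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

IMAGE=/mnt/dhini      # backing file on the server
PORT=1043
SERVER=192.168.1.11
DEVICE=/dev/nbd0      # assumed NBD device node on the client
SIZE_KB=16000         # assumed swap size in KiB

# Run on the server: create the file, format it as swap, export it.
swap_server() {
    run dd if=/dev/zero of="$IMAGE" bs=1024 count="$SIZE_KB"
    run mkswap "$IMAGE"
    run nbd-server "$PORT" "$IMAGE"
}

# Run on the client: attach the export in swap mode and enable it.
swap_client() {
    run nbd-client "$SERVER" "$PORT" "$DEVICE" -swap
    run swapon "$DEVICE"
    run cat /proc/swaps
}

swap_server
swap_client
```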

This article was written by Dhineshkumar Manikannan. He is working in bk Systems (p) Ltd, and interested in contributing to the open source. The Geek Stuff welcomes your tips and guest articles


> # nbd-server 1043 /mnt/dhini

Server uses port 1043

> # nbd-client 192.168.1.11 1077 -swap /mnt/remote

but client 1077?

And I would say the mounted swap partition is /mnt/remote and not /mnt/dhini

> /mnt/dhini partition 15992 0 -4

Plus you forget to mention that using swap over a network is a theoretical possibility but hardly recommendable for practical situations.

(1) you’ve got /mnt/remote in the nbd-client command, but the output says /mnt/dhini. Typo?

(2) conventionally, /mnt/whatever are used as the mount points (the right side of a mount command), not as loop devices (left side). That’s only a convention but common enough that newbies who see your post *may* get a little confused by your use of /mnt/dhini etc on the *left* side

Thanks for pointing out the issues. I’ve updated the following.

1. The port on the nbd-client should be 1043

2. the mount point should be /mnt/dhini

Hi Ramesh, before the “III. Mount the File System on Client-side” section you wrote dbkg-reconfigure, which is not correct; it should be dpkg-reconfigure.

Thanks for pointing out the typo. It is fixed now.

Why use nbd over iSCSI?

I still like NFS for shares across multiple clients.

Swap over a network?

Generally a bad idea, but cloud computing uses it.

Source