
Thread: Forcing an IGMP join


Forcing an IGMP join

Is there a command-line way to get a Linux/Ubuntu client PC to send IGMP joins and leaves, *without* having to install a full-on client such as VLC?

In case this sounds like a strange request: I’m trying to perform some specific testing that needs m/casts to be routed by the L2/3 switch, but I’m not interested in actually using the m/cast streams, and the device I need to use, while running Linux, is quite... er... «computationally challenged» (a headless embedded system).

Re: Forcing an IGMP join

I bet you could write one if you knew a little bit of C.

Unfortunately, that’s the best I can do.

Re: Forcing an IGMP join

I’d thought about that, but I was hoping to avoid it if there was a command-line tool readily available. Not just through laziness, but also because it adds another set of tasks before I can get on with the m/cast tests I actually want to do.

And it’s been a while (years) since I did much with C.

Re: Forcing an IGMP join

There are also libraries for all the major scripting languages (Perl, Python, Ruby, TCL, etc.) which allow mangling of IP packets. If you know one of these, it might be easier to write one that way.
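To give an idea of the "craft it yourself" route, here is a minimal Python sketch. It builds the 8-byte IGMPv2 Membership Report and Leave Group messages defined in RFC 2236 and computes their RFC 1071 Internet checksum. The function names are hypothetical. Sending requires root, because it uses a raw socket. The sketch also omits the IP Router Alert option that RFC 2236 calls for, so some switches may not treat the packets as genuine reports.

```python
import socket
import struct

IGMP_V2_REPORT = 0x16  # IGMPv2 Membership Report (RFC 2236)
IGMP_LEAVE = 0x17      # IGMPv2 Leave Group


def internet_checksum(data: bytes) -> int:
    """RFC 1071 ones'-complement checksum over 16-bit big-endian words."""
    if len(data) % 2:
        data += b"\x00"
    total = sum(struct.unpack(f"!{len(data) // 2}H", data))
    while total >> 16:  # fold carries back into the low 16 bits
        total = (total & 0xFFFF) + (total >> 16)
    return ~total & 0xFFFF


def build_igmpv2(msg_type: int, group: str) -> bytes:
    """Build type, max resp time (0), checksum and group address."""
    group_bytes = socket.inet_aton(group)
    unsummed = struct.pack("!BBH4s", msg_type, 0, 0, group_bytes)
    csum = internet_checksum(unsummed)
    return struct.pack("!BBH4s", msg_type, 0, csum, group_bytes)


def send_igmpv2(msg_type: int, group: str) -> None:
    """Send through a raw IGMP socket (root only). Reports go to the group
    itself, leaves go to all-routers 224.0.0.2. No Router Alert option."""
    dst = group if msg_type == IGMP_V2_REPORT else "224.0.0.2"
    with socket.socket(socket.AF_INET, socket.SOCK_RAW, socket.IPPROTO_IGMP) as s:
        s.setsockopt(socket.IPPROTO_IP, socket.IP_MULTICAST_TTL, 1)
        s.sendto(build_igmpv2(msg_type, group), (dst, 0))


if __name__ == "__main__":
    print(build_igmpv2(IGMP_V2_REPORT, "239.1.1.1").hex())  # 1600f9fcef010101
```

A quick sanity check: running `internet_checksum` over a finished message returns 0. That is how a receiver validates the checksum.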

Re: Forcing an IGMP join

I might look in that direction. It’s been a while since I did Perl too, but a good excuse to learn a bit of Ruby sounds fun!

However, having re-read the m/cast docs and the IGMP spec (I was on a long journey!), I think it might be more difficult, so the longer C route might be the way to go, even with the re-learning curve.

Thanks all for the suggestions.

Re: Forcing an IGMP join


Just a guess on this, but have you looked at nemesis-igmp?
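A simpler alternative avoids crafting packets altogether. On Linux, the kernel sends an IGMP membership report itself whenever any socket joins a group with `IP_ADD_MEMBERSHIP`. It keeps answering the router's queries while the membership is held, and it signals the leave when the membership is dropped. Below is a minimal Python sketch of that approach, assuming Python 3 is available on the device. `hold_membership` and its 30-second default are hypothetical.

```python
import socket
import sys
import time


def build_mreq(group: str, iface_ip: str = "0.0.0.0") -> bytes:
    """Pack a struct ip_mreq: group address followed by local interface address.
    0.0.0.0 lets the kernel choose the interface from the routing table."""
    return socket.inet_aton(group) + socket.inet_aton(iface_ip)


def hold_membership(group: str, seconds: float = 30.0, iface_ip: str = "0.0.0.0") -> None:
    """Join `group` (the kernel sends the IGMP report), wait, then leave."""
    mreq = build_mreq(group, iface_ip)
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM, socket.IPPROTO_UDP) as s:
        # The socket is never bound to a port, so it does not receive the
        # stream itself; it only holds the group membership.
        s.setsockopt(socket.IPPROTO_IP, socket.IP_ADD_MEMBERSHIP, mreq)
        time.sleep(seconds)
        s.setsockopt(socket.IPPROTO_IP, socket.IP_DROP_MEMBERSHIP, mreq)


if __name__ == "__main__":
    hold_membership(sys.argv[1], float(sys.argv[2]) if len(sys.argv) > 2 else 30.0)
```

For example, running the script as `python3 igmp_hold.py 239.1.1.1 60` (the file name is hypothetical) holds the membership for a minute. The kernel's IGMP version setting for the interface decides whether it sends IGMPv2 or IGMPv3 messages.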

Source

Net-Labs.in


Multicast routing in Linux

Nowadays there is most likely no point in routing (replicating) multicast traffic with software routers, whether the Linux kernel or something else. The reason is simple: even cheap switches can do L2/L3 multicast. They have limits on the number of groups/routes, but the work is still done in ASICs. However, some tasks may not be solvable in "hardware", either because the software does not support them or because the ASIC's capabilities make them impossible to implement. Examples include renumbering (changing the destination IP), introducing random delays (reordering), sending multicast into a tunnel, and source redundancy based on arbitrary criteria.

Why might you need to renumber a multicast group? First, the IP-to-MAC mapping is not unique: for example, groups 238.1.1.1 and 239.1.1.1 map to the same MAC address, which can cause problems in an L2 segment. Such a collision is unlikely, but it is possible when you take multicast from different external sources (in principle, the IP addresses may even match exactly). Second, you may want to hide your source by changing both the destination and the source address. The source's AS number may be "hidden" in the destination (RFC 3180), and the source address can also give the origin away if it is not a private one. Third, renumbering a group may be needed to avoid a collision on equipment that is running out of TCAM for multicast, as a temporary solution until the equipment is replaced or the network design changes. Finally, you may decide to make TV channels redundant by receiving them from different sources: you feed two different groups with identical content into the server and take a single resulting (working) group out of it.
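Both the MAC collision and the RFC 3180 point can be checked with a little arithmetic. The Python sketch below has two helpers, and their names are hypothetical. `multicast_mac` applies the RFC 1112 mapping, in which only the low 23 bits of the group address survive into the MAC. `glop_asn` reads the 16-bit AS number that GLOP addressing (RFC 3180) places in the second and third octets of a 233.0.0.0/8 group.

```python
import ipaddress
from typing import Optional


def multicast_mac(group: str) -> str:
    """01:00:5e followed by the low-order 23 bits of the group (RFC 1112).
    The top 5 variable bits are lost, so 32 groups share each MAC."""
    addr = ipaddress.IPv4Address(group)
    if not addr.is_multicast:
        raise ValueError(f"{group} is not a multicast address")
    low23 = int(addr) & 0x7FFFFF
    octets = [0x01, 0x00, 0x5E, low23 >> 16, (low23 >> 8) & 0xFF, low23 & 0xFF]
    return ":".join(f"{o:02x}" for o in octets)


def glop_asn(group: str) -> Optional[int]:
    """AS number encoded in a GLOP group 233.X.Y.Z (RFC 3180), else None."""
    first, x, y, _ = ipaddress.IPv4Address(group).packed
    return x * 256 + y if first == 233 else None
```

With these helpers, 238.1.1.1 and 239.1.1.1 both yield `01:00:5e:01:01:01`. If 233.251.240.1, the group used later in this note, is read as a GLOP address, it decodes to AS 64496.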

Introducing random delay (reordering) or loss may be needed to test how your STBs or software players behave. For example, you may want to see what the picture looks like if, somewhere in the network, multicast passes through per-packet load balancing. You may also want to know whether the player freezes when packets are lost, and whether it produces unpleasant squeaking sounds or just blocky artifacts and silence.

This note was written using Ubuntu Linux 14.04 LTS (kernel 3.13.0-24-generic), but it applies to most modern distributions. You do, however, need to check that the kernel supports IPv4 multicast:
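The exact check did not survive in this copy of the note. Below is a minimal Python sketch of one way to do it. It assumes the relevant options are `CONFIG_IP_MULTICAST`, `CONFIG_IP_MROUTE` (multicast routing) and `CONFIG_IP_PIMSM_V2` (only needed for pimd). It also assumes the running kernel's config is available as `/boot/config-$(uname -r)` or `/proc/config.gz`. The function names are hypothetical.

```python
import gzip
import os
import platform

# Assumed option set: multicast, multicast routing, PIM-SM v2 (for pimd).
MCAST_OPTIONS = ("CONFIG_IP_MULTICAST", "CONFIG_IP_MROUTE", "CONFIG_IP_PIMSM_V2")


def parse_kconfig(text: str) -> dict:
    """Return {option: value} for every 'CONFIG_X=value' line; comments are skipped."""
    opts = {}
    for line in text.splitlines():
        line = line.strip()
        if line.startswith("CONFIG_") and "=" in line:
            key, _, value = line.partition("=")
            opts[key] = value
    return opts


def missing_multicast_options(text: str) -> list:
    """Options from MCAST_OPTIONS that are not built into the kernel (=y)."""
    opts = parse_kconfig(text)
    return [name for name in MCAST_OPTIONS if opts.get(name) != "y"]


def read_running_config() -> str:
    """Read /boot/config-$(uname -r), falling back to /proc/config.gz."""
    path = f"/boot/config-{platform.release()}"
    if os.path.exists(path):
        with open(path) as f:
            return f.read()
    with gzip.open("/proc/config.gz", "rt") as f:
        return f.read()


if __name__ == "__main__":
    missing = missing_multicast_options(read_running_config())
    print("missing: " + ", ".join(missing) if missing else "all multicast options enabled")
```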

Unlike unicast routing, the ip utility is not enough even for static (S, G) routing, let alone dynamic routing. Building the multicast replication table requires "third-party" userspace tools:

- smcroute for static routes;
- igmpproxy for building the table from IGMP requests on downlink interfaces (a route is added to the kernel when an IGMP request arrives from "below");
- pimd for PIM-SM signaling (this requires PIMSM_V2 support in the kernel).

Static multicast routing

Consider the following task: replicate multicast traffic (*, 233.251.240.1) from interface eth1 to interfaces eth2 and eth3, where the group arrives on eth1 only after an IGMPv2 request.

The interface configuration looks like this:

Unfortunately, smcroute in Ubuntu 14.04 does not support the (*, G) form, so it has to be built from source:

Before starting routing (installing the routes into the kernel), RPF must be disabled on eth1, because the task assumes that the source IP of the multicast group can be arbitrary:

In addition, we explicitly set the IGMP operating mode on eth1:

Now on to configuring smcroute (/etc/smcroute.conf):

(a newline is required at the end of the configuration)

The first line joins the group via IGMP (pushes this information into the kernel); the second is the multicast route itself.
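The configuration file itself did not survive in this copy. As a hedged illustration, the Python helper below renders those two lines for the task above. It assumes the smcroute 2.x syntax, `mgroup from IFACE group GROUP` and `mroute from IFACE group GROUP to IFACE...`, in which omitting `source` produces a (*,G) entry. The function name is hypothetical.

```python
def smcroute_conf(inbound: str, group: str, outbound: list) -> str:
    """Render a (*,G) smcroute.conf for one group.

    Line 1 (mgroup) makes the kernel join the group via IGMP on `inbound`;
    line 2 (mroute) replicates the group's traffic to every `outbound` interface.
    No 'source' keyword is given, which is what makes these (*,G) entries.
    """
    lines = [
        f"mgroup from {inbound} group {group}",
        f"mroute from {inbound} group {group} to {' '.join(outbound)}",
    ]
    # smcroute expects the file to end with a newline.
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # Writing /etc/smcroute.conf needs root.
    with open("/etc/smcroute.conf", "w") as f:
        f.write(smcroute_conf("eth1", "233.251.240.1", ["eth2", "eth3"]))
```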

Start smcroute:

(logs are written to syslog)

Check the IGMP table:

That's right: 01F0FBE9 is our group 233.251.240.1.
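The hex value looks reversed because `/proc/net/igmp` prints the address as a 32-bit integer in host byte order. On a little-endian machine such as x86, the octets of 233.251.240.1 (E9 FB F0 01) therefore come out as 01F0FBE9. Below is a small Python sketch for decoding the file. The helper names are hypothetical, and the parser assumes the column layout of current kernels: a device line, then tab-indented group lines whose first field is the group.

```python
import socket
import struct


def proc_igmp_group(hex_word: str) -> str:
    """Convert a group column from /proc/net/igmp to dotted-quad notation.

    The kernel prints the network-order address as a host-order 32-bit
    integer, so packing it back in native byte order restores the octets.
    """
    return socket.inet_ntoa(struct.pack("=I", int(hex_word, 16)))


def joined_groups(proc_text: str) -> dict:
    """Map each device in /proc/net/igmp to the list of groups joined on it."""
    groups, dev = {}, None
    for line in proc_text.splitlines()[1:]:  # skip the header line
        if not line.strip():
            continue
        if not line.startswith("\t"):
            # Device line, e.g. "3\teth1      :     2      V2"
            dev = line.split("\t", 1)[1].split(":", 1)[0].strip()
            groups[dev] = []
        elif dev is not None:
            # Group line: the first field is the hex group address.
            groups[dev].append(proc_igmp_group(line.split()[0]))
    return groups


if __name__ == "__main__":
    with open("/proc/net/igmp") as f:
        print(joined_groups(f.read()))
```

On a little-endian host, `proc_igmp_group("01F0FBE9")` returns `"233.251.240.1"`.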

Before multicast starts flowing into eth1, the kernel's routing table looks like this:

Then it takes this form:

Check that replication works:

Renumbering multicast groups

To change the IP address of a multicast group, we use DNAT. For example, suppose we want to change 233.251.240.1 to 233.251.250.5:

/etc/smcroute.conf then looks like this:

(the route uses the new address because DNAT is performed in PREROUTING)

Next, flush conntrack (with "conntrack -F") and check:

As you might guess, to replace the source IP as well, we use SNAT:

Flush conntrack again ("conntrack -F") and check:

Miscellaneous

With iptables and tc you can do more with the traffic, for example introduce delays and losses. If you wish, you can also set up TV channel redundancy by switching the broadcast source with scripts (smcroute can be controlled "from outside"), using arbitrary criteria to choose the best source. Similar commercial solutions for operators exist and cost a lot of money.

Source

Protocol Independent Multicast — PIM

Protocol Independent Multicast (PIM) is a multicast control plane protocol that advertises multicast sources and receivers over a routed layer 3 network. Layer 3 multicast relies on PIM to advertise information about multicast capable routers, and the location of multicast senders and receivers. For this reason, multicast cannot be sent through a routed network without PIM.

PIM has two modes of operation: Sparse Mode (PIM-SM) and Dense Mode (PIM-DM).

Cumulus Linux supports only PIM Sparse Mode.

PIM Overview

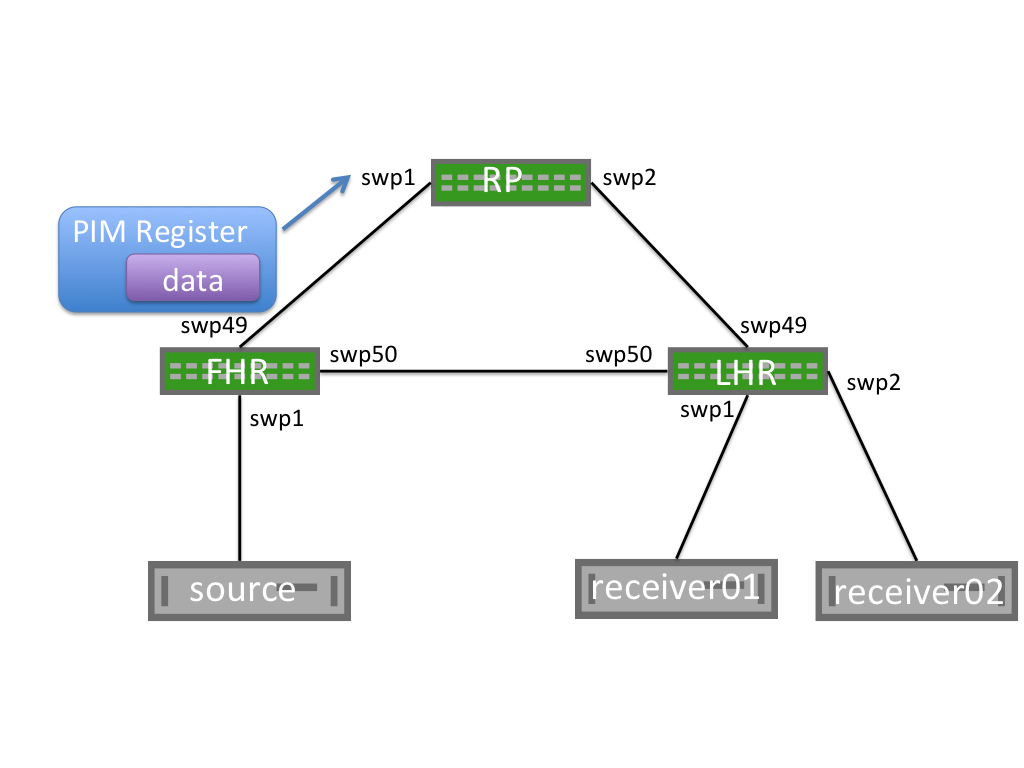

The following illustration shows a PIM configuration. The table below the illustration describes the network elements.

Network Element

- zebra does not resolve the next hop for the RP through the default route. To prevent multicast forwarding from failing, either provide a specific route to the RP or run the following command so that zebra can resolve the next hop for the RP through the default route:

Note: PIM join/prune messages are sent to PIM neighbors on individual interfaces. Join/prune messages are never unicast.

This PIM join/prune is for group 239.1.1.9, with 1 join and 0 prunes for the group.

Join/prunes for multiple groups can exist in a single packet.

The following shows an S,G Prune example:

PIM Neighbors

When PIM is configured on an interface, PIM Hello messages are sent to the link-local multicast group 224.0.0.13. Any other router configured with PIM on the segment that hears the PIM Hello messages builds a PIM neighbor with the sending device.

PIM neighbors are stateless. No confirmation of neighbor relationship is exchanged between PIM endpoints.

Configure PIM

To configure PIM, run the following commands:

Configure the PIM interfaces:

You must enable PIM on all interfaces facing multicast sources or multicast receivers, as well as on the interface where the RP address is configured.

In Cumulus Linux 4.0 and later, the sm keyword is no longer required. In Cumulus Linux releases 3.7 and earlier, the correct command is net add interface swp1 pim sm.

Enable IGMP on all interfaces with hosts attached. IGMP version 3 is the default. Only specify the version if you exclusively want to use IGMP version 2. SSM requires the use of IGMP version 3.

You must configure IGMP on all interfaces where multicast receivers exist.

For ASM, configure a group mapping for a static RP:

Each PIM-enabled device must configure a static RP-to-group mapping, and all PIM-SM-enabled devices must have the same RP-to-group mapping configuration.

IP PIM RP group ranges can overlap. Cumulus Linux performs a longest prefix match (LPM) to determine the RP. In the following example, if the group is in 224.10.2.5, RP 192.168.0.2 is selected. If the group is in 224.10.15, RP 192.168.0.1 is selected:
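The longest-prefix-match rule can be sketched in a few lines of Python. The two mappings below are assumptions chosen to be consistent with the example in the text: 192.168.0.1 for 224.10.0.0/16, and 192.168.0.2 for the more specific 224.10.2.0/24. `select_rp` is a hypothetical helper, not a Cumulus or FRR API.

```python
import ipaddress
from typing import Dict, Optional

# Assumed RP-to-group mappings, consistent with the example in the text.
RP_MAP = {
    "224.10.0.0/16": "192.168.0.1",
    "224.10.2.0/24": "192.168.0.2",
}


def select_rp(group: str, rp_map: Dict[str, str] = RP_MAP) -> Optional[str]:
    """Return the RP whose group range is the longest prefix containing `group`."""
    addr = ipaddress.IPv4Address(group)
    best_len, best_rp = -1, None
    for prefix, rp in rp_map.items():
        net = ipaddress.IPv4Network(prefix)
        if addr in net and net.prefixlen > best_len:
            best_len, best_rp = net.prefixlen, rp
    return best_rp
```

Here `select_rp("224.10.2.5")` returns 192.168.0.2. A group elsewhere in 224.10.0.0/16, such as 224.10.15.1, falls back to 192.168.0.1, and a group outside every range gets no RP.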

To configure PIM directly in FRR with vtysh instead: PIM is included in the FRRouting package and depends on Zebra for proper operation. PIM also requires unicast routing to be configured and operational for RPF operations, so you must configure a routing protocol or static routes.

Edit the /etc/frr/daemons file and add pimd=yes to the end of the file:

Restart FRR with this command:

Restarting FRR restarts all the routing protocol daemons that are enabled and running.

Restarting FRR impacts all routing protocols.

In the vtysh shell, run the following commands to configure the PIM interfaces:

PIM must be enabled on all interfaces facing multicast sources or multicast receivers, as well as on the interface where the RP address is configured.

In Cumulus Linux 4.0 and later, the sm keyword is no longer required.

Enable IGMP on all interfaces with hosts attached. IGMP version 3 is the default. Only specify the version if you exclusively want to use IGMP version 2.

You must configure IGMP on all interfaces where multicast receivers exist.

For ASM, configure a group mapping for a static RP:

Each PIM-enabled device must configure a static RP-to-group mapping, and all PIM-SM-enabled devices must have the same RP-to-group mapping configuration.

IP PIM RP group ranges can overlap. Cumulus Linux performs a longest prefix match (LPM) to determine the RP. In the following example, if the group is in 224.10.2.5, RP 192.168.0.2 is selected. If the group is in 224.10.15, RP 192.168.0.1 is selected:

PIM Sparse Mode (PIM-SM)

PIM Sparse Mode (PIM-SM) is a pull multicast distribution method; multicast traffic is only sent through the network if receivers explicitly ask for it. When a receiver pulls multicast traffic, the network must be periodically notified that the receiver wants to continue the multicast stream.

This behavior is in contrast to PIM Dense Mode (PIM-DM), where traffic is flooded, and the network must be periodically notified that the receiver wants to stop receiving the multicast stream.

PIM-SM has three configuration options:

- Any-source Multicast (ASM) is the traditional and most commonly deployed PIM implementation. ASM relies on rendezvous points to connect multicast senders and receivers. The senders and receivers then dynamically determine the shortest path through the network between them, so that multicast traffic is sent efficiently.

- Bidirectional PIM (BiDir) forwards all traffic through the multicast rendezvous point (RP) instead of tracking multicast source IPs, allowing for greater scale while resulting in inefficient forwarding of network traffic.

- Source Specific Multicast (SSM) requires multicast receivers to know exactly from which source they want to receive multicast traffic instead of relying on multicast rendezvous points. SSM requires the use of IGMPv3 on the multicast clients.

Cumulus Linux only supports ASM and SSM. PIM BiDir is not currently supported.

Any-source Multicast Routing (ASM)

Multicast routing behaves differently depending on whether the source is sending before receivers request the multicast stream, or if a receiver tries to join a stream before there are any sources.

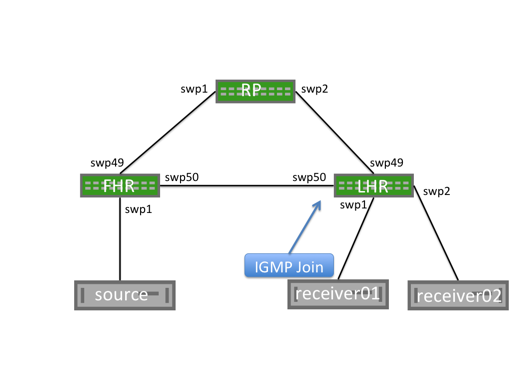

Receiver Joins First

When a receiver joins a group, an IGMP membership join message is sent to the IGMPv3 multicast group, 224.0.0.22. The PIM multicast router for the segment that is listening to the IGMPv3 group receives the IGMP membership join message and becomes an LHR for this group.

This creates a (*,G) mroute with an OIF of the interface on which the IGMP Membership Report is received and an IIF of the RPF interface for the RP.

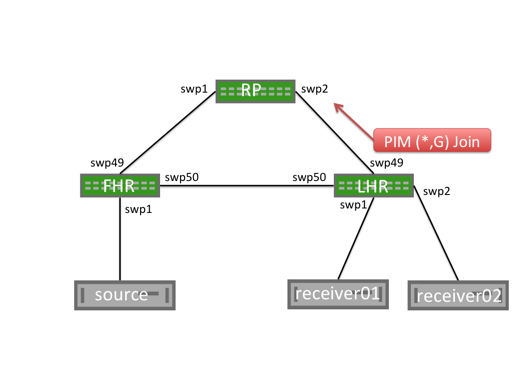

The LHR generates a PIM (*,G) join message and sends it from the interface towards the RP. Each multicast router between the LHR and the RP builds a (*,G) mroute with the OIF being the interface on which the PIM join message is received and an Incoming Interface of the reverse path forwarding interface for the RP.

When the RP receives the (*,G) Join message, it does not send any additional PIM join messages. The RP maintains a (*,G) state as long as the receiver wants to receive the multicast group.

Unlike multicast receivers, multicast sources do not send IGMP (or PIM) messages to the FHR. A multicast source begins sending, and the FHR receives the traffic and builds both a (*,G) and an (S,G) mroute. The FHR then begins the PIM register process.

PIM Register Process

When a first hop router (FHR) receives a multicast data packet from a source, the FHR does not know if there are any interested multicast receivers in the network. The FHR encapsulates the data packet in a unicast PIM register message. This packet is sourced from the FHR and destined to the RP address. The RP builds an (S,G) mroute, decapsulates the multicast packet, and forwards it along the (*,G) tree.

While the decapsulated multicast packet travels down the (*,G) tree towards the interested receivers, the RP sends a PIM (S,G) join towards the FHR. This builds an (S,G) state on each multicast router between the RP and FHR.

When the FHR receives a PIM (S,G) join, it continues encapsulating and sending PIM register messages, but also makes a copy of the packet and sends it along the (S,G) mroute.

The RP then receives the multicast packet along the (S,G) tree and sends a PIM register stop to the FHR to end the register process.


PIM SPT Switchover

When the LHR receives the first multicast packet, it sends a PIM (S,G) join towards the FHR to efficiently forward traffic through the network. This builds the shortest path tree (SPT), or the tree that is the shortest path to the source. When the traffic arrives over the SPT, a PIM (S,G) RPT prune is sent up the shared tree towards the RP. This removes multicast traffic from the shared tree; multicast data is only sent over the SPT.

You can configure SPT switchover on a per-group basis, allowing for some groups to never switch to a shortest path tree; this is also called SPT infinity. The LHR now sends both (*,G) joins and (S,G) RPT prune messages towards the RP.

To configure a group to never follow the SPT, create the necessary prefix-lists, then configure SPT switchover for the spt-range prefix-list:

To view the configured prefix-list, run the vtysh show ip mroute command or the NCLU net show mroute command. The following output shows that 235.0.0.0 is configured for SPT switchover, identified by pimreg.

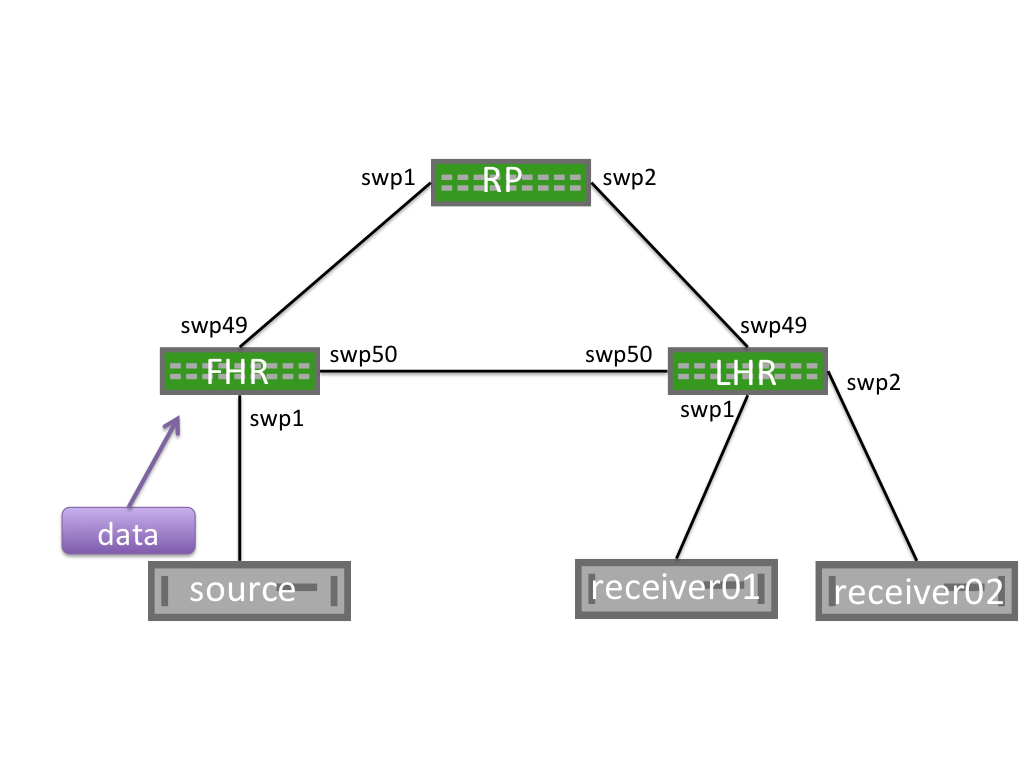

Sender Starts Before Receivers Join

A multicast sender can send multicast data without any additional IGMP or PIM signaling. When the FHR receives the multicast traffic, it encapsulates it and sends a PIM register to the rendezvous point (RP).

When the RP receives the PIM register, it builds an (S,G) mroute; however, there is no (*,G) mroute and no interested receivers.

The RP drops the PIM register message and immediately sends a PIM register stop message to the FHR.

Receiving a PIM register stop without any associated PIM joins leaves the FHR without any outgoing interfaces. The FHR drops this multicast traffic until a PIM join is received.

PIM register messages are sourced from the interface that receives the multicast traffic and are destined to the RP address. The PIM register is not sourced from the interface towards the RP.

PIM Null-Register

The FHR periodically sends PIM null register messages to notify the RP that multicast traffic is still flowing when the RP has no receiver, or when the RP is not on the SPT. The FHR sends a PIM register with the Null-Register flag set, but without any data. This special PIM register notifies the RP that a multicast source is still sending, in case any new receivers come online.

After receiving a PIM Null-Register, the RP immediately sends a PIM register stop to acknowledge the reception of the PIM null register message.

Source Specific Multicast Mode (SSM)

The source-specific multicast method uses prefix lists to configure a receiver to only allow traffic to a multicast address from a single source. This removes the need for an RP, as the source must be known before traffic can be accepted. There is no additional PIM configuration required to enable SSM beyond enabling PIM and IGMPv3 on the relevant interfaces.

Receiver Joins First

When a receiver sends an IGMPv3 Join with the source defined, the LHR builds an S,G entry and sends a PIM S,G join to the PIM neighbor closest to the source, according to the routing table.

The full path between LHR and FHR contains an S,G state, although no multicast traffic is flowing. Periodic IGMPv3 joins between the receiver and LHR, as well as PIM S,G joins between PIM neighbors, maintain this state until the receiver leaves.

When the sender begins, traffic immediately flows over the pre-built SPT from the sender to the receiver.

Sender Starts Before Receivers Join

In SSM, when a sender begins sending, the FHR does not have any existing mroutes. The traffic is dropped and nothing further happens until a receiver joins. SSM does not rely on an RP; there is no PIM Register process.

Differences between Source Specific Multicast and Any Source Multicast

SSM differs from ASM multicast in the following ways:

- An RP is not configured or used. SSM does not require an RP since receivers always know the addresses of the senders.

- There is no *,G PIM Join message. The multicast sender is always known so the PIM Join messages used in SSM are always S,G Join messages.

- There is no Shared Tree or *,G tree. The PIM join message is always sent towards the source, building the SPT along the way. There is no shared tree or *,G state.

- IGMPv3 is required. ASM allows receivers to specify only the group they want to join, without knowledge of the sender. This can be done in both IGMPv2 and IGMPv3. Only IGMPv3 supports requesting a specific source for a multicast group (that is, sending an S,G IGMP join).

- No PIM Register process or SPT Switchover. Without a shared tree or RP, there is no need for the PIM register process. S,G joins are sent directly towards the FHR.

PIM Active-Active with MLAG

For a multicast sender or receiver to be supported over a dual-attached MLAG bond, you must configure pim active-active.

To configure PIM active-active with MLAG, run the following commands:

On the VLAN interface where multicast sources or receivers exist, configure pim active-active and igmp. For example:

Enabling PIM active-active automatically enables PIM on that interface.

Confirm PIM active-active is configured with the net show pim mlag summary command:

Configure ip pim active-active on the VLAN interface where the multicast source or receiver exists along with the required ip igmp command.

Enabling PIM active-active automatically enables PIM on that interface.

Confirm that PIM active-active is configured with the show ip pim mlag summary command:

Multicast Sender

When a multicast sender is attached to an MLAG bond, the sender hashes the outbound multicast traffic over a single member of the bond. Traffic is received on one of the MLAG enabled switches. Regardless of which switch receives the traffic, it is forwarded over the MLAG peer link to the other MLAG-enabled switch, because the peerlink is always considered a multicast router port and will always receive the multicast stream.

Traffic from multicast sources attached to an MLAG bond is always sent over the MLAG peerlink. Be sure to size the peerlink appropriately to accommodate this traffic.

The PIM DR for the VLAN where the source resides is responsible for sending the PIM register towards the RP. The PIM DR is the PIM speaker with the highest IP address on the segment. After the PIM register process is complete and traffic is flowing along the Shortest Path Tree (SPT), either MLAG switch will forward traffic towards the receivers.
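The text describes the DR as the speaker with the highest IP address. RFC 7761 (section 4.3.2) adds that the DR priority carried in Hello messages is compared first, with the highest IP address as the tie-breaker. If any neighbor omits the priority option, the comparison falls back to IP address alone. Below is a minimal Python sketch of the common case where every neighbor advertises a priority. `elect_dr` and the `(ip, priority)` tuples are hypothetical.

```python
import ipaddress
from typing import Iterable, Tuple


def elect_dr(speakers: Iterable[Tuple[str, int]]) -> str:
    """Return the DR address among (ip, dr_priority) pairs.

    Highest DR priority wins; ties go to the highest IP address, compared
    numerically (10.1.2.12 beats 10.1.2.9, unlike a string comparison).
    Assumes every speaker advertised a priority in its Hello messages.
    """
    return max(speakers, key=lambda s: (s[1], ipaddress.IPv4Address(s[0])))[0]
```

With equal priorities, `[("10.1.2.11", 1), ("10.1.2.12", 1)]` elects 10.1.2.12, which matches the Vlan12 DR shown in the interface output later in this section.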

Examples are provided below that show the flow of traffic between server02 and server03:

- Step 1: server02 sends traffic to leaf02. leaf02 forwards traffic to leaf01 because the peerlink is a multicast router port. leaf01 also receives a PIM register from leaf02. leaf02 syncs the *,G table from leaf01 as an MLAG active-active peer.

- Step 2: leaf02 has the *,G route indicating that traffic is to be forwarded toward spine01. Either leaf02 or leaf01 sends this traffic directly based on which MLAG switch receives it from the attached source. In this case, leaf02 receives the traffic on the MLAG bond and forwards it directly upstream.


To show the PIM DR, run the NCLU net show pim interface command or the vtysh show ip pim interface command. The following example shows that in Vlan12 the DR is 10.1.2.12.

PIM joins sent towards the source can be ECMP load shared by upstream PIM neighbors (spine01 and spine02 in the example above). Either MLAG member can receive the PIM join and forward traffic, regardless of DR status.

Multicast Receiver

A dual-attached multicast receiver sends an IGMP join on the attached VLAN. The specific interface that is used is determined based on the host. The IGMP join is received on one of the MLAG switches, and the IGMP join is added to the IGMP Join table and layer 2 MDB table. The layer 2 MDB table, like the unicast MAC address table, is synced via MLAG control messages over the peerlink. This allows both MLAG switches to program IGMP and MDB table forwarding information.

Both switches send *,G PIM Join messages towards the RP. If the source is already sending, both MLAG switches receive the multicast stream.

Traditionally, the PIM DR is the only node to send the PIM *,G Join, but to provide resiliency in case of failure, both MLAG switches send PIM *,G Joins towards the RP to receive the multicast stream.

To prevent duplicate multicast packets, a Designated Forwarder (DF) is elected. The DF is the primary member of the MLAG pair. As a result, the MLAG secondary puts the VLAN in the Outgoing Interface List (OIL), preventing duplicate multicast traffic.

Verify PIM

The following outputs are based on the Cumulus Reference Topology with cldemo-pim.

Source Starts First

On the FHR, an mroute is built, but the upstream state is Prune. The FHR flag is set on the interface receiving multicast. Run the NCLU net show commands to review detailed output for the FHR. For example:

On the RP, no mroute state is created, but the net show pim upstream output includes the Source and Group:

As a receiver joins the group, the mroute output interface on the FHR transitions from none to the RPF interface of the RP:

Receiver Joins First

On the LHR attached to the receiver:

On the RP:

Source Starts First

On the FHR, an mroute is built, but the upstream state is Prune. The FHR flag is set on the interface receiving multicast.

Use the vtysh show ip commands to review detailed output for the FHR. For example:

On the RP, no mroute state is created, but the show ip pim upstream output includes the Source and Group:

As a receiver joins the group, the mroute output interface on the FHR transitions from none to the RPF interface of the RP:

Receiver Joins First

On the LHR attached to the receiver:

Additional PIM Features

Custom SSM multicast group ranges

PIM considers 232.0.0.0/8 the default SSM range. You can change the SSM range by defining a prefix-list and attaching it to the ssm-range command. You can change the default SSM group or add additional group ranges to be treated as SSM groups.

If you use the ssm-range command, all SSM ranges must be in the prefix-list, including 232.0.0.0/8.

Create a prefix-list with the permit keyword to match address ranges that should be treated as SSM groups, and the deny keyword for ranges that should not be treated as SSM-enabled ranges.
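To make the permit/deny logic concrete, here is a Python sketch of how prefix-list evaluation decides whether a group is treated as SSM. Entries are checked in sequence order, the first match wins, an implicit deny applies when nothing matches, and ge/le bound the matched prefix length. The `Entry` type and the list contents are hypothetical, and evaluating the group as a /32 in exactly this way is an assumption about pimd. The entries use `ge 32` because, without ge/le, an entry matches only a prefix of exactly its own length, so a bare /8 entry would never match a single group address.

```python
import ipaddress
from typing import List, NamedTuple, Optional


class Entry(NamedTuple):
    """One prefix-list line: seq N permit|deny PREFIX [ge X] [le Y]."""
    seq: int
    action: str  # "permit" or "deny"
    prefix: str
    ge: Optional[int] = None
    le: Optional[int] = None


def entry_matches(entry: Entry, net: ipaddress.IPv4Network) -> bool:
    base = ipaddress.IPv4Network(entry.prefix)
    if not net.subnet_of(base):  # Python 3.7+
        return False
    if entry.ge is None and entry.le is None:
        # Without ge/le, only the exact prefix length matches.
        return net.prefixlen == base.prefixlen
    low = entry.ge if entry.ge is not None else base.prefixlen
    high = entry.le if entry.le is not None else 32
    return low <= net.prefixlen <= high


def is_ssm_group(group: str, entries: List[Entry]) -> bool:
    """First match by sequence number decides; no match is an implicit deny."""
    net = ipaddress.IPv4Network(f"{group}/32")
    for entry in sorted(entries, key=lambda e: e.seq):
        if entry_matches(entry, net):
            return entry.action == "permit"
    return False


# Hypothetical list: keep the default 232.0.0.0/8 and add 238.0.0.0/8.
MY_CUSTOM_SSM_RANGE = [
    Entry(5, "permit", "232.0.0.0/8", ge=32),
    Entry(10, "permit", "238.0.0.0/8", ge=32),
]
```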

Apply the custom prefix-list as an ssm-range:

To view the configured prefix-lists, run the net show ip prefix-list command:

Create a prefix-list with the permit keyword to match address ranges that you want to treat as SSM groups and the deny keyword for the ranges you do not want to treat as SSM-enabled ranges:

Apply the custom prefix-list as an ssm-range:

To view the configured prefix-lists, run the show ip prefix-list my-custom-ssm-range command:

PIM and ECMP

PIM uses the RPF procedure to choose an upstream interface to build a forwarding state. If you configure equal-cost multipaths (ECMP), PIM chooses the RPF based on the ECMP hash algorithm.

Run the net add pim ecmp command to enable PIM to use all the available nexthops for the installation of mroutes. For example, if you have four-way ECMP, PIM spreads the S,G and *,G mroutes across the four different paths.
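The idea of spreading mroutes over equal-cost paths, and the difference that rebalance makes after a path is lost, can be sketched in Python. CRC32 stands in for the real hash here; FRR's actual algorithm is not described in this document, and all function names are hypothetical.

```python
import zlib
from typing import Dict, List, Tuple


def pick_nexthop(source: str, group: str, nexthops: List[str]) -> str:
    """Deterministically map an (S,G) to one of the equal-cost nexthops.
    Use "*" as the source for (*,G). CRC32 is an illustrative stand-in,
    not the hash FRR actually uses."""
    key = f"{source},{group}".encode()
    return nexthops[zlib.crc32(key) % len(nexthops)]


def reassign_after_loss(assignments: Dict[Tuple[str, str], str], lost: str,
                        remaining: List[str], rebalance: bool = False) -> Dict[Tuple[str, str], str]:
    """Recompute nexthops after `lost` disappears.

    rebalance=False: only streams that used the lost nexthop move.
    rebalance=True: every stream is re-hashed over the remaining nexthops,
    so streams that were healthy may move too.
    """
    result = {}
    for (source, group), nexthop in assignments.items():
        if rebalance or nexthop == lost:
            result[(source, group)] = pick_nexthop(source, group, remaining)
        else:
            result[(source, group)] = nexthop
    return result
```

With rebalance, even healthy streams can change path. That is one plausible reason the rebalance command can cost some packets, though this is an inference rather than something the text states.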

Run the ip pim ecmp rebalance command to recalculate all stream paths in the event of a loss of path over one of the ECMP paths. Without this command, only the streams that are using the path that is lost are moved to alternate ECMP paths. Rebalance does not affect existing groups.

The rebalance command might cause some packet loss.

Run the ip pim ecmp command to enable PIM to use all the available nexthops for the installation of mroutes. For example, if you have four-way ECMP, PIM spreads the S,G and *,G mroutes across the four different paths.

Run the ip pim ecmp rebalance command to recalculate all stream paths in the event of a loss of path over one of the ECMP paths. Without this command, only the streams that are using the path that is lost are moved to alternate ECMP paths. Rebalance does not affect existing groups.

The rebalance command might cause some packet loss.

To show which nexthop is selected for a specific source/group, run the show ip pim nexthop command from the vtysh shell:

IP Multicast Boundaries

Multicast boundaries enable you to limit the distribution of multicast traffic by setting boundaries with the goal of pushing multicast to a subset of the network.

With such boundaries in place, any incoming IGMP or PIM joins are dropped or accepted based upon the prefix-list specified. The boundary is implemented by applying an IP multicast boundary OIL (outgoing interface list) on an interface.

To configure the boundary, first create a prefix-list as described above, then run the following commands to configure the IP multicast boundary:

Multicast Source Discovery Protocol (MSDP)

You can use the Multicast Source Discovery Protocol (MSDP) to connect multiple PIM-SM multicast domains together, using the PIM-SM RPs. Configuring anycast RPs with the same IP address on multiple multicast switches (primarily on the loopback interface) relaxes the PIM-SM limitation of only one RP per multicast group. This improves both failover and load balancing throughout the network.

When an RP discovers a new source (typically through a PIM-SM register message), a source-active (SA) message is sent to each MSDP peer. The peer then determines if any receivers are interested.

Cumulus Linux MSDP support is primarily for anycast-RP configuration, rather than multiple multicast domains. You must configure the MSDP peers in a full mesh, because SA messages received from a peer are not re-forwarded to other peers.

Cumulus Linux currently only supports one MSDP mesh group.

The following steps demonstrate how to configure a Cumulus switch to use MSDP:

Add an anycast IP address to the loopback interface for each RP in the domain:

On every multicast switch, configure the group to RP mapping using the anycast address:

Configure the MSDP mesh group for all active RPs (the following example uses 3 RPs):

The mesh group must include all RPs in the domain as members, with a unique address as the source. This configuration results in MSDP peerings between all RPs.
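Because SA messages are not re-forwarded inside the mesh group, every pair of RPs needs its own session, so n RPs require n(n-1)/2 peerings. The Python sketch below lists them; the three RP addresses are hypothetical unique loopback addresses, not the anycast address.

```python
from itertools import combinations
from typing import List, Tuple


def msdp_mesh_peerings(rp_addresses: List[str]) -> List[Tuple[str, str]]:
    """Every pair of RPs needs an MSDP session: SA messages received from one
    mesh-group member are not re-forwarded to the others."""
    return list(combinations(rp_addresses, 2))


# Three hypothetical RP loopback (unique, non-anycast) addresses.
RPS = ["10.0.0.11", "10.0.0.12", "10.0.0.13"]
for local, peer in msdp_mesh_peerings(RPS):
    print(f"MSDP session {local} <-> {peer}")
```

The three-RP example therefore needs three sessions. Adding a fourth RP raises the count to six.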

Pick the local loopback address as the source of the MSDP control packets:

Inject the anycast IP address into the IGP of the domain. If the network is unnumbered and uses unnumbered BGP as the IGP, avoid using the anycast IP address for establishing unicast or multicast peerings. For PIM-SM, ensure that the unique address is used as the PIM hello source by setting the source:

Edit the /etc/network/interfaces file to add an anycast IP address to the loopback interface for each RP in the domain. For example:

Run the ifreload -a command to load the new configuration:

On every multicast switch, configure the group to RP mapping using the anycast address:

Configure the MSDP mesh group for all active RPs (the following example uses 3 RPs):

The mesh group must include all RPs in the domain as members, with a unique address as the source. This configuration results in MSDP peerings between all RPs.

Pick the local loopback address as the source of the MSDP control packets:

Inject the anycast IP address into the IGP of the domain. If the network is unnumbered and uses unnumbered BGP as the IGP, avoid using the anycast IP address for establishing unicast or multicast peerings. For PIM-SM, ensure that the unique address is used as the PIM hello source by setting the source:

PIM in a VRF

VRFs divide the routing table on a per-tenant basis, providing separate layer 3 networks over a single layer 3 infrastructure. With a VRF, each tenant has its own virtualized layer 3 network, so IP addresses can overlap between tenants.

PIM in a VRF enables PIM trees and multicast data traffic to run inside a layer 3 virtualized network, with a separate tree per domain or tenant. Each VRF has its own multicast tree with its own RP(s), sources, and so on. For example, you can have one tenant per corporate division, client, or product.

When MP-BGP VPN is not enabled or not supported, VRFs on different switches typically connect, or peer, over subinterfaces, with each subinterface in its own VRF.

To configure PIM in a VRF, run the following commands.

First, add the VRFs and associate them with switch ports:

Then add the PIM configuration to FRR, review and commit the changes:

To configure this with vtysh instead, first edit the /etc/network/interfaces file to add the VRFs and associate them with switch ports, then run ifreload -a to reload the configuration.

Then add the PIM configuration to FRR. You can do this in vtysh:
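A sketch of both halves of the VRF setup for a single hypothetical VRF named `BLUE` with one port, `swp1`, and an RP at 192.0.2.100. It assumes ifupdown2's `vrf-table auto` / `vrf <name>` keywords and an FRR release that accepts `ip pim` at interface level and `ip pim rp` inside a `vrf` block; all names and addresses are illustrative only.

```python
from __future__ import annotations


def vrf_interfaces(vrf: str, ports: list[str]) -> str:
    """Render ifupdown2 stanzas that create a VRF and place ports in it."""
    lines = [f"auto {vrf}", f"iface {vrf}", "    vrf-table auto", ""]
    for port in ports:
        # Each port (or a subinterface such as swp1.10) joins the VRF.
        lines += [f"auto {port}", f"iface {port}", f"    vrf {vrf}", ""]
    return "\n".join(lines)


def vrf_pim_frr(vrf: str, rp: str, ports: list[str],
                group_range: str = "224.0.0.0/4") -> str:
    """Render FRR configuration that enables PIM-SM inside one VRF."""
    # The RP mapping lives inside the VRF, so each tenant can use its own RP.
    lines = [f"vrf {vrf}", f" ip pim rp {rp} {group_range}", "exit-vrf"]
    for port in ports:
        lines += [f"interface {port} vrf {vrf}", " ip pim", "exit"]
    return "\n".join(lines) + "\n"


print(vrf_interfaces("BLUE", ["swp1"]))
print(vrf_pim_frr("BLUE", "192.0.2.100", ["swp1"]))
```

Repeating both calls with a second VRF name and RP gives a second, independent multicast domain on the same switch.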

To show the multicast routes in a VRF, run the NCLU net show mroute vrf command or the vtysh show ip mroute vrf command:

BFD for PIM Neighbors

You can use bidirectional forwarding detection (BFD) for PIM neighbors to quickly detect link failures. When you configure an interface, include the pim bfd option. For example:
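In FRR configuration, the pim bfd option corresponds to an `ip pim bfd` line in the interface block. The helper below is a hypothetical sketch that renders such a block; the interface name `swp51` is an example, and the `igmp` flag is included only to show where IGMP would go on a receiver-facing port.

```python
def pim_interface_stanza(ifname: str, bfd: bool = True, igmp: bool = False) -> str:
    """Render an FRR interface block with PIM enabled and, optionally, BFD and IGMP."""
    lines = [f"interface {ifname}", " ip pim"]
    if bfd:
        lines.append(" ip pim bfd")  # track PIM neighbors on this link with BFD
    if igmp:
        lines.append(" ip igmp")  # only needed on receiver-facing interfaces
    return "\n".join(lines) + "\n"


print(pim_interface_stanza("swp51"))
```

Enabling BFD on both ends of the link matters: a BFD session only comes up when the neighbor is configured for it as well.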

Troubleshooting

FHR Stuck in Registering Process

When a multicast source starts, the FHR sends unicast PIM register messages from the RPF interface towards the RP. When the RP receives the PIM register, it sends a PIM register stop message to the FHR to end the register process. If this exchange fails, the FHR stays stuck in the registering process, which can cause high CPU usage, because the FHR CPU generates the PIM register packets and sends them to the RP CPU.

To assess this issue:

Review the multicast routes on the FHR. While the FHR is registering, the outgoing interface is pimreg. If it does not change to another interface within a few seconds, the FHR is likely stuck.
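The "still pimreg after a few seconds" check can be sketched as a comparison of two snapshots of the FHR's (S,G) entries. The `MrouteEntry` structure below is hypothetical: it assumes you copy the source, group, incoming interface, and outgoing interfaces by hand (or with your own parser) from two readings of the mroute table taken a few seconds apart; it does not parse any real command output.

```python
from typing import FrozenSet, Iterable, NamedTuple, Set, Tuple


class MrouteEntry(NamedTuple):
    """One (S,G) row taken from the mroute table (hypothetical structure)."""
    source: str
    group: str
    iif: str
    oifs: FrozenSet[str]


def registering(entries: Iterable[MrouteEntry]) -> Set[Tuple[str, str]]:
    """(S,G) pairs whose only outgoing interface is pimreg."""
    return {(e.source, e.group) for e in entries if e.oifs == {"pimreg"}}


def stuck_in_registering(earlier: Iterable[MrouteEntry],
                         later: Iterable[MrouteEntry]) -> Set[Tuple[str, str]]:
    """(S,G) pairs that are still registering in both snapshots."""
    return registering(earlier) & registering(later)
```

An (S,G) that shows up in the result is a candidate for the reachability and packet-capture checks that follow.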

To troubleshoot the issue:

Validate that the FHR can reach the RP. If the RP and FHR cannot communicate, the registration process fails:

On the RP, use tcpdump to see if the PIM register packets are arriving:
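PIM runs directly over IP as protocol 103, so a filter such as `tcpdump -i any ip proto 103` captures it. To tell register messages from register stops in a raw capture, note that the PIMv2 header (RFC 7761) stores the version in the high four bits of its first byte and the message type in the low four bits: 1 is Register and 2 is Register-Stop. The Python sketch below decodes that field from a raw IPv4 packet; it is an illustration only and does not replace tcpdump's own PIM decoder.

```python
from __future__ import annotations

PIM_PROTO = 103  # IP protocol number for PIM
PIM_TYPES = {
    0: "Hello",
    1: "Register",
    2: "Register-Stop",
    3: "Join/Prune",
    4: "Bootstrap",
    5: "Assert",
    8: "Candidate-RP-Advertisement",
}


def pim_message_type(ip_packet: bytes) -> str | None:
    """Return the PIMv2 message type of a raw IPv4 packet, or None if not PIMv2."""
    if len(ip_packet) < 20 or ip_packet[0] >> 4 != 4:
        return None  # too short, or not IPv4
    ihl = (ip_packet[0] & 0x0F) * 4  # IPv4 header length in bytes
    if ip_packet[9] != PIM_PROTO or len(ip_packet) < ihl + 4:
        return None
    version, msg_type = ip_packet[ihl] >> 4, ip_packet[ihl] & 0x0F
    if version != 2:
        return None
    return PIM_TYPES.get(msg_type, f"type {msg_type}")
```

Seeing Register packets arrive on the RP with no Register-Stop packets leaving it points at the RP side; seeing neither points back at reachability.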

If PIM register packets are arriving, verify that the PIM process sees them by running debug pim packets from within FRRouting:

Repeat the process on the FHR to see if PIM register stop messages are being received on the FHR and passed to the PIM process:

No *,G Is Built on LHR

The most common reason an LHR does not build a *,G is that PIM and IGMP are not both enabled on the interface facing the receiver.
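A quick way to check this is to look at the receiver-facing interface block in the FRR running configuration. The sketch below assumes the usual FRR layout (an unindented `interface <name>` line followed by one-space-indented commands, ending at `!` or `exit`) and treats `ip pim` or the older `ip pim sm` as PIM being enabled; the function name and sample config are hypothetical.

```python
from __future__ import annotations


def missing_receiver_commands(running_config: str, ifname: str) -> list[str]:
    """List which of 'ip pim' / 'ip igmp' are absent from an interface block."""
    in_block = False
    found = set()
    for raw in running_config.splitlines():
        if raw.startswith("interface "):
            # Matches "interface swp1" as well as "interface swp1 vrf BLUE".
            in_block = raw.split()[1] == ifname
            continue
        if not in_block:
            continue
        line = raw.strip()
        if line in ("!", "exit") or (raw and not raw[0].isspace()):
            in_block = False  # end of the interface block
            continue
        if line in ("ip pim", "ip pim sm"):
            found.add("ip pim")
        elif line == "ip igmp":
            found.add("ip igmp")
    return [cmd for cmd in ("ip pim", "ip igmp") if cmd not in found]


sample = "interface swp1\n ip pim\n ip igmp\n!\ninterface swp2\n ip pim\n!\n"
print(missing_receiver_commands(sample, "swp2"))
```

An empty list means both protocols are enabled on that interface, so the next step is to look at what the receiver itself is sending.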

If both PIM and IGMP are enabled, make sure the receiver is sending IGMPv3 joins:
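To generate a join from a Linux receiver without a full multicast client, a plain socket is enough: `IP_ADD_MEMBERSHIP` makes the kernel send the membership report, and closing the socket makes it leave the group. Linux normally reports with IGMPv3 unless it has heard IGMPv2 queries or `force_igmp_version` is set on the interface. The sketch below also decodes the IGMP type byte (RFC 3376: 0x22 is an IGMPv3 report, 0x16 an IGMPv2 report) from a raw IPv4 packet so you can confirm which version the receiver actually sends; the helper names and the group 239.1.1.1 are hypothetical.

```python
from __future__ import annotations

import socket
import struct

IGMP_PROTO = 2  # IP protocol number for IGMP
IGMP_TYPES = {
    0x11: "Membership Query",
    0x12: "IGMPv1 Membership Report",
    0x16: "IGMPv2 Membership Report",
    0x17: "IGMPv2 Leave Group",
    0x22: "IGMPv3 Membership Report",
}


def igmp_message_type(ip_packet: bytes) -> str | None:
    """Return the IGMP message type of a raw IPv4 packet, or None if not IGMP."""
    if len(ip_packet) < 20 or ip_packet[0] >> 4 != 4:
        return None
    ihl = (ip_packet[0] & 0x0F) * 4  # IGMP packets usually carry Router Alert (IHL 6)
    if ip_packet[9] != IGMP_PROTO or len(ip_packet) <= ihl:
        return None
    msg_type = ip_packet[ihl]
    return IGMP_TYPES.get(msg_type, f"unknown type 0x{msg_type:02x}")


def build_mreq(group: str, iface_addr: str = "0.0.0.0") -> bytes:
    """struct ip_mreq: group address followed by local interface address."""
    return struct.pack("4s4s", socket.inet_aton(group), socket.inet_aton(iface_addr))


def join_group(group: str, iface_addr: str = "0.0.0.0") -> socket.socket:
    """Join a group; the membership lasts until the returned socket is closed."""
    sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    sock.setsockopt(socket.IPPROTO_IP, socket.IP_ADD_MEMBERSHIP,
                    build_mreq(group, iface_addr))
    return sock
```

For example, `sock = join_group("239.1.1.1", "10.1.1.5")` (with the receiver's own interface address) keeps the membership while the process runs, and `sock.close()` triggers the leave; capturing with `tcpdump -i any igmp` on the receiver at the same time shows which report version goes out.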

No mroute Created on FHR

To troubleshoot this issue:

Verify that multicast traffic is being received:

Verify that PIM is configured on the interface facing the source:

If PIM is configured, verify that the RPF interface for the source matches the interface on which the multicast traffic is received:
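The RPF interface is the interface of the unicast route the switch would use to reach the source, chosen by longest-prefix match. The sketch below reproduces that lookup against a hypothetical routing table given as (prefix, interface) pairs and compares the result with the interface the traffic actually arrives on; it models the concept only and does not read the switch's real routing table.

```python
from __future__ import annotations

import ipaddress


def rpf_interface(source: str, routes: list[tuple[str, str]]) -> str | None:
    """Interface of the longest-prefix unicast route back towards the source."""
    addr = ipaddress.ip_address(source)
    best_len, best_iface = -1, None
    for prefix, iface in routes:
        net = ipaddress.ip_network(prefix)
        if addr in net and net.prefixlen > best_len:
            best_len, best_iface = net.prefixlen, iface
    return best_iface


def rpf_check(source: str, arrival_iface: str, routes: list[tuple[str, str]]) -> bool:
    """True if multicast from `source` arrives on its RPF interface."""
    return rpf_interface(source, routes) == arrival_iface


# Hypothetical routing table on the FHR.
table = [("0.0.0.0/0", "swp51"), ("10.1.0.0/16", "swp1"), ("10.1.2.0/24", "swp2")]
print(rpf_interface("10.1.2.5", table))
```

If traffic from 10.1.2.5 arrives on swp1 while the best route points at swp2, the RPF check fails and no mroute is built, which is the mismatch this step looks for.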

Verify that an RP is configured for the multicast group:
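Whether a group has an RP depends on the group-to-RP mappings. The sketch below assumes the switch picks the most specific matching group range and that groups in the SSM range (232.0.0.0/8 by default) need no RP, since SSM joins name the source directly; the mapping list and addresses are hypothetical.

```python
from __future__ import annotations

import ipaddress


def rp_for_group(group: str, rp_mappings: list[tuple[str, str]],
                 ssm_range: str = "232.0.0.0/8") -> str | None:
    """Return the RP for a group, or None if the group needs no RP or has none."""
    addr = ipaddress.IPv4Address(group)
    if not addr.is_multicast:
        raise ValueError(f"{group} is not a multicast group address")
    if addr in ipaddress.IPv4Network(ssm_range):
        return None  # SSM groups are joined per source and use no RP
    best_len, best_rp = -1, None
    for prefix, rp in rp_mappings:
        net = ipaddress.IPv4Network(prefix)
        if addr in net and net.prefixlen > best_len:
            best_len, best_rp = net.prefixlen, rp
    return best_rp


# Hypothetical mappings: a default RP plus a more specific range.
mappings = [("224.0.0.0/4", "192.0.2.100"), ("239.1.0.0/16", "198.51.100.1")]
print(rp_for_group("239.1.2.3", mappings))
```

A `None` result for an any-source group outside the SSM range means no RP covers it, which matches the missing-RP case this step checks for.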

No S,G on RP for an Active Group

An RP does not build an mroute when there are no active receivers for a multicast group, even though the mroute was created on the FHR.

This is expected behavior. You can see the active source on the RP with either the NCLU net show pim upstream command or the vtysh show ip pim upstream command:

No mroute Entry Present in Hardware

Use the cl-resource-query command to check whether the number of hardware IP multicast entries has reached the maximum value:

You can also run the equivalent NCLU command, net show system asic | grep Mcast.

Verify MSDP Session State

To verify the state of MSDP sessions, run either the NCLU net show msdp mesh-group command or the vtysh show ip msdp mesh-group command:

View the Active Sources

To review the active sources learned locally (through PIM registers) and from MSDP peers, run either the NCLU net show msdp sa command or the vtysh show ip msdp sa command:
