- Windows Server 2012: Setting the network connection type (private or public network)

- Team Network Interfaces in Windows Server 2012 R2

- Overview

- Teaming Modes

- Static Teaming

- Switch Independent

- Load Balancing Mode

- Address Hash

- Hyper-V Port

- Standby Adapter

- Hardware Requirements

- Creating a Team

- NIC Teaming Console

- Summary

- Network Recommendations for a Hyper-V Cluster in Windows Server 2012

- Overview of different network traffic types

- Management traffic

- Cluster traffic

- Live migration traffic

- Storage traffic

- Replica traffic

- Virtual machine access traffic

- How to isolate the network traffic on a Hyper-V cluster

- Isolate traffic on the management network

- Isolate traffic on the cluster network

- Isolate traffic on the live migration network

- Isolate traffic on the storage network

- Isolate traffic for replication

- NIC Teaming (LBFO) recommendations

- Quality of Service (QoS) recommendations

- Virtual machine queue (VMQ) recommendations

- Example of converged networking: routing traffic through one Hyper-V virtual switch

- Appendix: Encryption

- Cluster traffic

- Live migration traffic

- SMB traffic

- Replica traffic

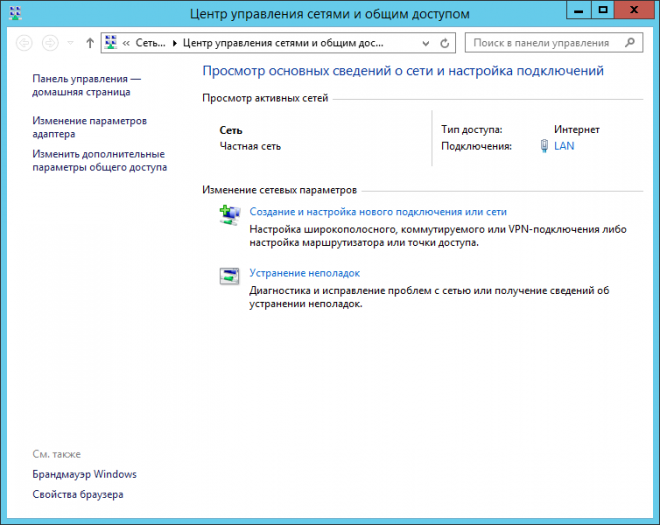

Windows Server 2012: Setting the network connection type (private or public network)

Network profiles first appeared in Windows Vista and Windows Server 2008. They are part of Windows Firewall with Advanced Security and are used to apply different firewall rules depending on the category (type) of network you connect to. Before Windows Server 2012, administrators could change the category of a network profile in the Network and Sharing Center. Windows Server 2012 removed this option and only lets you view the profile that is applied.

In Windows Server 2012, you can use PowerShell to view the current network profiles and assign each one the appropriate category: "Private network" or "Public network".

The Get-NetConnectionProfile cmdlet displays the current profiles for all active connections:

```
PS C:\Users\Administrator> Get-NetConnectionProfile

Name             : Network
InterfaceAlias   : Ethernet
InterfaceIndex   : 12
NetworkCategory  : Public
IPv4Connectivity : Internet
IPv6Connectivity : LocalNetwork
```

The Set-NetConnectionProfile cmdlet sets the category of a network profile. The following command sets every active connection to Private:

```powershell
Get-NetConnectionProfile | Set-NetConnectionProfile -NetworkCategory Private
```

By default, Windows Server 2012 assigns the Public network profile to all network connections. When the server is joined to a domain, the profile changes automatically. If the server is in a workgroup, you must set the network connection category manually.
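The pipeline shown earlier changes every active connection at once. The following minimal sketch changes a single connection instead. It assumes the `Ethernet` interface alias from the sample output, so substitute your own alias. The sketch targets the profile with `-InterfaceAlias` and then reads the category back. Only Public and Private are meant to be set by hand. The domain category is assigned automatically when the server joins a domain.

```powershell
# Change only the connection whose alias is "Ethernet" (alias taken from the
# sample output above; use the alias shown on your own server).
Set-NetConnectionProfile -InterfaceAlias "Ethernet" -NetworkCategory Private

# Read the profile back to confirm the new category.
Get-NetConnectionProfile -InterfaceAlias "Ethernet" |
    Select-Object Name, InterfaceAlias, NetworkCategory
```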

Team Network Interfaces in Windows Server 2012 R2

Overview

There was a time when teaming network interfaces was done using software supplied by the NIC’s manufacturer. And if you were administering Microsoft servers back then, you probably remember how truly awful some of the drivers and teaming software were.

With the release of Windows Server 2012, Microsoft has finally made this a native feature of the operating system. NIC teaming in the Microsoft server environment could not be simpler or more reliable.

Teaming Modes

There are several different modes that can be used for the team. Make sure you understand the differences between them, because some modes require advanced network hardware.

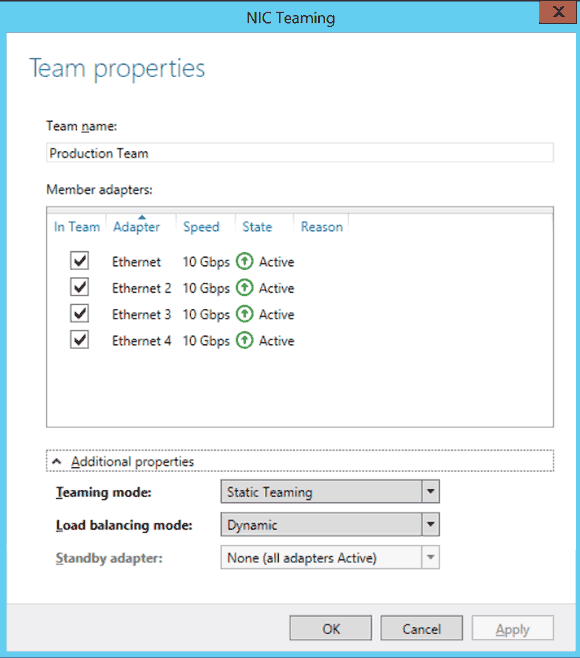

Static Teaming

Static teaming allows you to aggregate multiple interfaces into a single connection to provide simple fault tolerance or increased bandwidth. With this mode, you can create a 40Gb team by combining four 10Gb NICs. However, any single connection to the team is limited to the speed of the team member NIC it uses. To reach the team's 40Gb maximum, you would need at least four concurrent 10Gb client connections to the server.

Switch Independent

This mode can be used on any network. Unlike static teaming and LACP, it does not require a server-class network switch, and each interface can connect to a different switch.

LACP

Link Aggregation Control Protocol (LACP), defined in IEEE 802.3ad, is a more advanced, negotiated alternative to static teaming. LACP requires configuration on the switch the server is connected to. The switch ports that the server’s NICs connect to must be grouped into a single link aggregation (an EtherChannel, in Cisco terms) to create the aggregate connection. As with static teaming, each connection is limited to the speed of the team member NIC it uses.
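The same teaming modes can also be selected from PowerShell with `New-NetLbfoTeam`. The sketch below assumes two hypothetical adapters named `NIC1` and `NIC2` and a team named `Team1`. For an LACP team, the matching switch ports must already be configured as a link aggregation group.

```powershell
# List the physical adapters to find the names you want to team.
Get-NetAdapter -Physical | Format-Table Name, InterfaceDescription, LinkSpeed

# Create an LACP team from two hypothetical adapters.
# Other -TeamingMode values: Static, SwitchIndependent.
# -Confirm:$false suppresses the confirmation prompt.
New-NetLbfoTeam -Name "Team1" -TeamMembers "NIC1", "NIC2" -TeamingMode Lacp -Confirm:$false
```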

Load Balancing Mode

This setting determines how the team distributes traffic across its member NICs. Unless you are running a Hyper-V server, Address Hash is usually the mode you will use.

Address Hash

The team computes a hash of each packet's TCP ports and IP addresses and uses the result to choose a team interface. As a result, all packets of a given connection travel through the same interface. This is what allows you to spread multiple network connections across the team’s interfaces and approach the team’s total bandwidth.

Hyper-V Port

This mode is intended for Hyper-V servers only. It distributes traffic from virtual machines across the team's interfaces by binding (affinitizing) each virtual switch port to a single team member interface.
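The load balancing mode of an existing team can be changed with `Set-NetLbfoTeam`. In the cmdlets, Address Hash on ports and IP addresses should correspond to the `TransportPorts` value, and Hyper-V Port to `HyperVPort`. Treat that mapping, and the team name `Team1`, as assumptions to check with `Get-Help Set-NetLbfoTeam` on your server. Run only the line for the mode you want.

```powershell
# Address Hash: hash on TCP/UDP ports and IP addresses.
Set-NetLbfoTeam -Name "Team1" -LoadBalancingAlgorithm TransportPorts

# Hyper-V Port: for teams that carry a Hyper-V virtual switch.
# Set-NetLbfoTeam -Name "Team1" -LoadBalancingAlgorithm HyperVPort

# Show the mode currently in effect.
Get-NetLbfoTeam -Name "Team1" | Select-Object Name, TeamingMode, LoadBalancingAlgorithm
```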

Standby Adapter

This option lets you either spread traffic across all adapters in the team or designate one adapter as a standby. The standby adapter adds a layer of fault tolerance: when an active network adapter fails, the standby adapter takes over for it.
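To set a standby adapter from PowerShell, here is a sketch that assumes a hypothetical team `Team1` with members `NIC1` and `NIC2`:

```powershell
# Put NIC2 into standby so that it carries traffic only if the active member fails.
Set-NetLbfoTeamMember -Name "NIC2" -Team "Team1" -AdministrativeMode Standby

# To return NIC2 to active use later:
# Set-NetLbfoTeamMember -Name "NIC2" -Team "Team1" -AdministrativeMode Active
```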

Hardware Requirements

The advantage of using Microsoft’s built-in teaming software is that any NIC can be added to a team. With third-party or vendor-supplied teaming software, you are usually locked into a single vendor and model.

It is still wise to use the same NIC make, model, and driver version for all members of a team. This eliminates hard-to-troubleshoot problems later in a production environment, where identifying the problematic interface or driver can be a daunting task.

Creating a Team

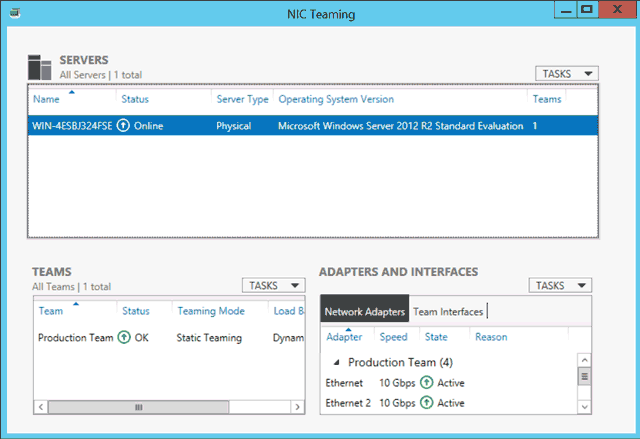

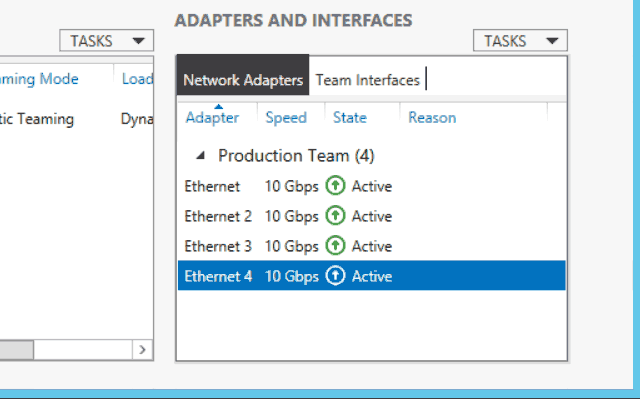

NIC Teaming Console

- Launch the Server Manager console.

- Click Local Server on the left sidebar of the console.

- In the Properties section, find NIC Teaming and click its status link, which reads either Disabled or Enabled (it is Disabled by default). This launches the NIC Teaming console.

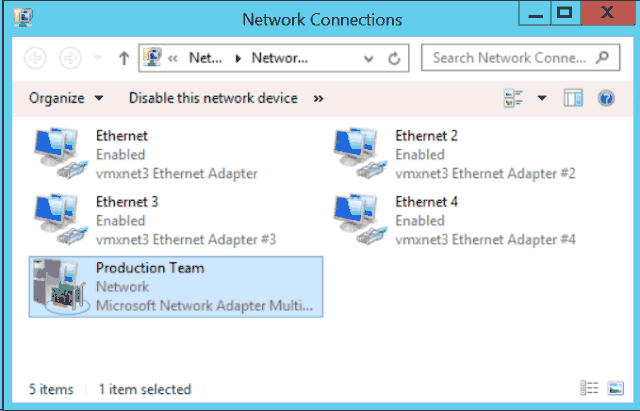

Open the Network Connections window to view the new team. Its icon is noticeably different from those of the physical adapters.

Right-click the team and select Status to verify the interface is configured properly.
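You can also check the team from PowerShell. This minimal sketch again assumes a hypothetical team named `Team1`. It shows the team's overall status and the state of each member.

```powershell
# Team-level view: status, teaming mode and load balancing mode.
Get-NetLbfoTeam | Format-Table Name, Status, TeamingMode, LoadBalancingAlgorithm

# Member-level view, including any failure reason reported for a member.
Get-NetLbfoTeamMember -Team "Team1" |
    Format-Table Name, AdministrativeMode, OperationalStatus, FailureReason
```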

Summary

Microsoft’s NIC teaming feature works very well and can safely be used in production. It is one feature that most administrators will agree was a much-needed addition to the server operating system. No longer do you need to spend hours or days scouring vendors’ websites to find the latest teaming software for your network hardware.

In my own environment, I found configuring and managing network teams a breeze. Everything just worked, and the configuration screens are intuitive enough for most administrators to understand.

Network Recommendations for a Hyper-V Cluster in Windows Server 2012

Applies To: Windows Server 2012

There are several different types of network traffic that you must consider and plan for when you deploy a highly available Hyper-V solution. You should design your network configuration with the following goals in mind:

- To ensure network quality of service
- To provide network redundancy
- To isolate traffic to defined networks
- To take advantage of Server Message Block (SMB) Multichannel, where applicable

This topic provides network configuration recommendations that are specific to a Hyper-V cluster that is running Windows Server 2012. It includes an overview of the different network traffic types, recommendations for how to isolate traffic, recommendations for features such as NIC Teaming, Quality of Service (QoS) and Virtual Machine Queue (VMQ), and a Windows PowerShell script that shows an example of converged networking, where the network traffic on a Hyper-V cluster is routed through one external virtual switch.

Windows Server 2012 supports the concept of converged networking, where different types of network traffic share the same Ethernet network infrastructure. In previous versions of Windows Server, the typical recommendation for a failover cluster was to dedicate separate physical network adapters to different traffic types. Improvements in Windows Server 2012, such as Hyper-V QoS and the ability to add virtual network adapters to the management operating system, enable you to consolidate the network traffic on fewer physical adapters. Combined with traffic isolation methods such as VLANs, these features let you isolate and control the network traffic.

If you use System Center Virtual Machine Manager (VMM) to create or manage Hyper-V clusters, you must use VMM to configure the network settings that are described in this topic.


Overview of different network traffic types

When you deploy a Hyper-V cluster, you must plan for several types of network traffic. The following table summarizes the different traffic types.

| Network Traffic Type | Description |
|---|---|
| Management | Provides connectivity between the server that is running Hyper-V and basic infrastructure functionality. Used to manage the Hyper-V management operating system and virtual machines. |
| Cluster | Used for inter-node cluster communication such as the cluster heartbeat and Cluster Shared Volumes (CSV) redirection. |
| Live migration | Used for virtual machine live migration. |
| Storage | Used for SMB traffic or for iSCSI traffic. |
| Replica traffic | Used for virtual machine replication through the Hyper-V Replica feature. |
| Virtual machine access | Used for virtual machine connectivity. Typically requires external network connectivity to service client requests. |

The following sections provide more detailed information about each network traffic type.

Management traffic

A management network provides connectivity between the operating system of the physical Hyper-V host (also known as the management operating system) and basic infrastructure functionality such as Active Directory Domain Services (AD DS), Domain Name System (DNS), and Windows Server Update Services (WSUS). It is also used for management of the server that is running Hyper-V and the virtual machines.

The management network must have connectivity between all required infrastructure, and to any location from which you want to manage the server.

Cluster traffic

A failover cluster monitors and communicates the cluster state between all members of the cluster. This communication is very important to maintain cluster health. If a cluster node does not communicate a regular health check (known as the cluster heartbeat), the cluster considers the node down and removes the node from cluster membership. The cluster then transfers the workload to another cluster node.

Inter-node cluster communication also includes traffic that is associated with CSV. For CSV, where all nodes of a cluster can access shared block-level storage simultaneously, the nodes in the cluster must communicate to orchestrate storage-related activities. Also, if a cluster node loses its direct connection to the underlying CSV storage, CSV has resiliency features which redirect the storage I/O over the network to another cluster node that can access the storage.

Live migration traffic

Live migration enables the transparent movement of running virtual machines from one Hyper-V host to another without a dropped network connection or perceived downtime.

We recommend that you use a dedicated network or VLAN for live migration traffic to ensure quality of service and for traffic isolation and security. Live migration traffic can saturate network links. This can cause other traffic to experience increased latency. The time it takes to fully migrate one or more virtual machines depends on the throughput of the live migration network. Therefore, you must ensure that you configure the appropriate quality of service for this traffic. To provide the best performance, live migration traffic is not encrypted.

You can designate multiple networks as live migration networks in a prioritized list. For example, you may have a fast (10 Gbps) migration network for nodes in the same cluster, and a slower (1 Gbps) migration network for cross-cluster migrations.

All Hyper-V hosts that can initiate or receive a live migration must have connectivity to a network that is configured to allow live migrations. Because live migration can occur between nodes in the same cluster, between nodes in different clusters, and between a cluster and a stand-alone Hyper-V host, make sure that all these servers can access a live migration-enabled network.

Storage traffic

For a virtual machine to be highly available, all members of the Hyper-V cluster must be able to access the virtual machine state. This includes the configuration state and the virtual hard disks. To meet this requirement, you must have shared storage.

In Windows Server 2012, there are two ways that you can provide shared storage:

- Shared block storage. Shared block storage options include Fibre Channel, Fibre Channel over Ethernet (FCoE), iSCSI, and shared Serial Attached SCSI (SAS).
- File-based storage. Remote file-based storage is provided through SMB 3.0.

SMB 3.0 includes new functionality known as SMB Multichannel. SMB Multichannel automatically detects and uses multiple network interfaces to deliver high performance and highly reliable storage connectivity.

By default, SMB Multichannel is enabled, and requires no additional configuration. You should use at least two network adapters of the same type and speed so that SMB Multichannel is in effect. Network adapters that support RDMA (Remote Direct Memory Access) are recommended but not required.

SMB 3.0 also automatically discovers and takes advantage of available hardware offloads, such as RDMA. A feature known as SMB Direct supports the use of network adapters that have RDMA capability. SMB Direct provides the best performance possible while also reducing file server and client overhead.
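To check whether SMB Multichannel and RDMA are actually in effect on a Hyper-V host, you can run this read-only sketch of SMB and NetAdapter cmdlets. It changes nothing. `Get-SmbMultichannelConnection` lists connections only while SMB sessions to a file server are open.

```powershell
# Multichannel is on by default; confirm it on the Hyper-V host (the SMB client).
Get-SmbClientConfiguration | Select-Object EnableMultiChannel

# Show the SMB connections in use, including whether each side reports RDMA capability.
Get-SmbMultichannelConnection

# List adapters that expose RDMA and whether RDMA is enabled on them.
Get-NetAdapterRdma
```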

NIC Teaming is not compatible with RDMA: teaming an RDMA-capable network adapter disables its RDMA capability. Therefore, if you intend to use the RDMA capabilities of the network adapter, do not team those adapters.

Both iSCSI and SMB use the network to connect the storage to cluster members. Because reliable storage connectivity and performance is very important for Hyper-V virtual machines, we recommend that you use multiple networks (physical or logical) to ensure that these requirements are achieved.

Replica traffic

Hyper-V Replica provides asynchronous replication of Hyper-V virtual machines between two hosting servers or Hyper-V clusters. Replica traffic occurs between the primary and Replica sites.

Hyper-V Replica automatically discovers and uses available network interfaces to transmit replication traffic. To throttle and control the replica traffic bandwidth, you can define QoS policies with minimum bandwidth weight.

If you use certificate-based authentication, Hyper-V Replica encrypts the traffic. If you use Kerberos-based authentication, traffic is not encrypted.

Virtual machine access traffic

Most virtual machines require some form of network or Internet connectivity. For example, workloads that are running on virtual machines typically require external network connectivity to service client requests. This can include tenant access in a hosted cloud implementation. Because multiple subclasses of traffic may exist, such as traffic that is internal to the datacenter and traffic that is external (for example, to a computer outside the datacenter or to the Internet), one or more networks are required for these virtual machines to communicate.

To separate virtual machine traffic from the management operating system, we recommend that you use VLANs which are not exposed to the management operating system.
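Here is a minimal sketch of that approach. It assumes a hypothetical virtual machine `WebVM01` and VLAN ID 100, with no management operating system adapter on that VLAN.

```powershell
# Put the VM's network adapter in access mode on VLAN 100 (hypothetical values).
Set-VMNetworkAdapterVlan -VMName "WebVM01" -Access -VlanId 100

# Confirm the VLAN assignment.
Get-VMNetworkAdapterVlan -VMName "WebVM01"
```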

How to isolate the network traffic on a Hyper-V cluster

To provide the most consistent performance and functionality, and to improve network security, we recommend that you isolate the different types of network traffic.

Realize that if you want to have a physical or logical network that is dedicated to a specific traffic type, you must assign each physical or virtual network adapter to a unique subnet. For each cluster node, Failover Clustering recognizes only one IP address per subnet.

Isolate traffic on the management network

We recommend that you use a firewall or IPsec encryption, or both, to isolate management traffic. In addition, you can use auditing to ensure that only defined and allowed communication is transmitted through the management network.
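As one illustration of firewall-based isolation, the sketch below allows inbound WinRM (TCP 5985) only from a hypothetical management subnet, 10.0.10.0/24. It only adds an allow rule. Any broader existing rules for the same port must also be scoped or disabled for the restriction to take effect.

```powershell
# Allow inbound WinRM over HTTP only from the (hypothetical) management subnet.
New-NetFirewallRule -DisplayName "WinRM from management subnet" `
    -Direction Inbound -Protocol TCP -LocalPort 5985 `
    -RemoteAddress 10.0.10.0/24 -Action Allow
```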

Isolate traffic on the cluster network

To isolate inter-node cluster traffic, you can configure a network to either allow cluster network communication or not to allow cluster network communication. For a network that allows cluster network communication, you can also configure whether to allow clients to connect through the network. (This includes client and management operating system access.)

A failover cluster can use any network that allows cluster network communication for cluster monitoring, state communication, and for CSV-related communication.

To configure a network to allow or not to allow cluster network communication, you can use Failover Cluster Manager or Windows PowerShell. To use Failover Cluster Manager, click Networks in the navigation tree. In the Networks pane, right-click a network, and then click Properties.

Figure 1. Failover Cluster Manager network properties

The following Windows PowerShell example configures a network named Management Network to allow cluster and client connectivity.
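Here is a minimal sketch of such a command. It assumes the FailoverClusters module is available on the cluster node and that a cluster network named `Management Network` exists. The `Role` value 3 is explained in the table that follows.

```powershell
Import-Module FailoverClusters

# 3 = allow cluster network communication and client connectivity.
(Get-ClusterNetwork -Name "Management Network").Role = 3

# Review the role of every cluster network.
Get-ClusterNetwork | Format-Table Name, Role, Address
```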

The Role property has the following possible values.

| Value | Network Setting |
|---|---|
| 0 | Do not allow cluster network communication |
| 1 | Allow cluster network communication only |
| 3 | Allow cluster network communication and client connectivity |

The following table shows the recommended settings for each type of network traffic. Realize that virtual machine access traffic is not listed because these networks should be isolated from the management operating system by using VLANs that are not exposed to the host. Therefore, virtual machine networks should not appear in Failover Cluster Manager as cluster networks.

| Network Type | Recommended Setting |
|---|---|
| Management | Both of the following: Allow cluster network communication on this network; Allow clients to connect through this network |
| Cluster | Allow cluster network communication on this network. Note: Clear the Allow clients to connect through this network check box. |
| Live migration | Allow cluster network communication on this network. Note: Clear the Allow clients to connect through this network check box. |
| Storage | Do not allow cluster network communication on this network |
| Replica traffic | Both of the following: Allow cluster network communication on this network; Allow clients to connect through this network |

Isolate traffic on the live migration network

By default, live migration traffic uses the cluster network topology to discover available networks and to establish priority. However, you can manually configure live migration preferences to isolate live migration traffic to only the networks that you define. To do this, you can use Failover Cluster Manager or Windows PowerShell. To use Failover Cluster Manager, in the navigation tree, right-click Networks, and then click Live Migration Settings.

Figure 2. Live migration settings in Failover Cluster Manager

The following Windows PowerShell example enables live migration traffic only on a network that is named Migration_Network.
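Here is a sketch of one way to do this, assuming the FailoverClusters module. It collects the IDs of every cluster network except `Migration_Network` and stores them in the `MigrationExcludeNetworks` parameter of the Virtual Machine resource type. As a result, live migration can use only the remaining network. The last command reads the parameter back so you can verify the result.

```powershell
Import-Module FailoverClusters

# IDs of all cluster networks other than Migration_Network.
$excluded = Get-ClusterNetwork |
    Where-Object { $_.Name -ne "Migration_Network" } |
    ForEach-Object { $_.Id }

# Exclude them from live migration (the value is a semicolon-separated list of IDs).
Get-ClusterResourceType -Name "Virtual Machine" |
    Set-ClusterParameter -Name MigrationExcludeNetworks -Value ($excluded -join ";")

# Check the stored value.
Get-ClusterResourceType -Name "Virtual Machine" |
    Get-ClusterParameter -Name MigrationExcludeNetworks
```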

Isolate traffic on the storage network

To isolate SMB storage traffic, you can use Windows PowerShell to set SMB Multichannel constraints. SMB Multichannel constraints restrict SMB communication between a given file server and the Hyper-V host to one or more defined network interfaces.

For example, the following Windows PowerShell command sets a constraint for SMB traffic from the file server FileServer1 to the network interfaces SMB1, SMB2, SMB3, and SMB4 on the Hyper-V host from which you run this command.
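Here is a minimal sketch of that command, run on the Hyper-V host, using the server name and interface aliases from the text:

```powershell
# Limit SMB traffic to FileServer1 to the four SMB interfaces on this host.
New-SmbMultichannelConstraint -ServerName "FileServer1" -InterfaceAlias "SMB1", "SMB2", "SMB3", "SMB4"

# List the constraints now in place.
Get-SmbMultichannelConstraint
```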

To isolate iSCSI traffic, configure the iSCSI target with interfaces on a dedicated network (logical or physical). Use the corresponding interfaces on the cluster nodes when you configure the iSCSI initiator.
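Here is a sketch of the initiator side. It assumes two hypothetical addresses: 10.0.50.50 for the iSCSI target portal on the dedicated network, and 10.0.50.11 for this node's interface on the same network. Both discovery and login are bound to that pair of addresses.

```powershell
# Discover targets through the dedicated iSCSI interface only.
New-IscsiTargetPortal -TargetPortalAddress "10.0.50.50" -InitiatorPortalAddress "10.0.50.11"

# Log in to each target that is not yet connected, over the same interface pair,
# and keep the session across reboots.
Get-IscsiTarget | Where-Object { -not $_.IsConnected } | ForEach-Object {
    Connect-IscsiTarget -NodeAddress $_.NodeAddress `
        -TargetPortalAddress "10.0.50.50" `
        -InitiatorPortalAddress "10.0.50.11" `
        -IsPersistent $true
}
```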

Isolate traffic for replication

To isolate Hyper-V Replica traffic, we recommend that you use a different subnet for the primary and Replica sites.

If you want to isolate the replica traffic to a particular network adapter, you can define a persistent static route which redirects the network traffic to the defined network adapter. To specify a static route, use the following command:

```
route add <destination> mask <subnet mask> <gateway> if <interface> -p
```

For example, to add a static route to the 10.1.17.0 network (example network of the Replica site) that uses a subnet mask of 255.255.255.0, a gateway of 10.0.17.1 (example IP address of the primary site), where the interface number for the adapter that you want to dedicate to replica traffic is 8, run the following command:

```
route add 10.1.17.0 mask 255.255.255.0 10.0.17.1 if 8 -p
```
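On Windows Server 2012, you can also create the same route with the `New-NetRoute` cmdlet. The sketch below uses the example values above. As far as we know, `New-NetRoute` stores the route persistently by default. The second command checks that the route is in the persistent store.

```powershell
# Route the Replica site's 10.1.17.0/24 network through interface 8 via 10.0.17.1.
New-NetRoute -DestinationPrefix "10.1.17.0/24" -InterfaceIndex 8 -NextHop "10.0.17.1"

# Confirm the route was saved to the persistent store (survives a restart).
Get-NetRoute -DestinationPrefix "10.1.17.0/24" -PolicyStore PersistentStore
```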

NIC Teaming (LBFO) recommendations

We recommend that you team physical network adapters in the management operating system. This provides bandwidth aggregation and network traffic failover if a network hardware failure or outage occurs.

The NIC Teaming feature, also known as load balancing and failover (LBFO), provides two basic sets of algorithms for teaming.

- Switch-dependent modes. These require the switch to participate in the teaming process, and typically require all the network adapters in the team to be connected to the same switch.
- Switch-independent modes. These do not require the switch to participate in the teaming process. The team's network adapters can be connected to different switches, although this is not required.

Both modes provide for bandwidth aggregation and traffic failover if a network adapter failure or network disconnection occurs. However, in most cases only switch-independent teaming provides traffic failover for a switch failure.

NIC Teaming also provides a traffic distribution algorithm that is optimized for Hyper-V workloads. This algorithm is referred to as the Hyper-V port load balancing mode. This mode distributes the traffic based on the MAC address of the virtual network adapters. The algorithm uses round robin as the load-balancing mechanism. For example, on a server that has two teamed physical network adapters and four virtual network adapters, the first and third virtual network adapter will use the first physical adapter, and the second and fourth virtual network adapter will use the second physical adapter. Hyper-V port mode also enables the use of hardware offloads such as virtual machine queue (VMQ) which reduces CPU overhead for networking operations.

Recommendations

For a clustered Hyper-V deployment, we recommend that you use the following settings when you configure the additional properties of a team.

| Property Name | Recommended Setting |
|---|---|
| Teaming mode | Switch Independent (the default setting) |
| Load balancing mode | Hyper-V Port |

NIC teaming will effectively disable the RDMA capability of the network adapters. If you want to use SMB Direct and the RDMA capability of the network adapters, you should not use NIC Teaming.
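Here is a minimal sketch of a team with the recommended settings. It assumes two hypothetical adapters, `10GbPort1` and `10GbPort2`. The second command lists RDMA-capable adapters, which should stay out of the team if you plan to use SMB Direct.

```powershell
# Team two hypothetical adapters with the recommended cluster settings.
New-NetLbfoTeam -Name "ClusterTeam" -TeamMembers "10GbPort1", "10GbPort2" `
    -TeamingMode SwitchIndependent -LoadBalancingAlgorithm HyperVPort -Confirm:$false

# Adapters that report RDMA; keep these out of the team if you want SMB Direct.
Get-NetAdapterRdma | Format-Table Name, Enabled
```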

For more information about the NIC Teaming modes and how to configure NIC Teaming settings, see Windows Server 2012 NIC Teaming (LBFO) Deployment and Management and NIC Teaming Overview.

Quality of Service (QoS) recommendations

You can use QoS technologies that are available in Windows Server 2012 to meet the service requirements of a workload or an application. QoS provides the following:

- Measures network bandwidth, detects changing network conditions (such as congestion or availability of bandwidth), and prioritizes or throttles network traffic.
- Enables you to converge multiple types of network traffic on a single adapter.
- Includes a minimum bandwidth feature, which guarantees a certain amount of bandwidth to a given type of traffic.

We recommend that you configure appropriate Hyper-V QoS on the virtual switch to ensure that network requirements are met for all appropriate types of network traffic on the Hyper-V cluster.

You can use QoS to control outbound traffic but not inbound traffic. For example, with Hyper-V Replica, you can use QoS to control outbound traffic (from the primary server) but not inbound traffic (from the Replica server).

Recommendations

For a Hyper-V cluster, we recommend that you configure Hyper-V QoS that applies to the virtual switch. When you configure QoS, do the following:

Configure minimum bandwidth in weight mode instead of in bits per second. Minimum bandwidth specified by weight is more flexible and it is compatible with other features, such as live migration and NIC Teaming. For more information, see the MinimumBandwidthMode parameter in New-VMSwitch.

Enable and configure QoS for all virtual network adapters. Assign a weight to all virtual adapters. For more information, see Set-VMNetworkAdapter. To make sure that all virtual adapters have a weight, configure the DefaultFlowMinimumBandwidthWeight parameter on the virtual switch to a reasonable value. For more information, see Set-VMSwitch.

The following table recommends some generic weight values. You can assign a value from 1 to 100. For guidelines to consider when you assign weight values, see Guidelines for using Minimum Bandwidth.

| Network Classification | Weight |
|---|---|
| Default weight | 0 |
| Virtual machine access | 1, 3 or 5 (low, medium and high-throughput virtual machines) |
| Cluster | 10 |
| Management | 10 |
| Replica traffic | 10 |
| Live migration | 40 |
| Storage | 40 |
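Here is a sketch that applies these weights. It assumes a hypothetical team `ClusterTeam`, host virtual adapters named `Management`, `Cluster`, `LiveMigration` and `Storage`, and a virtual machine `WebVM01`. You can choose weight mode only when the switch is created, so `New-VMSwitch` comes first.

```powershell
# Weight-mode minimum bandwidth must be chosen when the switch is created.
New-VMSwitch -Name "ConvergedSwitch" -NetAdapterName "ClusterTeam" `
    -MinimumBandwidthMode Weight -AllowManagementOS $false

# Host virtual adapters and their weights, taken from the table above.
$weights = [ordered]@{ Management = 10; Cluster = 10; LiveMigration = 40; Storage = 40 }
foreach ($name in $weights.Keys) {
    Add-VMNetworkAdapter -ManagementOS -Name $name -SwitchName "ConvergedSwitch"
    Set-VMNetworkAdapter -ManagementOS -Name $name -MinimumBandwidthWeight $weights[$name]
}

# A medium-throughput virtual machine (1, 3 or 5 per the table).
Set-VMNetworkAdapter -VMName "WebVM01" -MinimumBandwidthWeight 3
```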

Virtual machine queue (VMQ) recommendations

Virtual machine queue (VMQ) is a feature that is available to computers that have VMQ-capable network hardware. VMQ uses hardware packet filtering to deliver packet data from an external virtual network directly to virtual network adapters. This reduces the overhead of routing packets. When VMQ is enabled, a dedicated queue is established on the physical network adapter for each virtual network adapter that has requested a queue.

Not all physical network adapters support VMQ. Those that do support VMQ have a fixed number of queues available, and that number varies by adapter. To determine whether a network adapter supports VMQ, and how many queues it supports, use the Get-NetAdapterVmq cmdlet.

You can assign virtual machine queues to any virtual network adapter. This includes virtual network adapters that are exposed to the management operating system. Queues are assigned according to a weight value, on a first-come, first-served basis. By default, all virtual adapters have a weight of 100.
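For example, this read-only sketch lists VMQ support and queue counts for each physical adapter, along with the queues currently allocated:

```powershell
# VMQ capability, enablement and number of receive queues per adapter.
Get-NetAdapterVmq | Format-Table Name, Enabled, NumberOfReceiveQueues

# Queues currently allocated on the adapters.
Get-NetAdapterVmqQueue
```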

Recommendations

We recommend that you increase the VMQ weight for interfaces with heavy inbound traffic, such as storage and live migration networks. To do this, use the Set-VMNetworkAdapter Windows PowerShell cmdlet.
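Here is a sketch with hypothetical adapter names. `-VmqWeight` accepts values from 0 to 100, where 0 disables VMQ for that adapter. The default is already 100. One practical way to favor storage and live migration adapters is therefore to keep them at 100 and lower the weight of less important adapters.

```powershell
# Keep the heavy-inbound host adapters at the maximum weight.
Set-VMNetworkAdapter -ManagementOS -Name "Storage" -VmqWeight 100
Set-VMNetworkAdapter -ManagementOS -Name "LiveMigration" -VmqWeight 100

# Lower the weight of a less important adapter (hypothetical VM name).
Set-VMNetworkAdapter -VMName "TestVM01" -VmqWeight 50

# Review the weights on the host adapters.
Get-VMNetworkAdapter -ManagementOS | Format-Table Name, VmqWeight
```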

Example of converged networking: routing traffic through one Hyper-V virtual switch

The following Windows PowerShell script shows an example of how you can route traffic on a Hyper-V cluster through one Hyper-V external virtual switch. The example uses two physical 10 Gbps network adapters that are teamed by using the NIC Teaming feature. The script configures a Hyper-V cluster node with a management interface, a live migration interface, a cluster interface, and four SMB interfaces. After the script, there is more information about how to add an interface for Hyper-V Replica traffic. The following diagram shows the example network configuration.

Figure 3. Example Hyper-V cluster network configuration

The example also configures network isolation which restricts cluster traffic from the management interface, restricts SMB traffic to the SMB interfaces, and restricts live migration traffic to the live migration interface.
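Here is a sketch of the host-side configuration for this design. It rests on several assumptions: hypothetical physical adapter names `10GBPort1` and `10GBPort2`, hypothetical VLAN IDs and addresses, one subnet per host adapter, and a storage weight of 40 split evenly across the four SMB adapters.

```powershell
# Sketch only: every name, VLAN ID and address below is hypothetical.
$teamName   = "ConvergedNetTeam"
$switchName = "ConvergedNetSwitch"

# 1. Team the two 10 Gbps adapters (Switch Independent, Hyper-V Port).
New-NetLbfoTeam -Name $teamName -TeamMembers "10GBPort1", "10GBPort2" `
    -TeamingMode SwitchIndependent -LoadBalancingAlgorithm HyperVPort -Confirm:$false

# 2. One external switch on the team, QoS in weight mode, and no automatic
#    host adapter (the host adapters are added explicitly below).
New-VMSwitch -Name $switchName -NetAdapterName $teamName `
    -MinimumBandwidthMode Weight -AllowManagementOS $false

# 3. Host adapters, each on its own VLAN and subnet, because Failover Clustering
#    recognizes only one IP address per subnet on each node.
#    The four SMB adapters share the storage weight of 40 (10 each).
$hostAdapters = @(
    @{ Name = "Management";    VlanId = 10; Weight = 10; IP = "10.0.10.11" }
    @{ Name = "Cluster";       VlanId = 20; Weight = 10; IP = "10.0.20.11" }
    @{ Name = "LiveMigration"; VlanId = 30; Weight = 40; IP = "10.0.30.11" }
    @{ Name = "SMB1";          VlanId = 41; Weight = 10; IP = "10.0.41.11" }
    @{ Name = "SMB2";          VlanId = 42; Weight = 10; IP = "10.0.42.11" }
    @{ Name = "SMB3";          VlanId = 43; Weight = 10; IP = "10.0.43.11" }
    @{ Name = "SMB4";          VlanId = 44; Weight = 10; IP = "10.0.44.11" }
)

foreach ($a in $hostAdapters) {
    Add-VMNetworkAdapter -ManagementOS -Name $a.Name -SwitchName $switchName
    Set-VMNetworkAdapterVlan -ManagementOS -VMNetworkAdapterName $a.Name -Access -VlanId $a.VlanId
    Set-VMNetworkAdapter -ManagementOS -Name $a.Name -MinimumBandwidthWeight $a.Weight

    # Windows names each host adapter "vEthernet (<Name>)".
    New-NetIPAddress -InterfaceAlias "vEthernet ($($a.Name))" -IPAddress $a.IP -PrefixLength 24
}

# 4. Restrict SMB traffic to the (hypothetical) file server to the SMB adapters.
New-SmbMultichannelConstraint -ServerName "FileServer1" -InterfaceAlias `
    "vEthernet (SMB1)", "vEthernet (SMB2)", "vEthernet (SMB3)", "vEthernet (SMB4)"
```

The Management adapter would also need a default gateway and DNS servers, which the sketch omits. After you create the cluster, you can apply the cluster-side isolation with the `Role` property of each cluster network and the `MigrationExcludeNetworks` parameter. Both are described earlier in this topic.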

Hyper-V Replica considerations

If you also use Hyper-V Replica in your environment, you can add another virtual network adapter to the management operating system for replica traffic. For example:
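Here is a minimal sketch, assuming a weight-mode virtual switch with the hypothetical name `ConvergedNetSwitch`. The weight of 10 comes from the Replica row of the weight table.

```powershell
# Add a host virtual adapter dedicated to replica traffic.
Add-VMNetworkAdapter -ManagementOS -Name "Replica" -SwitchName "ConvergedNetSwitch"

# Give it the Replica weight from the QoS table.
Set-VMNetworkAdapter -ManagementOS -Name "Replica" -MinimumBandwidthWeight 10
```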

If you are instead using policy-based QoS, where you can throttle outgoing traffic regardless of the interface on which it is sent, one way to throttle Hyper-V Replica traffic is to create a QoS policy that is based on the destination port. For example, the network listener on the Replica server or cluster might be configured to use port 8080 to receive replication traffic.
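Here is a sketch of such a policy. Run it on the primary server, because QoS shapes only outbound traffic. The policy name and the limit of roughly 100 Mbps are hypothetical. Port 8080 is the listener port from the text.

```powershell
# Throttle outbound TCP traffic to port 8080 to about 100 Mbps.
New-NetQosPolicy -Name "Replication" -IPProtocolMatchCondition TCP `
    -IPDstPortMatchCondition 8080 -ThrottleRateActionBitsPerSecond 100000000

# Review the policy.
Get-NetQosPolicy -Name "Replication"
```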

Appendix: Encryption

Cluster traffic

By default, cluster communication is not encrypted. You can enable encryption if you want. However, realize that there is performance overhead that is associated with encryption. To enable encryption, you can use the following Windows PowerShell command to set the security level for the cluster.
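Here is a minimal sketch, assuming the FailoverClusters module on a cluster node. The value 2 (encrypted) comes from the table that follows.

```powershell
Import-Module FailoverClusters

# 2 = encrypted; the default is 1 (signed).
(Get-Cluster).SecurityLevel = 2

# Confirm the new setting.
(Get-Cluster).SecurityLevel
```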

The following table shows the different security level values.

| Security Description | Value |
|---|---|
| Clear text | 0 |
| Signed (default) | 1 |
| Encrypted | 2 |

Live migration traffic

Live migration traffic is not encrypted. You can enable IPsec or other network layer encryption technologies if you want. However, realize that encryption technologies typically affect performance.

SMB traffic

By default, SMB traffic is not encrypted. Therefore, we recommend that you use a dedicated network (physical or logical) or use encryption. For SMB traffic, you can use SMB encryption, layer-2 or layer-3 encryption. SMB encryption is the preferred method.
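Here is a sketch of enabling SMB encryption on the file server. It assumes a hypothetical share named `VMStore` that holds the virtual machine files. SMB encryption requires SMB 3.0 on both the file server and the Hyper-V host.

```powershell
# Require encryption for the share that holds the VM files.
Set-SmbShare -Name "VMStore" -EncryptData $true -Force

# Alternatively, require encryption for every share on this file server.
# Set-SmbServerConfiguration -EncryptData $true -Force
```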

Replica traffic

If you use Kerberos-based authentication, Hyper-V Replica traffic is not encrypted. We strongly recommend that you encrypt replication traffic that transits public networks over the WAN or the Internet. We recommend Secure Sockets Layer (SSL) encryption as the encryption method. You can also use IPsec. However, realize that using IPsec may significantly affect performance.
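Here is a sketch of enabling certificate-based (HTTPS) authentication on the Replica server, which is what makes Hyper-V Replica encrypt the traffic. The certificate subject filter, port and storage path are hypothetical. The certificate must already be installed in the computer's personal store.

```powershell
# Pick the replication certificate from the local machine's personal store.
$cert = Get-ChildItem Cert:\LocalMachine\My |
    Where-Object { $_.Subject -like "*replica.contoso.com*" } |
    Select-Object -First 1

# Accept replication over HTTPS (certificate authentication) only.
Set-VMReplicationServer -ReplicationEnabled $true `
    -AllowedAuthenticationType Certificate `
    -CertificateAuthenticationPort 443 `
    -CertificateThumbprint $cert.Thumbprint `
    -ReplicationAllowedFromAnyServer $true `
    -DefaultStorageLocation "D:\ReplicaStorage"
```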
