
bash script read all the files in directory

How do I loop through a directory? I know about for f in /var/files;do echo $f;done; but the problem with that is it spits out all the files inside the directory at once. I want to go through them one by one and be able to do something with the $f variable. I think a while loop would be best suited for that, but I can't figure out how to actually write it.

Any help would be appreciated.

3 Answers

A simple for loop should work:
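A minimal sketch of such a loop, assuming bash or any POSIX shell. Note that for f in /var/files runs its body only once, for that single path; it is a trailing /* glob that expands to the entries of the directory, one per iteration. The function name print_each is only for illustration.

```shell
#!/bin/sh
# Visit the entries of a directory one at a time.
# print_each is a hypothetical name; pass the directory, e.g. /var.
print_each() {
    for f in "$1"/*; do
        [ -e "$f" ] || continue    # glob matched nothing (or broken link): skip
        echo "Processing: $f"      # do whatever you need with "$f" here
    done
}

# Usage: print_each /var
```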

To write it with a while loop you can do:
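A sketch of the while-loop form, assuming GNU or BSD ls, where -f turns off sorting and, like -a, also lists hidden entries, including . and .. (which are skipped below). The function name each_entry is hypothetical, and echo stands in for the real command.

```shell
#!/bin/sh
# Read directory entries one per line from ls and handle each in turn.
# ls -f skips sorting (helpful in huge directories) but also lists . and ..
each_entry() {
    ls -f "$1" | while IFS= read -r file; do
        case $file in
            .|..) continue ;;      # skip the directory self-links
        esac
        echo "$1/$file"            # stands in for: cmd "$1/$file"
        # The loop body runs in a subshell, so variables set here
        # are gone once the loop finishes.
    done
}

# Usage: each_entry /var
```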

The primary disadvantage of this is that cmd runs in a subshell, which makes it difficult to set variables that persist after the loop. The main advantages are that the shell does not need to load all of the filenames into memory, and there is no globbing. When you have a lot of files in the directory, those advantages are important. (That's why I use -f on ls: in a large directory, ls itself can take several tens of seconds to run, and -f speeds that up appreciably. In such cases, for file in /var/* will likely fail with a glob error.)

You can go without the loop:
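One loop-free way to do this is find with -exec ... {} +, which hands many file names to each run of the command. This is a sketch: the function name run_without_loop is hypothetical, and -maxdepth, while not in POSIX, is supported by GNU and BSD find.

```shell
#!/bin/sh
# Run a command on every regular file directly inside a directory, without
# writing a shell loop. Usage: run_without_loop DIR COMMAND [ARGS...]
run_without_loop() {
    dir=$1
    shift
    # "{} +" appends as many file names as fit to one run of the command
    find "$dir" -maxdepth 1 -type f -exec "$@" {} +
}

# Usage: run_without_loop /var/files wc -l
```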


site design / logo © 2021 Stack Exchange Inc; user contributions licensed under cc by-sa. rev 2021.10.8.40416


Get a list of all files in folder and sub-folder in a file

How do I get a list of all files in a folder, including all the files within all the subfolders and put the output in a file?

7 Answers

You can do this on the command line by using ls with the -R (recursive) switch and redirecting the output to a file:

This will make a file called filename1 in the current directory, containing a full directory listing of the current directory and all of the subdirectories under it.

You can list directories other than the current one by specifying the full path, e.g.:

This will list everything in and under /var and put the results in a file called filename2 in the current directory. This works on directories owned by other users, including root, as long as you have read access to them.

You can also list directories you don't have access to, such as /root, by using the sudo command, e.g.:

This would list everything in /root, putting the results in a file called filename3 in the current directory. Since most Ubuntu systems have nothing in this directory, filename3 will be nearly empty, but the command works when there is something there.

Just use the find command with the directory name. For example, to see the files, and all files within folders, in your home directory, use:

Check the manual page for the find command (man find).

Also check the GNU info page for find by running info find in a terminal.

An alternative to recursive ls is the command-line tool tree, which comes with quite a lot of options to customize the format of the displayed output. See the manpage for tree for all options.

will give you the same as tree using other characters for the lines.

to display hidden files too

to not display lines

- Go to the folder you want to get a content list from.

- Select the files you want in your list (Ctrl+A if you want the entire folder).

- Copy the content with Ctrl+C.

- Open gedit and paste the content using Ctrl+V. It will be pasted as a list, and you can then save the file.

This method will not include the contents of subfolders, though.

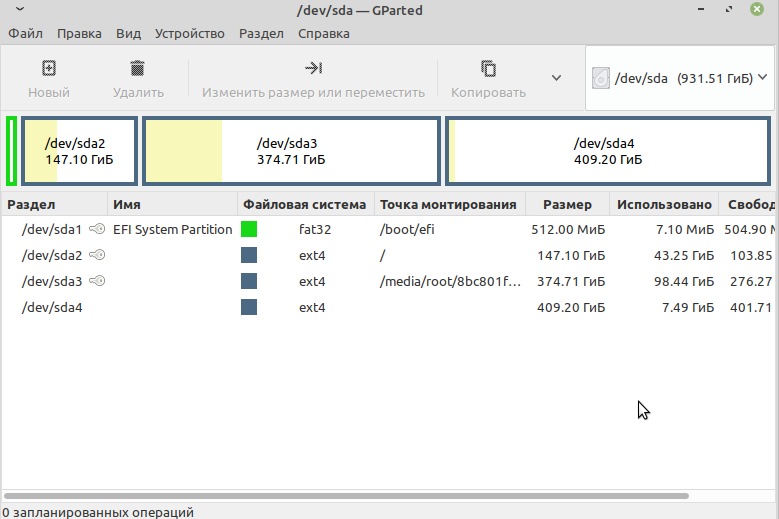

You could also use Baobab, the GUI counterpart to Takkat's tree suggestion. It is used to view folders and subfolders, often for the purpose of analysing disk usage. You may have it installed already if you are using a GNOME desktop (it is often called Disk Usage Analyzer).

You can select a folder and also view all its subfolders, while also getting the sizes of the folders and their contents, as the screenshot shows. Just click the small down arrow to view a subfolder within a folder. It is very useful for getting a quick insight into what you've got in your folders and can produce viewable lists, but at the moment it cannot export them to a file. That has, however, been requested as a feature on Launchpad. You can even use it to view the root filesystem if you run gksudo baobab.

(You can also get a list of files with their sizes by using ls -shR.)

How to list all files in a directory with absolute paths

I need a file (preferably a .list file) which contains the absolute path of every file in a directory.

Example dir1: file1.txt file2.txt file3.txt

How can I accomplish this on Linux/macOS?

10 Answers

You can use find. Assuming that you want only regular files, you can do:
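A sketch, assuming the goal is absolute paths: find prints each path starting from the directory it was given, so passing an absolute starting point (here $PWD after changing into the target directory) yields absolute paths. The function name list_abs_files is hypothetical.

```shell
#!/bin/sh
# Write the absolute path of every regular file under a directory.
# Usage: list_abs_files dir1 > listOfFiles.list
list_abs_files() {
    # The subshell keeps the cd from affecting the caller.
    (cd "$1" && find "$PWD" -type f)
}
```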

You can adjust the type parameter as appropriate if you want other types of files.

It's the shell that computes the list of (non-hidden) files in the directory and passes the list to ls; ls just prints that list here, so you could just as well do:

Note that it doesn't include hidden files, includes files of any type (including directories) and if there's no non-hidden file in the directory, in POSIX/csh/rc shells, you'd get /current/wd/* as output. Also, since the newline character is as valid as any in a file path, if you separate the file paths with newline characters, you won't be able to use that resulting file to get back to the list of files reliably.
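For example, assuming a POSIX shell: the shell expands "$PWD"/* to absolute paths and printf prints one per line, subject to the caveats that follow. The function name list_abs_entries is hypothetical.

```shell
#!/bin/sh
# Print the absolute path of each non-hidden entry of a directory, one per
# line, without calling ls: the glob does the listing, printf the printing.
list_abs_entries() {
    (cd "$1" && printf '%s\n' "$PWD"/*)
}
```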

With the zsh shell, you could do instead:

- -rC1: print raw (-r), in 1 column (-C1).

- -N: output records are NUL-delimited instead of newline-delimited (lines), as NUL is the only character that can't be found in a file path.

- N: expand to nothing if there's no matching file (nullglob).

- D: include hidden files (dotglob).

- -.: include only regular files (.) after symlink resolution (-).

Then, you’d be able to do something like:

To remove those files for instance.
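Outside zsh, a comparable NUL-delimited list can be produced with find -print0 and consumed with xargs -0; both are available in GNU and BSD tools, though not in POSIX. This is a swapped-in technique rather than the zsh command above, and unlike the zsh -. qualifier it does not count symlinks to regular files. The function names are hypothetical.

```shell
#!/bin/sh
# Save the absolute paths of the regular files (hidden ones included)
# directly in a directory as a NUL-delimited list, then act on that list.
make_nul_list() {   # usage: make_nul_list DIR LISTFILE
    (cd "$1" && find "$PWD" -maxdepth 1 -type f -print0) > "$2"
}

remove_listed() {   # usage: remove_listed LISTFILE
    # -0 splits on NUL only, so spaces and newlines in names are safe;
    # "--" stops rm from treating a name as an option.
    xargs -0 rm -f -- < "$1"
}
```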

You could also use the :P modifier in the glob qualifiers to get the equivalent of realpath() on the files expanded from the globs (it gives a full path free of any symlink component):

To see just regular files:

Another way, not mentioned here yet, is tree. It works recursively, and unlike find or ls it doesn't print errors (such as Permission denied or Not a directory). You also get absolute paths, in case you want to feed the files to xargs or another command.

The options mean:

To install tree:

sudo apt install tree on Ubuntu/Debian

sudo yum install tree on CentOS/Fedora

sudo zypper install tree on openSUSE
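A sketch of such a tree invocation, assuming tree is installed: -f prints the full path of each entry (absolute when the starting directory is absolute), -a includes hidden files, -i drops the indentation lines, and --noreport omits the closing file and directory count. The first line printed is the starting directory itself. The function name is hypothetical.

```shell
#!/bin/sh
# List every file and directory under DIR, recursively, one full path per line.
# Usage: tree_abs_list "$PWD" > listOfFiles.list
tree_abs_list() {
    tree -fai --noreport "$1"
}
```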

You can just use realpath or readlink this naughty way:

When ls prints to a TTY, it formats the file names in columns, but when it's writing to a file, pipe, or other non-TTY, it behaves like ls -1 and prints one file name per line. You can check this by running ls | cat in place of ls. [1]

- xargs: build and execute command lines from standard input.

- realpath: return the canonicalized absolute pathname.

- readlink: read the value of a symbolic link.

- Use realpath -- to make it treat everything that follows as arguments instead of options, in case file names could start with a dash (e.g. -something).

- If some file names contain spaces, you could:
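A sketch of both variants described above, assuming a realpath command is available (GNU coreutils provides one, as do recent macOS releases). The second form NUL-delimits the names so xargs never splits them on blanks. The function names are hypothetical, and neither is meant for an empty directory.

```shell
#!/bin/sh
# Print the canonical absolute path of every entry in a directory.
abs_names() {
    # ls writes one name per line into the pipe; xargs splits on blanks
    # and interprets quotes, so names with spaces or quotes break this.
    (cd "$1" && ls | xargs realpath --)
}

abs_names_spaces() {
    # NUL-delimit the names so that spaces and quotes survive intact.
    (cd "$1" && printf '%s\0' * | xargs -0 realpath --)
}
```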

In a past Linux environment, I had a resolve command that would standardize paths, including making a relative path into an absolute path. I can’t find it now, so maybe it was written by someone in that organization.

You can make your own script using functions in the Python or Perl standard libraries (and probably other languages too).

Then, you would solve your problem with:

With this command, you can also do things like this:

Files can be listed recursively in many ways in Linux. Here I am sharing a one-liner to clear the contents of all log files (regular files only) in the /var/log/ directory, and a second one to check which log files have recently received an entry.

Note: for the directory location you can also pass $PWD instead of /var/log.
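A sketch of the two tasks described, written as functions that take the directory as an argument so they can be tried on a scratch copy first; emptying the real /var/log discards logs and requires root. Files are truncated in place rather than deleted, and -mmin (minutes since last modification), while not POSIX, is supported by GNU and BSD find. The function names are hypothetical.

```shell
#!/bin/sh
# Empty (truncate to zero bytes) every regular file under DIR, recursively.
clear_logs() {      # usage: clear_logs /var/log
    find "$1" -type f -exec sh -c 'for f in "$@"; do : > "$f"; done' sh {} +
}

# List the regular files under DIR modified within the last N minutes.
recent_logs() {     # usage: recent_logs /var/log 60
    find "$1" -type f -mmin "-${2:-60}"
}
```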

To list the full path of all commands (apps/programs) accessible to the user (revised to address most, but not all, of the limitations outlined in the comments):

NOTE

The PATH variable may have a colon (:) at the beginning or at the end, but normally not both. A colon at the beginning or end means the current directory is searched as well. Standard practice, when one is present, is to put it at the end so that it never overrides standard utility programs. The sed substitutions here handle either case.

Explanation.

- eval

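Frankly, I don't know why eval is needed here, but it is. (A likely reason: brace expansion happens before parameter expansion and command substitution, so braces that appear only in expanded text are never expanded unless eval re-parses the line.) Without it, I get: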

ls: cannot access ‘./{.,}[[:word:]]’: No such file or directory

ls: cannot access ‘/home/alpha/bin/{.,}[[:word:]]’: No such file or directory

ls: cannot access ‘/usr/local/sbin/{.,}[[:word:]]’: No such file or directory

ls: cannot access ‘/usr/local/bin/{.,}[[:word:]]’: No such file or directory

ls: cannot access ‘/usr/sbin/{.,}[[:word:]]’: No such file or directory

ls: cannot access ‘/usr/bin/{.,}[[:word:]]’: No such file or directory

ls: cannot access ‘/sbin/{.,}[[:word:]]’: No such file or directory

ls: cannot access ‘/bin/{.,}[[:word:]]’: No such file or directory
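As an alternative that needs no eval, here is a sketch that walks a PATH-style string with parameter expansion instead of building brace and glob patterns; it is not the answer's own command. An empty component (from a leading, trailing, or doubled colon) is treated as the current directory, as described in the NOTE. The function name is hypothetical.

```shell
#!/bin/sh
# Print the path of every executable regular file in each directory listed
# in a colon-separated search path (default: $PATH).
list_path_commands() {
    rest=${1-$PATH}:            # trailing ":" so the last component is read
    while [ -n "$rest" ]; do
        dir=${rest%%:*}         # text up to the first colon
        rest=${rest#*:}         # drop that component and its colon
        [ -n "$dir" ] || dir=.  # empty component means the current directory
        for cmd in "$dir"/*; do
            if [ -f "$cmd" ] && [ -x "$cmd" ]; then
                printf '%s\n' "$cmd"
            fi
        done
    done
}
```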


Recursively list all files in a directory including files in symlink directories

Suppose I have a directory /dir inside which there are 3 symlinks to other directories /dir/dir11 , /dir/dir12 , and /dir/dir13 . I want to list all the files in dir including the ones in dir11 , dir12 and dir13 .

To be more generic, I want to list all files, including the ones in directories that are symlinks. find ., ls -R, etc. stop at the symlink without descending into it to list further.

8 Answers

The -L option to ls will accomplish what you want. It dereferences symbolic links.

So your command would be:

You can also accomplish this with

The -follow option directs find to follow symbolic links to directories.

On Mac OS X use

as -follow has been deprecated.
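A self-contained demonstration, assuming GNU or BSD find and ls (find -L is the current spelling of -follow): it builds a directory whose only entry is a symlink to another directory and compares listings with and without following links. All paths are throwaway examples.

```shell
#!/bin/sh
# Scratch layout: $base/dir/dir11 -> $base/real, which holds file.txt
base=$(mktemp -d)
mkdir "$base/real" "$base/dir"
touch "$base/real/file.txt"
ln -s "$base/real" "$base/dir/dir11"

find "$base/dir" -type f       # nothing: dir11 is a link, so find stops there
find -L "$base/dir" -type f    # .../dir/dir11/file.txt: the link is followed
ls -LR "$base/dir"             # ls descends into dir11 as well
```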

How about tree? tree -l will follow symlinks.

Disclaimer: I wrote this package.

-type f means it will display regular files only (with -follow, this also matches symlinks that point to regular files)

-follow means it will follow your directory symlinks

-print will cause it to display the filenames.

If you want an ls-style display, you can do the following:

From the find manpage:

If you find you want to follow only a few symbolic links (like maybe just the top-level ones you mentioned), you should look at the -H option, which only follows symlinks that you pass to it on the command line.
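To illustrate the difference, a sketch assuming GNU or BSD find: -H follows a symlink only when it is named on the command line, while -L follows every symlink it meets. The scratch layout is hypothetical.

```shell
#!/bin/sh
# Scratch layout:
#   $base/top          -> $base/real     (link named on the command line)
#   $base/real/inner   -> $base/other    (link found while descending)
base=$(mktemp -d)
mkdir "$base/real" "$base/other"
touch "$base/real/a.txt" "$base/other/b.txt"
ln -s "$base/other" "$base/real/inner"
ln -s "$base/real" "$base/top"

find -H "$base/top" -type f    # only top/a.txt: inner is not followed
find -L "$base/top" -type f    # top/a.txt and top/inner/b.txt
```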

I knew tree was appropriate, but I didn't have it installed, so I found a pretty close alternative here:

-L: Follow symbolic links. When find examines or prints information about files, the information used shall be taken from the properties of the file to which the link points, not from the link itself (unless it is a broken symbolic link or find is unable to examine the file to which the link points). Use of this option implies -noleaf. If you later use the -P option, -noleaf will still be in effect. If -L is in effect and find discovers a symbolic link to a subdirectory during its search, the subdirectory pointed to by the symbolic link will be searched.

-L dereferences symbolic links. This also makes it impossible to see any symlinks to files, though: they'll look like the pointed-to file.

In case you would like to print the contents of all files: find . -type f -exec cat {} +


Execute command on all files in a directory

Could somebody please provide the code to do the following? Assume there is a directory of files, all of which need to be run through a program. The program outputs its results to standard output. I need a script that will go into a directory, execute the command on each file, and concatenate the output into one big output file.

For instance, to run the command on 1 file:

10 Answers

The following bash code will pass $file to the command, where $file represents each file in /dir:

- -maxdepth 1 prevents find from recursively descending into any subdirectories. (If you want such nested directories to be processed, you can omit it.)

- -type f specifies that only plain files will be processed.

- -exec cmd option {} tells it to run cmd with the specified option for each file found, with the filename substituted for {}.

- \; denotes the end of the command.

- Finally, the output from all the individual cmd executions is redirected to results.out.
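A runnable sketch of the find command those points describe, assuming GNU or BSD find (-maxdepth is not in POSIX). cat stands in for cmd option, and the directory is a throwaway example; the results file sits outside the searched directory so it is not processed itself.

```shell
#!/bin/sh
dir=$(mktemp -d)
printf 'one\n' > "$dir/a.txt"
printf 'two\n' > "$dir/b.txt"
mkdir "$dir/nested"
printf 'skipped\n' > "$dir/nested/c.txt"

# One run of the command per plain file at depth 1; every run's output
# goes to the same results file.
find "$dir" -maxdepth 1 -type f -exec cat {} \; > "$dir.results.out"
```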

However, if you care about the order in which the files are processed, you might be better off writing a loop. find processes files in the order in which the filesystem returns the directory entries, which is generally not sorted, whereas a shell glob such as /dir/* expands in sorted order.

I'm doing this on my Raspberry Pi from the command line by running:

The accepted and highly voted answers are great, but they lack a few nitty-gritty details. This post covers how to better handle the cases where shell pathname expansion (globbing) fails and where filenames contain embedded newlines or dashes, and how to move the output redirection out of the for loop when writing the results to a file.

When the shell expands a glob such as *, the expansion can fail if there are no matching files in the directory; the unexpanded glob string is then passed to the command, which could have undesirable results. Bash provides the nullglob shell option for this case. So, run inside the directory containing your files, the loop becomes:
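A minimal bash sketch of that loop; printf stands in for the real command, and the function name is hypothetical. Giving the function a ( ... ) body runs it in a subshell, so the nullglob setting does not leak into the calling shell.

```shell
#!/bin/bash
# Loop over the files in the current directory; with nullglob an empty
# directory makes ./* expand to nothing, so the body never runs.
process_here() (
    shopt -s nullglob
    for file in ./*; do
        printf 'processing %s\n' "$file"   # e.g. cmd [option] -- "$file"
    done
)
```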

This lets the for loop exit safely when ./* doesn't match any files (that is, when the directory is empty).

or, in a POSIX-compliant way (nullglob is bash-specific):
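A POSIX sh sketch of the same loop, with cat standing in for the real command; process_posix is a hypothetical name.

```shell
#!/bin/sh
# POSIX version: no nullglob, so guard each item with a regular-file test.
process_posix() {
    for file in ./*; do
        [ -f "$file" ] || continue   # skips the unexpanded "./*" and directories
        cat -- "$file"               # "--" ends option parsing before the name
    done
}
```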

Here, when the glob fails, the loop body runs once with the unexpanded string ./*, and the [ -f "$file" ] condition checks whether that string is a valid filename in the directory, which it isn't. On that failure, continue moves on to the next iteration, and since there are no more items, the loop ends.

Also note the use of -- just before the file name argument. This is needed because, as noted previously, filenames can contain dashes anywhere in the name, and some commands treat an argument that begins with a dash as an option, running as if that flag had been provided. Quoting does not prevent this.

The -- signals the end of command-line options, which means the command shouldn't parse any arguments after this point as flags, only as filenames.

Double-quoting the filenames properly handles names that contain glob characters or whitespace. But *nix filenames can also contain newlines. So we delimit filenames with the only character that cannot appear in a file path: the null byte (\0). Since bash internally uses C-style strings, in which the null byte marks the end of a string, it is the right candidate for this.

So, using the shell's printf to delimit file names with this null byte, and the -d option of read to split on it, we can do the following:
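A bash sketch of this approach; printf '[%s]\n' stands in for the real command so that embedded newlines stay visible, and the function name is hypothetical. One subtlety: with nullglob and no matches, printf '%s\0' still runs its format once and emits a single empty record, so the loop skips empty names.

```shell
#!/bin/bash
# NUL-delimit the glob results and split them again with read -d '' so that
# names containing newlines arrive intact.
process_nul() {
    (shopt -s nullglob; printf '%s\0' ./*) |
        while IFS= read -r -d '' file; do
            [ -n "$file" ] || continue   # empty record from an empty glob
            printf '[%s]\n' "$file"      # e.g. cmd [option] -- "$file"
        done
}
```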

The shopt -s nullglob and the printf are wrapped in ( ... ), which means they run in a subshell (child shell), so that the nullglob option does not persist in the parent shell once the command exits. The -d '' option of read is not POSIX-compliant, so this needs bash. Using find, this can be done as:

For find implementations that don't support -print0 (the GNU and FreeBSD implementations do), this can be emulated using printf:
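Sketches of both find variants, assuming bash for read -d ''. The first relies on -print0 (GNU and BSD find); the second produces the same NUL-terminated stream by running the printf utility through -exec, for finds without -print0. Both recurse into subdirectories, and the function names are hypothetical.

```shell
#!/bin/bash
# Each path arrives NUL-terminated, so any file name is read back intact.
process_find() {
    find "$1" -type f -print0 |
        while IFS= read -r -d '' file; do
            printf '[%s]\n' "$file"      # e.g. cmd [option] -- "$file"
        done
}

# Same stream for finds lacking -print0: printf '%s\0' prints each name
# passed to it followed by a NUL byte.
process_find_portable() {
    find "$1" -type f -exec printf '%s\0' {} + |
        while IFS= read -r -d '' file; do
            printf '[%s]\n' "$file"
        done
}
```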

Another important fix is to move the redirection out of the for loop to reduce the amount of file I/O. When the redirection is inside the loop, the shell has to make two system calls on each iteration, one to open and one to close the file descriptor associated with the file. This becomes a performance bottleneck for large numbers of iterations, so the recommendation is to move it outside the loop.

Extending the code above with these fixes, you could do:

With the redirection on the loop as a whole, the shell opens the target file once, before the loop starts, and each iteration's command output goes straight into it; the file is closed when the loop ends. The equivalent find version would be:
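Putting the pieces together, a bash sketch with the redirection moved onto the loop; cat stands in for cmd [option], and the function names are hypothetical. Keep the output file outside the directory being processed: the shell creates it before the glob is expanded, so otherwise the loop would pick it up too. The find variant applies the same single redirection to one find command.

```shell
#!/bin/bash
# Run cat (standing in for cmd [option]) on every regular file in DIR and
# write all of the output to OUT, which is opened once for the whole loop.
run_all() (          # usage: run_all DIR /absolute/path/results.out
    cd -- "$1" || exit 1
    shopt -s nullglob
    for file in ./*; do
        [ -f "$file" ] || continue
        cat -- "$file"
    done > "$2"
)

# find variant: one redirection around the whole find command.
run_all_find() {     # usage: run_all_find DIR /absolute/path/results.out
    find "$1" -maxdepth 1 -type f -exec cat -- {} + > "$2"
}
```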
